B2G/QA/Continuous Integration infrastructure

Transition from Jenkins to Taskcluster

Our continuous integration platform changed significantly twice. The 3 stages of that transformation are described below.

Live presentation: video and slides.

One Jenkins grid in the Mountain View lab

- URL: http://jenkins1.qa.scl3.mozilla.com/ (requires VPN access)

- Detailed presentation of it: video and slides

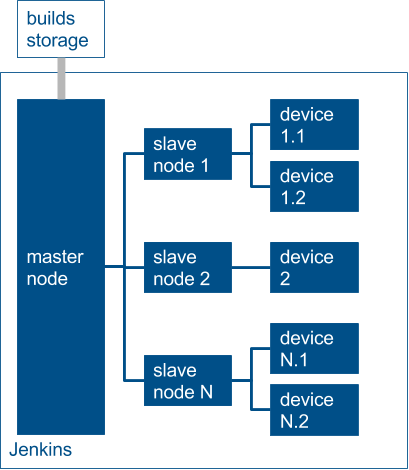

This starts like a typical Jenkins grid with a master node and many slave nodes (28, in our case). Each node has one or two devices plugged in. The only differences between nodes lie in the devices: some have 1 or 2 SIMs, some have none. We labeled the nodes according to which project was using the device (UI automation, performance, bisection, monkey testing).

We had different types of jobs for functional testing:

- download, which retrieves a build and distributes it to the next jobs

- sanity, a small set of tests run against the inbound branches

- smoke, run against the master builds (twice a day)

- dsds, the tests which verify the proper functioning when 2 SIMs are used

- api (aka "unit"), which verifies that the Gecko APIs used for setting up and tearing down work as intended

- non-smoke, all the extra tests that don't fit in the categories above

- imagecompare

Each of these jobs ran against 3 branches:

- mozilla-central => The central branch of Gecko + the master branch of Gaia

- mozilla-inbound => General Gecko patches come here before reaching master

- b2g-inbound => B2G-specific Gecko patches and Gaia land here first

We faced many scaling issues with this infrastructure:

- We didn't have 24-hour support if a phone failed

- We depended on the radio networks available at the office. Wi-Fi failed a couple of times, FM radio intermittently disappeared, and text messages were sometimes never received

- The more devices we added, the more interference we had.

For these reasons, we decided to contract out a part of the lab.

Further reading

Having devices outside of the lab

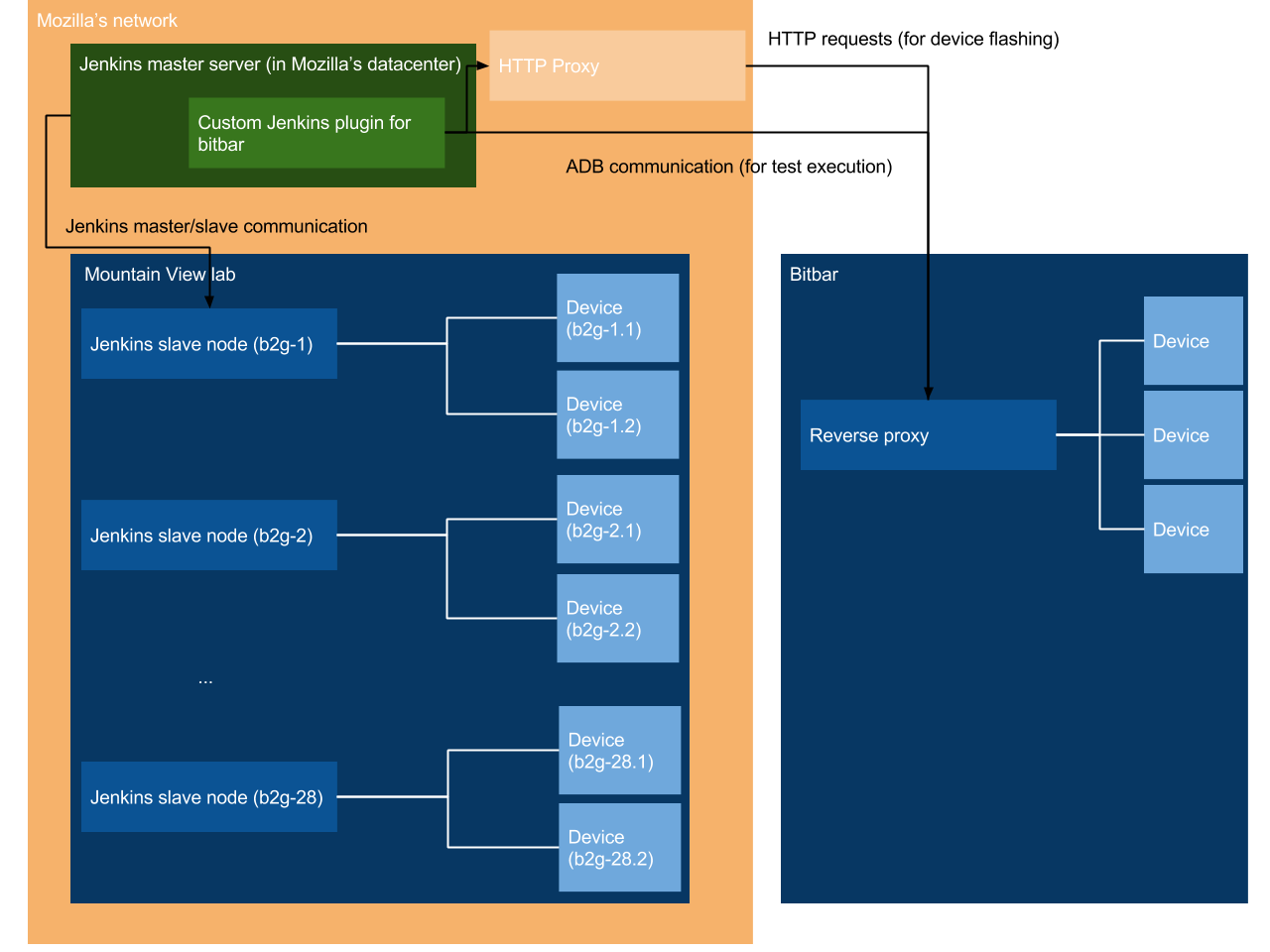

Bitbar hosted 50 of our devices, which were selectable and controllable from our Jenkins instance.

A Jenkins plugin enables remote flashing. It's in charge of finding an available device (depending on how many SIMs are needed), providing the expected configuration (RAM size, URL of the build to flash), and waiting until the flash is complete.
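The three responsibilities above (find a device, hand over the configuration, wait for the flash) can be sketched as follows. This is only an illustration, not the plugin's code: the `Device` fields, the `start_flash` and `is_flashed` callbacks, the exact-match rule on the SIM count, and the timeout values are all assumptions made for the example.

```python
import time
from dataclasses import dataclass
from typing import Callable, Iterable, Optional


@dataclass
class Device:
    """A remotely hosted phone (field names are hypothetical)."""
    device_id: str
    sim_count: int
    busy: bool = False


def find_available_device(devices: Iterable[Device],
                          sims_needed: int) -> Optional[Device]:
    """Return the first idle device with the requested number of SIMs.

    Matching on the exact SIM count is an assumption of this sketch.
    """
    for device in devices:
        if not device.busy and device.sim_count == sims_needed:
            return device
    return None


def wait_for_flash(is_flashed: Callable[[], bool],
                   timeout_s: float = 1800.0,
                   poll_interval_s: float = 10.0,
                   sleep: Callable[[float], None] = time.sleep) -> bool:
    """Poll is_flashed() until it returns True or the timeout elapses."""
    deadline = time.monotonic() + timeout_s
    while time.monotonic() < deadline:
        if is_flashed():
            return True
        sleep(poll_interval_s)
    return False


def flash_remote_device(devices, sims_needed, build_url, ram_mb,
                        start_flash, is_flashed, sleep=time.sleep):
    """Pick a device, send it the expected configuration, wait for the flash."""
    device = find_available_device(devices, sims_needed)
    if device is None:
        raise RuntimeError("no idle device with %d SIM(s)" % sims_needed)
    device.busy = True
    # The configuration keys below are illustrative, not the real payload.
    start_flash(device, {"build_url": build_url, "ram_mb": ram_mb})
    if not wait_for_flash(lambda: is_flashed(device), sleep=sleep):
        raise TimeoutError("flashing %s did not complete" % device.device_id)
    return device
```

Passing `start_flash`, `is_flashed` and `sleep` as parameters keeps the sketch independent of any real remote API and makes it easy to exercise with fakes.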

Then ADB and Marionette ports are exposed so the master node can execute tests as if the device were locally present. Logcats can be retrieved the same way.

Due to the duality of the infrastructure, the number of jobs doubled. In the meantime, we also added new suites:

- brick

- dogfood

Both causes combined took us up to 90 jobs, all configured manually. As a result, we sometimes had to update 10 jobs one after the other just to change one parameter in the command line. A couple of times, a job didn't get updated correctly. Also, as the infrastructure grew, we had no way to publish changes for a teammate to review.

Another issue became more visible: the outside world had no idea whether a test was broken or could run, unless somebody manually filed a bug.

There were many ways to address these issues (like creating scripts to update many jobs at once, or making the jobs report to Treeherder). Nonetheless, we chose what follows.

Putting the test runners into containers

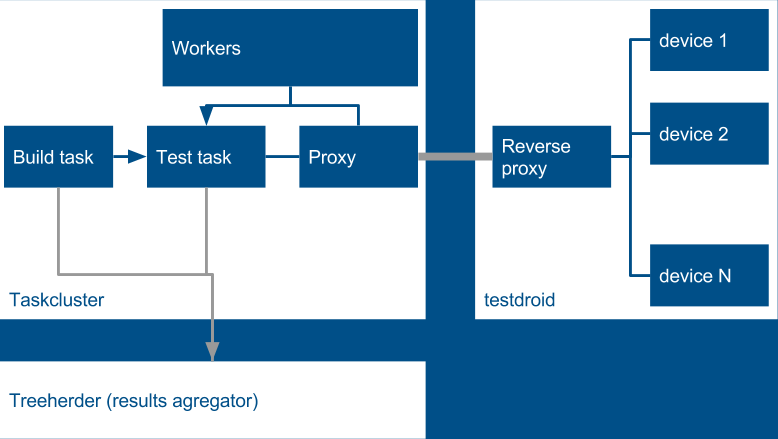

Taskcluster is the current way of making builds and running tests. It executes them on Amazon-hosted instances where we run Docker containers. Most of the architecture in the diagram is standard for Taskcluster (see its documentation). However, some parts are specific to the Bitbar connection.

Like the rest of Taskcluster, our test task (which includes flashing) is created by a build task. It runs within a Docker container (you need to be in the taskclusterprivate group to see it). This container holds testvars.json, the configuration file used by gaiatest.

A task is defined by its steps and configuration, which are written in YAML files that live in the Gecko repository (see below). We had 3 different types of tasks:

- The one which runs the regular end-to-end tests

- The other that executes the dsds tests (see first part)

- The last one which checks the Gecko APIs

The common part is centralized in b2g_e2e_tests_base_definition.yml. What is specific to a given task can be found under $GECKO_REPO/testing/taskcluster/tasks/tests (for example: b2g_e2e_tests.yml).
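To illustrate the idea of a shared base plus task-specific files, here is a minimal sketch of a recursive merge. Plain dictionaries stand in for the parsed YAML, and every key and value is hypothetical. The actual mechanism that combines the files in the Gecko repository may work differently.

```python
from copy import deepcopy


def merge_definitions(base: dict, overrides: dict) -> dict:
    """Return a new dict where overrides are layered on top of base.

    Nested dicts are merged key by key; any other value replaces the base one.
    """
    merged = deepcopy(base)
    for key, value in overrides.items():
        if isinstance(value, dict) and isinstance(merged.get(key), dict):
            merged[key] = merge_definitions(merged[key], value)
        else:
            merged[key] = deepcopy(value)
    return merged


# Hypothetical content standing in for the shared base definition.
base_definition = {
    "task": {
        "workerType": "hypothetical-device-worker",
        "payload": {"maxRunTime": 3600, "env": {"SIM_COUNT": "1"}},
    }
}

# Hypothetical content standing in for a task-specific file (dsds tests).
dsds_overrides = {
    "task": {
        "metadata": {"name": "hypothetical dsds tests"},
        "payload": {"env": {"SIM_COUNT": "2"}},
    }
}

dsds_task = merge_definitions(base_definition, dsds_overrides)
```

With this layout, a change to a shared setting is made once in the base and picked up by every task, which is what the 90 hand-configured Jenkins jobs lacked.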

Workers are meant to start tasks once prerequisites are met. In our specific case, we have 3 instances of the same worker: one for each number of SIMs (1, 2 and 0). These workers regularly poll the testdroid reverse proxy to know how many devices are available. Once one is available, the test task is woken up. This task communicates through the proxy, which is meant to centralize the authentication to Bitbar's service. Hence, no task has knowledge of the credentials used. It uses the JavaScript client. This client has the same responsibilities as the Jenkins plugin (find an available device, flash it, wait until the flash is done).
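The polling behaviour of one worker can be sketched like this. It is not the worker's real code (the client mentioned above is JavaScript): `fetch_availability` stands for a hypothetical call to the proxy that returns the number of free devices per SIM count, and the polling interval is an arbitrary value.

```python
import time
from typing import Callable, Dict, Optional


def claim_when_device_available(sim_count: int,
                                fetch_availability: Callable[[], Dict[int, int]],
                                start_task: Callable[[int], None],
                                poll_interval_s: float = 30.0,
                                max_polls: Optional[int] = None,
                                sleep: Callable[[float], None] = time.sleep) -> bool:
    """Poll until a device with sim_count SIMs is free, then start the task.

    Returns True if the task was started, False if max_polls was exhausted.
    With max_polls=None the worker polls forever.
    """
    polls = 0
    while max_polls is None or polls < max_polls:
        # fetch_availability() maps a SIM count to the number of free devices.
        available = fetch_availability().get(sim_count, 0)
        if available >= 1:
            start_task(sim_count)
            return True
        polls += 1
        sleep(poll_interval_s)
    return False
```

One such loop would run per worker instance, each with its own `sim_count` (0, 1 or 2). Because only the proxy talks to Bitbar, neither the loop nor the task it starts needs the credentials.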

As above, the test task talks to a device thanks to forwarded ADB/Marionette ports. Once the task is done, it reports to Treeherder, under these jobs. As you may notice, these jobs are only visible on the stage instance of Treeherder and only run against b2g-inbound. The main explanation is that the jobs were still failing intermittently at a high rate. We were working on making them stable, but this project has been sunset. For reference, bug 1225457 describes what was planned.