Security/Sandbox/Hardening

Contents

- 1 Hardening the Firefox Security Sandbox

- 2 Security Model Review

- 2.1 File access

- 2.2 Network connectivity

- 2.3 Graphics

- 2.4 DOM

- 2.5 Script Execution

- 2.6 Web Content (HTML, CSS, XML, SVG)

- 2.7 Printing & Fonts

- 2.8 TLS Stack

- 2.9 DevTools

- 2.10 Addons

- 2.11 WebRTC

- 2.12 Media Playback

- 2.13 Firefox Preferences

- 2.14 Printing

- 2.15 Other things: Places, History & Bookmarks etc?

- 3 IPC Hardening

Hardening the Firefox Security Sandbox

Background

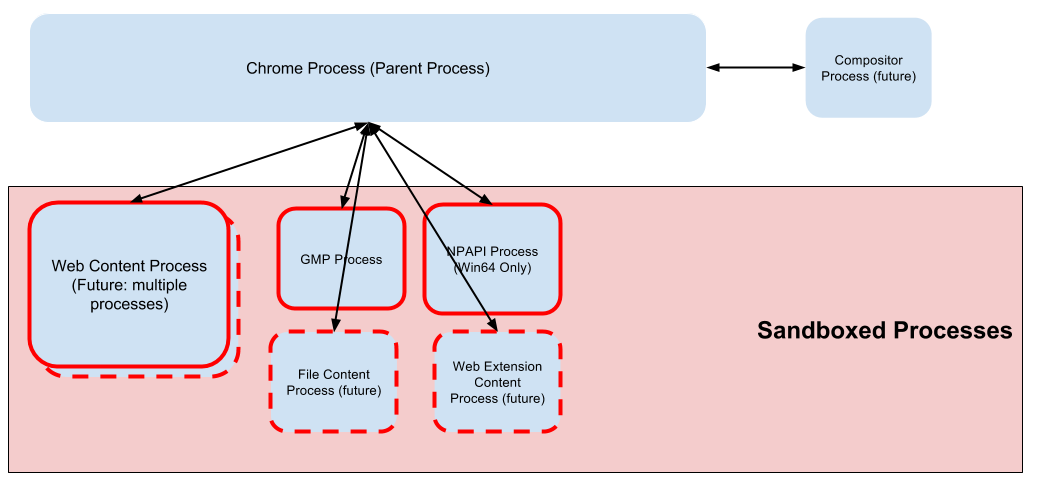

The basis of the Firefox security sandbox model is that web content is loaded in a "Content process", separate from the trusted Firefox code which runs in the "Chrome process" (also called the "parent" process). Content processes execute in a sandbox which limits their system privileges, so that if a malicious web page manages to exploit a vulnerability and execute arbitrary code, it will be unable to compromise the underlying OS.

The sandboxed child processes (red borders) include the content processes (web, file & extension) and several other child processes:

- Web Content process: parses and executes untrusted web content.

- File Content process: responsible for loading file:// URIs

- Web Extension Content Process: future process planned for loading web extension content. See [/WebExtensions/Implementing_APIs_out-of-process] for further detail.

- GMP process: highly restrictive sandbox running GMP plugins (eg. Widevine, Primetime, OpenH264)

- NPAPI Process: Flash sandbox on Win64

Further detail on the process model can be found here: Security/Sandbox/Process_model.

The strength of the Sandbox as a security feature depends primarily on the restrictions of the Web Content process. Ideally web content should run completely sandboxed from the underlying operating system and should not need to:

- read or modify local files

- spawn new processes

- access privileged OS functions and resources

- access restricted network resources.

The reality is more complicated: Firefox requires many of these privileges to run and was not originally designed to be sandboxed, so work is required to make it compatible. Initial support for sandboxing is available on all release versions of Firefox. The next step is to harden the sandbox by tightening restrictions for content processes, moving or remoting sandbox-incompatible code to the parent. Detailed status of the sandbox implementation is tracked here: Security/Sandbox

Browser Hardening

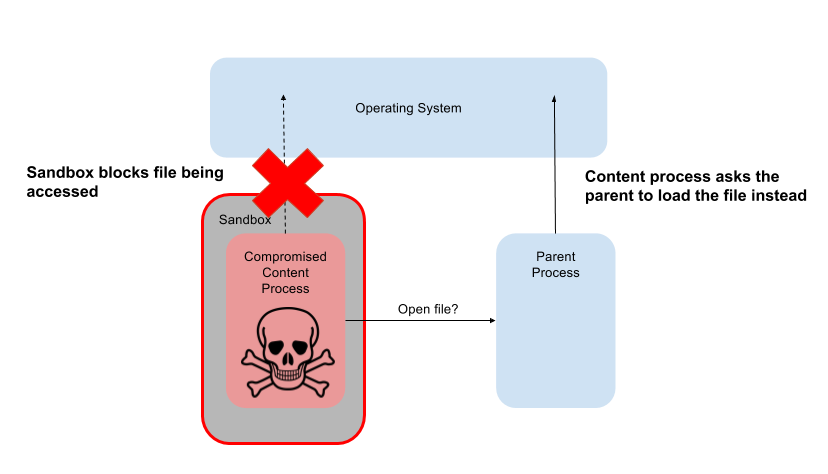

Once a restrictive sandbox is in place, attackers who manage to compromise Firefox will be prevented from accessing system resources directly. However in order to be considered a strong security boundary, Firefox needs to be hardened against privilege escalation.

The goal of hardening is to make the browser resilient even when a content process is compromised. Having a strong sandbox in place is of no use if a weak trust model or an IPC implementation flaw leads to trivial privilege escalation.

To harden the browser against this sort of sandbox bypass, several efforts are underway:

- Security Model Review: review of the design of Firefox components to ensure they enforce a strong security model

- IPC Hardening: auditing and fuzz testing IPC mechanisms used to communicate between content and chrome processes
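As a rough illustration of what fuzz testing an IPC mechanism means, the sketch below feeds random byte strings to a parent-side message parser and checks that malformed input is always rejected cleanly. The wire format (1-byte type, 2-byte length, payload), `parse_message` and `fuzz` are hypothetical and are not taken from IPDL or any Firefox code.

```python
import random
import struct


def parse_message(data):
    """Hypothetical parent-side parser: 1-byte type, 2-byte length, payload."""
    if len(data) < 3:
        raise ValueError("truncated header")
    msg_type, length = struct.unpack_from("<BH", data)
    if msg_type not in (1, 2):
        raise ValueError("unknown message type")
    payload = data[3:]
    # Never trust the length field sent by the (possibly compromised) child.
    if len(payload) != length:
        raise ValueError("length field does not match payload")
    return msg_type, payload


def fuzz(parser, iterations=1000, seed=0):
    """Throw random blobs at the parser; only ValueError is an acceptable failure."""
    rng = random.Random(seed)
    for _ in range(iterations):
        size = rng.randrange(0, 16)
        blob = bytes(rng.randrange(256) for _ in range(size))
        try:
            parser(blob)
        except ValueError:
            pass  # clean rejection of malformed input is the expected outcome
    return iterations
```

Any exception other than `ValueError` escaping from `fuzz(parse_message)` would point at a parser bug worth auditing.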

Security Model Review

This section reviews the sandbox security model with respect to the various parts of the browser. Each review aims to spell out:

- Threats: specific threats associated with the browser component

- Objectives: the scope of the security goals which the sandbox aims to enforce (and what is excluded)

- Controls: the current restrictions that are enforced by the sandbox

- Open Issues: open questions about components, or known issues with sandboxing in this area

In the sections below, “content process” refers to the “Web Content Process” unless otherwise specified.

File access

Threats

A compromised child process reads or modifies user data or system files. Arbitrary file write is equivalent to arbitrary code execution.

Objectives

Protect user data and sensitive configuration files in the event of a compromised content process. By default the sandbox should block access to files in the content process, and any necessary exceptions (e.g. reading program files, writing temp files, etc.) must be mediated by the Chrome process.

Controls

On all platforms, access to the filesystem is mediated by a file broker running in the Chrome process.

For Web Content Processes there are limited exceptions to these restrictions:

- Write access to temporary files in whitelisted directories (required for WebRTC and other third-party libraries running in the child)

- Indirect read access through the file broker is provided for fonts, configuration files, and devices such as urandom

- Add-ons can access their own directories through remote access (remote API access should enforce the first line of defense, the broker should enforce broad rules, e.g. /home/extensions & Firefox dir for JSMs.)
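The broker rules above amount to a default-deny allowlist of directories with per-directory access rights. The following is a minimal sketch of that idea only; the `FileBrokerPolicy` class, the `Access` flags and the example directories are hypothetical and do not reflect the real broker implementation on any platform.

```python
import posixpath
from enum import Flag, auto


class Access(Flag):
    READ = auto()
    WRITE = auto()


class FileBrokerPolicy:
    """Hypothetical allowlist consulted by a broker in the Chrome process."""

    def __init__(self):
        self._rules = []  # list of (directory, allowed Access)

    def allow(self, directory, access):
        self._rules.append((posixpath.normpath(directory), access))

    def is_allowed(self, path, requested):
        # Normalise first so "dir/../../etc/passwd" cannot slip past a rule.
        # A real broker would also have to deal with symlinks.
        path = posixpath.normpath(path)
        for directory, allowed in self._rules:
            inside = path == directory or path.startswith(directory + "/")
            if inside and (requested & allowed) == requested:
                return True
        return False  # default deny


# Example directories are assumptions chosen for illustration.
policy = FileBrokerPolicy()
policy.allow("/tmp/firefox-content", Access.READ | Access.WRITE)
policy.allow("/usr/share/fonts", Access.READ)
```

With this policy a request to write `/usr/share/fonts/x.ttf` or to read `/home/user/.ssh/id_rsa` is refused, while writing a temp file under `/tmp/firefox-content` is permitted.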

The File Content Process is used to load file:// URIs and as such it needs:

- Unrestricted read access to the local file system in order to load file:// URIs

- Remote content must never be loaded as the top level (remote content must load in the web content process)

- Documents loaded from file:// URIs can load remote content though (see issue 1 below).

- Otherwise the same exceptions as a web content process
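The loading rules for the two content process types can be summarised as a small decision function. This is only a sketch under simplifying assumptions: `may_load` and the process labels `"file"` and `"web"` are hypothetical, and only the file, http and https schemes are considered.

```python
from urllib.parse import urlsplit

REMOTE_SCHEMES = ("http", "https")  # assumption: other schemes ignored here


def may_load(process_type, uri, top_level):
    """Return True if the given process type may load this URI."""
    scheme = urlsplit(uri).scheme.lower()
    if process_type == "file":
        if top_level:
            # Remote content must never be the top-level document here.
            return scheme == "file"
        # Subresources of a local document may be remote (see the open issue).
        return scheme == "file" or scheme in REMOTE_SCHEMES
    if process_type == "web":
        # The web content process gets no file:// loads at all.
        return scheme in REMOTE_SCHEMES
    return False
```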

The GMP process has no access to the file system at all.

Open Issues

- The file content process is currently allowed to access remote content, and this is likely to remain, as locally hosted webpages may legitimately request remote resources. A remote attacker able to coerce the browser into using the File Content process to load a nested resource, such as an iframe, would be able to bypass the file read restrictions of the Web Content Sandbox. We need to ensure that this is not possible.

- What is the file access policy for the WebExtension process? Can we increase restrictions of the content process sandbox post-deprecation of old-style addons?

Network connectivity

Network architecture is already such that low-level connectivity and security decisions are implemented in the parent process. For detail, see [Necko:_Electrolysis_design_and_subprojects]. This gives us a strong basis to enforce security rules on network traffic.

Threats

Attack local system services or connect to resources behind firewalls.

De-anonymise VPN users?

Objectives

- Direct network access is prohibited in the content process

- All network access is provided through the chrome process

- Global network restrictions (e.g. no connections to low numbered TCP ports) should be enforced in the Chrome process

Controls

- Necko is remoted such that all network access is performed through the Chrome process
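A global rule such as the low-port restriction mentioned in the objectives would live in the Chrome process, where a compromised child cannot skip it. The sketch below assumes a limit of 1024 and an exception list for the standard web ports; both values and the function name `parent_allows_connection` are illustrative assumptions, not Necko behaviour.

```python
WEB_PORTS = frozenset({80, 443})  # assumption: standard web ports stay reachable


def parent_allows_connection(port, low_port_limit=1024):
    """Hypothetical parent-side check applied to every child connection request."""
    # Reject anything that is not a plausible TCP port before applying policy.
    if isinstance(port, bool) or not isinstance(port, int):
        return False
    if not 0 < port < 65536:
        return False
    if port in WEB_PORTS:
        return True
    return port >= low_port_limit
```

Because the check runs in the parent, the child only ever sees the result; it never gets a raw socket on which it could ignore the rule.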

Open Questions

- Could and should access to low ports be restricted? Do we do this already?

- Does all network access go through the parent (via necko)? If not, which connections are directly initiated from the child?

- Where do origin checks occur? For example, what happens if a web resource redirects to a file:// resource?

- Where do network security checks happen in general?

- Can a compromised content process make network connections that subvert Firefox network settings (e.g. can the sandbox prevent de-anonymization attacks)?

Graphics

In multi-process Firefox, drawing involves the content process parsing content, constructing and then drawing to a layer tree. When complete, the content process shares the layer tree with the parent via IPC. The layer tree references backing buffers which are shared with the parent either through shared memory or GPU memory.

The main consequence of this architecture is that the content process talks to the GPU directly, and subsequently needs access to low-level APIs to facilitate this communication. While there are plans to move compositing from the parent process to a separate GPU process (roughly FF53), the focus of this project is to improve stability and is not expected to meaningfully reduce the privileges required in the child process.

Out-of-process Compositor (aka GPU Process)

- Plan to move compositor from parent to separate process (roughly FF53)

- Largely would not affect sandboxing efforts, as child still requires access to the GPU. One benefit may be that on Windows, window handles (HWND) would not be required in the child process

- GPU process is not planned to be sandboxed

- Child processes allocate shared memory for texture data, write to it, and then pass it to the compositor via IPC. In the new compositor model, the compositor doesn’t need to manage shared memory, but it would be able to keep track of allocations per process and kill child processes using too much memory.

- GPU process will benefit linux greatly because it will move the X11 connection to the GPU process (instead of the child)
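The per-process allocation tracking mentioned above could look roughly like the following. `ShmemAccountant` and its byte limit are hypothetical; the sketch only shows the bookkeeping, with the decision to kill a child left to the caller.

```python
class ShmemAccountant:
    """Hypothetical compositor-side tally of shared memory per child process."""

    def __init__(self, limit_bytes):
        self.limit_bytes = limit_bytes
        self.usage = {}  # pid -> bytes currently allocated

    def record_alloc(self, pid, size):
        """Record an allocation; return False if the child is now over its limit."""
        total = self.usage.get(pid, 0) + size
        self.usage[pid] = total
        return total <= self.limit_bytes

    def record_free(self, pid, size):
        # Clamp at zero so a bogus "free" message cannot create negative usage.
        self.usage[pid] = max(0, self.usage.get(pid, 0) - size)
```

A `False` return from `record_alloc` is the point at which the compositor could choose to terminate the offending child.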

Threats

- Historically, graphics system calls have been a common privilege escalation path (e.g. GDI on Windows)

- Access to X on Linux is a significant attack surface and a direct security threat

Objectives

- Reduce attack surface from content process

- Allow stronger windows sandbox levels by reducing necessary syscalls in content process

Controls

- tbd

Open Questions

- Communication with GPU limits the restrictions that can be placed on child process

- Windows: access to GPU prevents the use of the Untrusted integrity level sandbox

- OSX: To be investigated

- Linux: To be investigated

- IPC code (IPDL & shared memory) represents an attack surface which needs to be hardened to ensure resilience to privilege escalation attacks

- No plans yet to sandbox Compositor process

- Will Quantum render afford opportunities to limit attack surface (e.g. can we ban windows GDI usage in content process)?

DOM

Threats

- Privilege escalation through implementation bugs

- Broken security model allows sandbox bypass

Objectives

DOM APIs have chrome and content components. We need to ensure that the security model for DOM APIs respects the sandbox (i.e. all security decisions, permission checks, etc. must be performed in the parent).

Controls

- tbd

Open Questions

- Audit of DOM API IPC underway

Script Execution

Threats

- JS engine presents a large attack surface

Objectives

TBD

Controls

TBD

Open Questions

- What JS code is executed in the Chrome process?

- Parent

- Firefox UI

- Chrome Scripts

- DOM APIs

- Add-on parent parts

- Child

- Frame scripts

- Frame scripts are associated with a browser tab and not with a page.

- JS-implemented web APIs

- Web content

- Frame scripts

- Parts of service workers are currently executed in the parent.

Web Content (HTML, CSS, XML, SVG)

Objective

All parsing and execution of untrusted content to be performed in the child

Controls

See bug https://bugzilla.mozilla.org/show_bug.cgi?id=1305331

Open Questions

Printing & Fonts

Printing is remoted to the parent to remove the need for the content process to access OS printing functions directly (historically a dangerous attack surface). For this to occur, the content process serializes the document to the parent, which interprets it.

- The Moz2D recording API records draw instructions into a stream

- The stream is shipped up in shared memory, a page at a time

- It is played back in the parent
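The record-and-play-back idea can be illustrated with a toy version in which the child serializes a page of draw commands and the parent validates each one before replaying it. This is a sketch only: the JSON encoding, the operation names in `ALLOWED_OPS`, and the functions `record_page` and `play_back` are assumptions for illustration and do not reflect the actual Moz2D recording format.

```python
import json

# Hypothetical operation table: name -> expected number of arguments.
ALLOWED_OPS = {"fill_rect": 4, "draw_text": 3}


def record_page(commands):
    """Child side: serialize one page of draw commands into a byte stream."""
    return json.dumps(commands).encode("utf-8")


def play_back(stream):
    """Parent side: validate every command, then return what would be drawn."""
    commands = json.loads(stream.decode("utf-8"))
    if not isinstance(commands, list):
        raise ValueError("stream must contain a list of commands")
    executed = []
    for cmd in commands:
        if not isinstance(cmd, list) or not cmd:
            raise ValueError("malformed draw command")
        op, *args = cmd
        # The stream comes from an untrusted child: reject unknown operations.
        if not isinstance(op, str) or ALLOWED_OPS.get(op) != len(args):
            raise ValueError(f"rejected draw command: {op!r}")
        executed.append((op, tuple(args)))
    return executed
```

The important property is that the parent interprets a narrow, validated instruction set rather than trusting whatever the child sends.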

Objective

- Strategy is to trigger printing from the parent, not the child.

- How is data passed from child to parent? (shared memory, files? )

- https://bugzilla.mozilla.org/show_bug.cgi?id=1228022

- http://bgz.la/1205598 (Print preview doesn't honor Private Browsing Mode and writes to /tmp)

TLS Stack

Threats

- Attacking NSS code

- Putting fake certs/creds etc in the trust store

- Direct file access to steal keys e.g. client certs?

Objectives

- Content process should not be able to modify certificate database (add certs, exceptions etc)

Controls

- nsISiteSecurityService (security/manager/ssl/nsISiteSecurityService.idl) deals with HPKP/HSTS

- The underlying DataStorage checks whether it is running in the parent process before reading or writing; if it is in the child, it asks the parent to perform the read or write. For this reason we should be able to exclude the HPKP/HSTS database from direct read/write access in the child. The child needs this path in order to alter entries based on headers.

- Access to the certificate database?

- Add/remove a cert

- nsICertOverrideService (security/manager/ssl/nsICertOverrideService.idl) allows certificate overrides to be added to the database. Cert overrides should require user input, so we should make sure no child can call this.

- No checking appears to be done on where the code is running

- The override file cert_override.txt is in the user’s profile directory, and should be inaccessible to the child for reads.

- Add/remove a cert
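The DataStorage behaviour described above, where the child forwards reads and writes to the parent instead of touching the backing file, can be sketched as follows. The `DataStore` class is hypothetical, and a direct method call stands in for what would really be an IPC message.

```python
class DataStore:
    """Hypothetical store: only the parent instance holds the actual entries."""

    def __init__(self, parent=None):
        self._parent = parent  # None means this instance lives in the parent
        self._entries = {}

    @property
    def in_parent(self):
        return self._parent is None

    def put(self, key, value):
        if self.in_parent:
            self._entries[key] = value
        else:
            # Stand-in for an IPC request; the child never writes the file.
            self._parent.put(key, value)

    def get(self, key):
        if self.in_parent:
            return self._entries.get(key)
        return self._parent.get(key)


parent_store = DataStore()
child_store = DataStore(parent=parent_store)
child_store.put("example.com", "hsts")
```

After the call above, the entry exists only in `parent_store`; the child instance holds no data of its own, which is what lets the sandbox deny the child direct file access.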

Note: the sandbox cannot restrict access to web crypto (the child can load any origin, so it can load any origin’s data)

Open Questions

- Is PSM remoted securely? How are certificate exceptions handled?

- Check where the following are handled:

- HSTS

- DoS

- The child can alter entries in the HSTS cache. The child must do so in order to process headers. See above. The child probably doesn’t have to do this, but likely can.

- Key Pinning

- Pin a malicious certificate to bypass protection

- As above

- <keygen> happens in the child, going away hopefully?

- Client certificate UI?

DevTools

Open Questions

- What is the process model?

- What is in parent vs child?

- What IPC mechanisms are used?

- Sockets etc

Addons

- WebExtensions will load in separate process

- Legacy & SDK Add-ons TBD

WebRTC

Threats

- Unauthorized camera and microphone access

- Eavesdropping on existing connections

- Raw socket connections

Objectives

Camera/Microphone

- Requests for camera and microphone mediated by the Chrome process

- Chrome code controls the prompt, which displays the origin of the requester to the user

- The content process can lie about its origin, but the user still has to approve (i.e. the content process can only use the camera without user permission if it can guess an origin that has been pre-granted access)

- Camera/Microphone indicators must always be shown (the content process shouldn't be able to hide them)
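The camera and microphone objectives can be sketched as a small broker in the Chrome process. The class `CameraPermissionBroker`, its methods and the `prompt_user` callback are hypothetical names used for illustration; the sketch shows why a lying child still needs either user approval or a correctly guessed pre-granted origin.

```python
class CameraPermissionBroker:
    """Hypothetical parent-side mediator for camera/microphone requests."""

    def __init__(self, prompt_user):
        # prompt_user(origin) -> bool runs in the parent and shows the origin.
        self._prompt_user = prompt_user
        self._granted = set()  # origins the user has persistently allowed
        self.indicator_on = False  # owned by the parent, not the child

    def grant_persistently(self, origin):
        self._granted.add(origin)

    def request(self, claimed_origin):
        # The origin is only a claim from the child, so approval always rests
        # on a stored grant or on the user answering a parent-drawn prompt.
        if claimed_origin in self._granted or self._prompt_user(claimed_origin):
            self.indicator_on = True  # the child has no way to clear this
            return True
        return False
```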

Network Connectivity

Controls

Camera/Microphone

https://bugzilla.mozilla.org/show_bug.cgi?id=1177242

Network Connectivity

Open Questions

- What other threats exist with WebRTC and the sandbox

- Socket Connectivity

Media Playback

Threats

- Mainly just attack surface for privilege escalation?

Objectives

- Support media playback while minimizing attack surface

- Remote audio to the parent to minimise syscalls necessary in the child (allowing tighter sandbox restrictions)

Controls

Open Questions

- Linux?

- Libcubeb - Audio interface: https://dxr.mozilla.org/mozilla-central/source/media/libcubeb/src/cubeb-internal.h#14

- Loaded in the child

- Strategy: remote the audio to the parent

- Mac

- CoreAudio

- Microphone

- Remove access from the child

- Access only via remote functions

Firefox Preferences

Threats

- Ability to change arbitrary preferences is equivalent to code execution

Objectives

- Restrict access to preferences in the content process to an absolute minimum

Controls

- Whitelist of prefs which child can write to

- Can only write simple prefs

- Actual prefs live in parent
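These controls can be sketched as a parent-side setter that accepts writes from the child only for allowlisted names and simple value types. The pref name in `CHILD_WRITABLE_PREFS`, the reading of "simple" as bool, int or str, and the `ParentPrefs` class are all assumptions made for illustration.

```python
# Hypothetical allowlist; the pref name is invented for this example.
CHILD_WRITABLE_PREFS = frozenset({"example.zoom.level"})
SIMPLE_TYPES = (bool, int, str)  # assumption about what "simple" means


class ParentPrefs:
    """Hypothetical pref store: the real values live only in the parent."""

    def __init__(self):
        self._prefs = {}

    def set_from_child(self, name, value):
        """Apply a write requested by a content process, or refuse it."""
        if name not in CHILD_WRITABLE_PREFS:
            return False
        if not isinstance(value, SIMPLE_TYPES):
            return False
        self._prefs[name] = value
        return True

    def get(self, name):
        return self._prefs.get(name)
```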

Open Questions

Printing

Threats

- System calls involved in printing are a historically dangerous attack surface for kernel privilege escalation

Objectives

- Remote printing to the parent to minimize the content process's direct access to OS printing functions

Controls

Printing of pages is remoted to the parent: the content process serializes the document to the parent, which interprets it.

- Strategy is to trigger printing from the parent, not the child.

- How is data passed from child to parent? (shared memory, files? )

Other things: Places, History & Bookmarks etc?

Threats

- Implementation of the remoting of this functionality presents privilege escalation attack surface

- Read bookmarks in content process

Objectives

- Content process should not be able to read bookmarks?

- We can’t restrict access to history since this is needed to display web content?

- Ensure the security model for these components is safe

Controls

tbd

Open Questions

- What other things fall into the same category (is there a better name for this?)

IPC Hardening

IPDL

See bug https://bugzilla.mozilla.org/show_bug.cgi?id=1041862

MessageManager

https://bugzilla.mozilla.org/show_bug.cgi?id=1040184

Shmem: https://bugzilla.mozilla.org/show_bug.cgi?id=1232119

Files, others?