MDN/Development/CompatibilityTables/Infrastructure

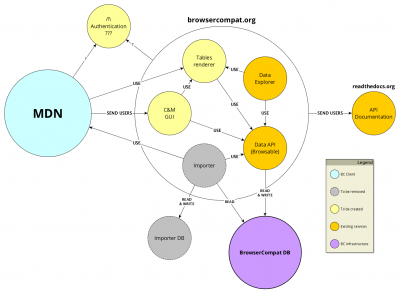

Here's a schema for the browsercompat.org services:

The BrowserCompat (bc) components are:

- MDN - primary consumer of compatibility data

- Data API - primary data source

- BrowserCompat DB - stores current compatibility data

- Data Explorer - to browse compatibility data

- Importer - Scrapes data from MDN and injects into Data API

- Importer DB - stores extra import data

- C&M GUI - Contribution and moderation interface

- Table renderer - for MDN and other services

- Authentication - end-user authentication services

- API documentation - describes data schema, other parts

Development

A local development copy of MDN is not required for BC development. MDN is backed by the mozilla/kuma project. Development is done using a local VM that provides many of the production services. See the installation documentation for details.

The Data API is in the mdn/browsercompat project. It is a Django project. Developers will need to install a Python development environment, and optionally will need PostgreSQL, Memcached, and Redis installed. See the Installation documentation for details.

The BrowserCompat DB can be a PostgreSQL database (recommended) or a SQLite database. It requires the Data API to set up the schema and populate the data. Compatibility data is periodically exported to the mdn/browsercompat-data project, and can be used to populate a developer's database.

The Data Explorer is part of the mdn/browsercompat project code base, and requires a local install of the Data API for development.

The Importer is part of the mdn/browsercompat project code base, and requires a local install of the Data API for development.

The Importer DB is currently part of the BrowserCompat DB. Local development may require a lengthy scraping of the MDN site.

The C&M interface is backed by the mdn/browsercompat-cm project. It is an Ember.js application built with ember-cli. Developers will need to install the dependencies, including Node.js, Bower, ember-cli, and PhantomJS. See the README.md for details.

The Table Renderer is still in planning, and the project has not started. A prototype renderer is implemented in KumaScript, in the EmbedCompatTable macro.

Local accounts are used for Authentication. For development, it is easiest to create local Django accounts backed by username and password. ./manage.py createsuperuser can be used to create a superuser account with access to the Django admin.

The API documentation is hosted at browsercompat.readthedocs.org, and sourced in the mdn/browsercompat project. Documentation developers will need to install the Data API to build documents locally, but won't need to populate it with compatibility data.

Current Testing and Deployment Process

MDN is a mature product, with many layers of testing:

- Developers can run unit tests against their own code (./manage.py test)

- Pull Requests are tested in TravisCI

- Each pull request is reviewed by another developer using GitHub's PR interface.

- When accepted, PRs are merged to the master branch.

- The master branch is periodically deployed by a developer to staging at https://developer.allizom.org. Deployment is controlled by a script in the repository, and usually does not include manual steps.

- Developers and staff perform targeted manual testing of new features on staging.

- Intern is used to run client-side tests against the staging server.

- When testing is complete, the code is deployed to production. Developers monitor the site for 1 hour after production pushes, to be aware of any performance degradation.

A single codebase is used for the Data API, Data Explorer, Importer, and API Documentation. This project includes testing:

- Developers can run make test to run Django unit tests, and make qa to run code linters and coverage tests as well.

- Developers can run make test-integration to run a local API instance, load it with sample data, and make API requests.

- Pull requests are tested in TravisCI. This runs unit, QA, and integration tests.

- Some pull requests are code reviewed by other developers. Not all code is code reviewed, because 1) the API does not include personally identifiable information, 2) the service is not in production use outside of some beta testers, and 3) no other developers are currently familiar with the code.

- When ready, PRs are merged to the master branch

- When the master branch is updated, the documentation is rebuilt and deployed to https://browsercompat.readthedocs.org

- The master branch is tested in TravisCI again.

- When testing is successful, the code is automatically deployed to https://browsercompat.herokuapp.com

Heroku hosts the BrowserCompat DB and the Importer DB in a single RDS PostgreSQL database. When the data schema changes, database migrations are run using the command-line heroku client (heroku run --app browsercompat ./manage.py migrate).

The C&M GUI is in early development. Developers can run tests with ember test. No tests are automatically run on push.

Authentication is done against https://browsercompat.herokuapp.com, and is not specifically tested. Firefox Accounts was integrated, but has since broken.

The KumaScript-based table renderer is manually tested, and requires manually refreshing MDN pages when data changes. Stephanie Hobson has a test page linking to interesting pages.

Current Infrastructure

MDN has a mature infrastructure, maintained in a data center. It includes load balancers, databases, search servers, admin nodes, and worker nodes. It also has a parallel staging infrastructure. The interface between MDN and the BrowserCompat services is over HTTP, so the MDN infrastructure does not matter to the BrowserCompat services, or vice-versa.

Many components run in Heroku at https://browsercompat.herokuapp.com:

- Data API: https://browsercompat.herokuapp.com/api/v2

- Data Explorer: https://browsercompat.herokuapp.com/browse/

- Importer: https://browsercompat.herokuapp.com/importer/

- Authentication: https://browsercompat.herokuapp.com/accounts/login/

The websites run on a Hobby Dyno, and async tasks run on a second Hobby Dyno. Add-ons are used for Memcache and Redis services, and a PostgreSQL add-on hosts both the BrowserCompat DB and the Importer DB.

The C&M GUI is currently not deployed.

The Table Renderer doesn't exist yet.

API Documentation is hosted at http://browsercompat.readthedocs.org/en/latest/

On The Way To Production

Several changes will be made in the months leading up to production.

The API will switch to UUIDs. This will support data import and export, and reduce or eliminate the need for production database dumps for development or staging.

The C&M GUI will continue to be developed, and make the current Data Explorer obsolete.

A caching load balancer will be added in front of Data API web workers, along with the code changes to support it. This will allow the API to efficiently serve many similar requests.

A CDN will be used to host assets, possibly including the entire C&M GUI.

MDN will add an OAuth2 provider interface, to allow BrowserCompat users to use their MDN account on BrowserCompat sites. This may become the only way to authenticate with BrowserCompat.

Load testing will be developed, to test the infrastructure under expected production loads.

A deployment tool like Jenkins will be configured to run tests and deploy services to production.

MDN contribution data will be cleaned up and imported into the API. The number of MDN pages displaying API-backed data will increase, until all pages are backed by the API. The API backed tables will be turned on for all users, and the legacy wiki tables removed.

Production Services

Note: this is the proposed infrastructure, and hasn't been reviewed by operations or QA.

MDN is planning to move to an AWS-based infrastructure in Q1/Q2 2016.

BrowserCompat will be deployed as a set of small services hosted on subdomains of browsercompat.org:

- https://browsercompat.org will redirect to https://www.browsercompat.org

- https://www.browsercompat.org - the Data Explorer and C&M GUI caching load balancer (CloudFront / ELB)

- https://api.browsercompat.org - the Data API caching load balancer (CloudFront+ELB or S3)

- https://importer.browsercompat.org - the Importer server, retired after import is complete. (Heroku/Deis)

- https://integrate.browsercompat.org - the Table Renderer server / load balancer (CloudFront+ELB)

- https://www.cdn.browsercompat.org - assets for the C&M GUI interface (S3)

- https://api.cdn.browsercompat.org - assets for the Data API server (S3)

- https://admin.browsercompat.org - deployment coordinator (EC2)

- https://qa.browsercompat.org - Jenkins server for testing and deployment (EC2)

Backend services will not be publicly available, except possibly over a VPN or IP limited to admins:

- 2x PostgreSQL databases for BrowserCompat DB and Importer DB (primary/replica) (RDS/EC2)

- 2x web worker for the C&M GUI (if needed) (Heroku/Deis)

- 3x web worker for the Data API (Heroku/Deis)

- 2x web worker for the Table Renderer (Heroku/Deis)

- 2x task worker for the Data API (Heroku/Deis)

- 2x caching server (Redis, Heroku plugin/ElastiCache)

- 1x broker server (Redis, Heroku plugin/ElastiCache)

A parallel infrastructure will be used for staging:

- https://www.stage.browsercompat.org - the Data Explorer and C&M GUI server / load balancer

- https://api.stage.browsercompat.org - the Data API server / load balancer

- https://importer.stage.browsercompat.org - the Importer server, retired after import is complete.

- https://integrate.stage.browsercompat.org - the Table Renderer server / load balancer

- https://www.stage.cdn.browsercompat.org - assets for the C&M GUI interface

- https://api.stage.cdn.browsercompat.org - assets for the Data API server

Staging will have parallel backend servers, but without the same redundancy:

- 1x PostgreSQL database for BrowserCompat DB and Importer DB

- 1x web worker for the C&M GUI (if needed) (Heroku/Deis)

- 1x web worker for the Data API (Heroku/Deis)

- 1x web worker for the Table Renderer (Heroku/Deis)

- 1x task worker for the Data API (Heroku/Deis)

- 1x caching + broker server (Redis, Heroku plugin/ElastiCache)

When needed, some or all of a load testing infrastructure will be deployed:

- https://www.load.browsercompat.org - the Data Explorer and C&M GUI server / load balancer

- https://api.load.browsercompat.org - the Data API server / load balancer

- https://importer.load.browsercompat.org - the Importer server, retired after import is complete.

- https://integrate.load.browsercompat.org - the Table Renderer server / load balancer

- https://www.load.cdn.browsercompat.org - assets for the C&M GUI interface

- https://api.load.cdn.browsercompat.org - assets for the Data API server

as well as backends appropriate for the load test, and as many load testing servers as needed.
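The production, staging, and load testing host names above follow one naming pattern, which can be sketched as a small function. This is only an illustration of the pattern; the function name and the SERVICES and CDN_SERVICES constants are hypothetical, and the production-only admin and qa hosts are left out.

```python
# Public services that exist in every environment (from the lists above).
SERVICES = ("www", "api", "importer", "integrate")
# Services that also have an asset host on the CDN subdomain.
CDN_SERVICES = ("www", "api")


def hostnames(environment=None, domain="browsercompat.org"):
    """List host names for production (None) or a named environment."""
    env = f".{environment}" if environment else ""
    hosts = [f"{service}{env}.{domain}" for service in SERVICES]
    hosts += [f"{service}{env}.cdn.{domain}" for service in CDN_SERVICES]
    return hosts


for name in hostnames("stage"):
    print("https://" + name)
```

Calling hostnames() with no argument gives the production names, and hostnames("load") gives the load testing names.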

Sample Request Load

MDN had 13 million page views in December 2016. The BrowserCompat infrastructure needs to be able to handle a similar load. 1 million daily requests is a good launch target. Here's a walkthrough of a "cold cache" request to BrowserCompat.
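As a rough sanity check on these numbers, the monthly figure can be converted to average daily and per-second rates. This is a back-of-the-envelope sketch that assumes traffic is spread evenly over the month, which is our simplification; real traffic has peaks.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_rates(requests, days):
    """Return (requests per day, requests per second), assuming even traffic."""
    per_day = requests / days
    return per_day, per_day / SECONDS_PER_DAY


# 13 million page views over the 31 days of December
mdn_per_day, mdn_per_second = average_rates(13_000_000, 31)

# The launch target of 1 million requests per day
_, target_per_second = average_rates(1_000_000, 1)

print(f"MDN average: {mdn_per_day:,.0f}/day, {mdn_per_second:.1f}/second")
print(f"Launch target: {target_per_second:.1f}/second")
```

Under that assumption, MDN averages roughly 420,000 page views per day, so the launch target is a bit more than twice the average load and leaves some headroom for peaks.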

A user loads https://developer.mozilla.org/en-US/docs/Web/CSS/display. This contains specification and compatibility data that is sourced from the API, and was current at the time it was saved. In-page JavaScript requests up-to-date data from the Table Renderer service.

The request to the Table Renderer includes a locale ('en-US'), the target feature (a UUID for "Web/CSS/Display"), a cache header (ETag), and the format type (for display on MDN). In the "warm cache" case, the load balancer knows the content is up to date, and returns a 304 Not Modified response. If there is a cache miss, a Table Renderer web worker is called.
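The warm and cold cache paths described above can be sketched with a toy model. This is not how a real load balancer or CloudFront is configured; the CachingBalancer class, the fake_worker function, the placeholder feature ID, and the fixed "v1" ETag are all hypothetical, and expiry and invalidation are ignored.

```python
class CachingBalancer:
    """Toy model of a caching load balancer; not a real proxy."""

    def __init__(self, render):
        self.render = render      # hypothetical worker: key -> (etag, body)
        self.cache = {}           # key -> (etag, body)
        self.worker_calls = 0

    def get(self, key, if_none_match=None):
        """Return (status, etag, body) for one request."""
        if key not in self.cache:
            # Cache miss: a Table Renderer web worker is called.
            self.worker_calls += 1
            self.cache[key] = self.render(key)
        etag, body = self.cache[key]
        if if_none_match == etag:
            # The client's copy is current, so no body is sent.
            return 304, etag, None
        return 200, etag, body


def fake_worker(key):
    """Stand-in for a web worker; returns a fixed ETag and a tiny body."""
    locale, feature_id, fmt = key
    return '"v1"', f"<table lang='{locale}'>{feature_id}</table>"


balancer = CachingBalancer(fake_worker)
key = ("en-US", "feature-uuid-placeholder", "mdn")
first = balancer.get(key)                            # cold cache
second = balancer.get(key, if_none_match=first[1])   # warm cache
print(first[0], second[0], balancer.worker_calls)    # prints: 200 304 1
```

The cache key combines the locale, feature, and format, because each combination produces a different response.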

The Table Renderer makes a similar call to the Data API, asking for the table data for Web/CSS/Display by UUID. Again, there is a chance the caching load balancer has this data, and can serve it without communicating with the backend Data API workers.

The compatibility and specification data for "Web/CSS/Display" is spread over 100+ resources of 8 types (Browsers, Versions, Features, etc.). The Data API maintains an instance cache of these resources to avoid requesting this data from the database each time. If the instance cache is warm, then there are no database requests to load this data. For the cold cache case, each resource is loaded from the database and stored in the instance cache.
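A minimal sketch of this read-through behavior follows. It is an illustration only, not the Data API's actual cache code; the InstanceCache class and the fake_database_load function are hypothetical, and a plain dictionary stands in for the cache backend.

```python
class InstanceCache:
    """Read-through cache keyed by (resource type, resource ID)."""

    def __init__(self, load_from_db):
        self.load_from_db = load_from_db  # callable: (type, id) -> dict
        self.store = {}
        self.db_reads = 0

    def get(self, resource_type, resource_id):
        key = (resource_type, resource_id)
        if key not in self.store:
            # Cold: load from the database and remember the result.
            self.db_reads += 1
            self.store[key] = self.load_from_db(resource_type, resource_id)
        return self.store[key]

    def get_many(self, keys):
        return [self.get(rtype, rid) for rtype, rid in keys]


def fake_database_load(resource_type, resource_id):
    """Stand-in for a database query."""
    return {"type": resource_type, "id": resource_id}


cache = InstanceCache(fake_database_load)
needed = [("browsers", 1), ("versions", 7), ("features", 42)]

cache.get_many(needed)                      # cold: one read per resource
cold_reads = cache.db_reads
cache.get_many(needed)                      # warm: no further reads
warm_reads = cache.db_reads - cold_reads
print(cold_reads, warm_reads)               # prints: 3 0
```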

The instances are combined into a single JSON response, which is hashed to produce an ETag. This response has all the known localizations for the data. This is cached by the load balancer and returned to the Table Renderer.
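One way to derive an ETag from the combined response is to hash a deterministic serialization of it, as sketched below. The choice of SHA-256, the build_response name, and the sample data shape are assumptions made for illustration; the source does not say which hash or layout the API uses.

```python
import hashlib
import json


def build_response(resources):
    """Serialize resources to JSON and derive a quoted ETag from the body."""
    # Sorted keys and fixed separators make equal data produce equal bytes,
    # so the ETag only changes when the data changes.
    body = json.dumps(resources, sort_keys=True, separators=(",", ":"))
    digest = hashlib.sha256(body.encode("utf-8")).hexdigest()
    return body, f'"{digest}"'


sample = {
    "features": [{"id": "1", "name": {"en": "display", "fr": "display"}}],
    "browsers": [{"id": "2", "name": {"en": "Firefox"}}],
}
body, etag = build_response(sample)
print(len(body), etag[:9])
```

Because the body contains every known localization, a single cached copy can serve Table Renderer requests for any locale.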

The Table Renderer constructs HTML fragments of a table representing the compatibility data, localized as requested. This is cached by the load balancer and returned to the in-page JavaScript on MDN. The in-page JavaScript replaces the stale compatibility table with the new table.
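The localization step can be sketched as follows. The Table Renderer has not been built, so everything here is hypothetical: the function names, the fallback order (exact locale, then base language, then English), and the data shape of localized name dictionaries, which are assumed to be non-empty.

```python
from html import escape


def pick_locale(names, locale, fallback="en"):
    """Choose the best available text from a {locale: text} dictionary."""
    for candidate in (locale, locale.split("-")[0], fallback):
        if candidate in names:
            return names[candidate]
    # No preferred locale is available: use whichever text exists.
    return next(iter(names.values()))


def render_table(feature_names, support, locale):
    """Render (browser names, version) pairs as an HTML table fragment."""
    rows = "".join(
        "<tr><th>{}</th><td>{}</td></tr>".format(
            escape(pick_locale(browser_names, locale)), escape(version)
        )
        for browser_names, version in support
    )
    caption = escape(pick_locale(feature_names, locale))
    return "<table><caption>{}</caption>{}</table>".format(caption, rows)


fragment = render_table(
    {"en": "Basic support", "fr": "Support simple"},
    [({"en": "Firefox"}, "1.0"), ({"en": "Chrome"}, "1.0")],
    "fr-FR",
)
print(fragment)
```

All text is escaped before it is placed in the fragment, since the data is contributed by users.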

The Data Explorer also uses the Data API, and the caching load balancer and instance cache also protect against repetitive data requests.

A minority of users also contribute data to MDN. There are a few hundred MDN changes in a day, and the BrowserCompat interfaces should support a similar number.

These users are directed to the C&M GUI, which talks directly to the Data API to read, update, add, and delete resources. The C&M GUI is an in-page JavaScript application, and all resources can be stored on a CDN. Changing a resource invalidates the instance cache, so that in-page JS will eventually see the new data. A forced cache refresh is used to ensure the contributor sees their contribution on MDN.
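Invalidation on write can be sketched with two small functions. Plain dictionaries stand in for the database and the instance cache, and the function names are hypothetical; this shows the idea only, not the Data API's implementation.

```python
def read_resource(database, instance_cache, key):
    """Return a resource, filling the instance cache on a miss."""
    if key not in instance_cache:
        instance_cache[key] = dict(database[key])
    return instance_cache[key]


def update_resource(database, instance_cache, key, changes):
    """Apply changes to a resource and drop its cached copy."""
    database[key] = {**database[key], **changes}
    # Invalidate, so the next read reloads the new data from the database.
    instance_cache.pop(key, None)


database = {("features", "1"): {"name": "display", "stable": False}}
instance_cache = {}
key = ("features", "1")

before = read_resource(database, instance_cache, key)["stable"]
update_resource(database, instance_cache, key, {"stable": True})
after = read_resource(database, instance_cache, key)["stable"]
print(before, after)   # prints: False True
```

Responses already held by the caching load balancer are a separate layer, which is why a forced refresh is still needed for the contributor to see the change immediately.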

It will also be possible for users to access the Data API directly, for example to programmatically update many items. We don't expect much load from this usage in the first few years after launch.

Load Testing

Given the above request pattern, it should be possible to simulate production loads and test the response of the BrowserCompat system.

In an integrated test, the entire infrastructure is duplicated in a staging environment, and loaded with production data. Load testers request random compatibility tables from the Table Renderer from a list of locales and MDN pages, which can be assembled from the bulk data export. Tests can be run against a cold cache, or can "warm" the cache by requesting each locale and page combination first. Different combinations can be tested, to determine the number and capability of each server needed to meet the projected load. Using a tool like New Relic may help pinpoint the components that could use optimization.
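The request plan for such a test can be sketched as below. It only builds the list of (locale, page) pairs to request; sending the HTTP requests is left to whichever load testing tool is chosen. The function names and the sample locales and pages are hypothetical.

```python
import itertools
import random


def warm_up_requests(locales, pages):
    """Every locale and page combination once, for warming the cache."""
    return list(itertools.product(locales, pages))


def random_requests(locales, pages, count, seed=None):
    """A random sample of combinations, as a load tester might request."""
    rng = random.Random(seed)   # a seed makes a test run repeatable
    return [(rng.choice(locales), rng.choice(pages)) for _ in range(count)]


locales = ["en-US", "fr", "ja"]
pages = ["Web/CSS/display", "Web/CSS/float", "Web/HTML/Element/video"]

warm_plan = warm_up_requests(locales, pages)
load_plan = random_requests(locales, pages, count=1000, seed=1)
print(len(warm_plan), len(load_plan))   # prints: 9 1000
```

Skipping the warm-up plan gives the cold cache case, and running it first gives the warm cache case.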

Similar testing can be used to determine the throughput of each component. A Table Renderer web worker can talk to a fake Data API and construct the same table as many times as it can in a minute. A Data API web worker can handle the same request over and over again, with or without an instance cache.
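A single-component throughput measurement can be sketched as a timing loop. The measure_throughput function and the fake_render handler are hypothetical; a real test would call the worker's request handler, and would run for the full minute rather than a fraction of a second.

```python
import time


def measure_throughput(handler, duration_seconds=60.0):
    """Call handler repeatedly for duration_seconds; return calls per second."""
    start = time.perf_counter()
    calls = 0
    while time.perf_counter() - start < duration_seconds:
        handler()
        calls += 1
    elapsed = time.perf_counter() - start
    return calls / elapsed


def fake_render():
    """Stand-in for constructing the same table from fake Data API output."""
    return "".join("<tr><td>{}</td></tr>".format(i) for i in range(100))


rate = measure_throughput(fake_render, duration_seconds=0.1)
print("{:.0f} renders per second".format(rate))
```

Running the same loop with and without an instance cache gives a direct comparison of the two cases.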