EngineeringProductivity/Meetings/2012-01-09

The Overview

Bugzilla

dkl, glob:

- Holidays for all (glob was out for the week between xmas and new years)

- 4.2rc1 has been released (as well as some minor stable releases with security fixes)

- 4.2rc1 means 4.2 final not too far off (hopefully a month or so)

- dkl is new Release Manager for upstream Bugzilla Project (mkanat has passed it along)

- BMO updated to 4.0.3 stable release.

- More work on performance enhancements for Bugzilla

- Fixed the SQL that stored old bug query lists, which could significantly slow down the display of show_bug.cgi

- Some more work to further refine show_bug.cgi with regard to flags.

- Working with IT to get the RAM upgraded in the PHX BMO servers.

- Once the new RAM is installed, glob will work with IT to tweak mod_perl settings to take advantage of the extra RAM by keeping httpd children around longer before they are killed off.

- Found an issue where some bug email was being queued but never sent due to an error. Mail should be flowing again, and some people may notice emails with old dates.

- Starting a conversation with Sheeri (new MySQL DBA) about taking a look at the BMO databases and working on improving performance.

- Various other fixes/improvements as well as administrative tasks (products/components/etc.)

Bughunter

jeads:

Added a tree representation to help visualize platform distribution data for Crashes, Assertions, and Valgrinds.

Added the mozilla/HTML5 based context menu

Added infrastructure for help documentation along with initial documentation.

Added Bugzilla/crash-stats search ability

bc:

Reviewing and testing patches

Worked on alleviating recent X server crashes on Linux which cause the crash worker to terminate.

Investigating workarounds for recent Fedora changes that make gstreamer plugin helpers block, which hangs crash workers.

Keeping up with current crash bugs.

ctalbert:

Bughunter Training Feb 9, 10 Noon in Mountain View

Eideticker

ctalbert:

Will (wlach) used Eideticker to verify that a checkerboarding fix reduced the amount of checkerboarding: http://masalalabs.ca/2012/01/measuring-reduced-checkerboarding-in-mobile-fennec/

We are setting up a meeting with interested folks to talk about getting a Mountain View-based Eideticker machine set up and deciding what needs to be done to make it a reliable resource for developers performing UI performance analysis.

wlach:

What I've been working on over the last while has been the web interface; most of my recent improvements are documented in this blog post:

http://masalalabs.ca/2011/12/year-end-eideticker-update/

I've also been working a bit on making it easier to write basic web services using the mozhttpd module in mozbase, which should be useful for Eideticker, Talos, and probably a whole bunch of other similar projects.

Marionette

mdas:

Since the last meeting, Marionette was fixed up to work with desktop Firefox, and now supports everything that had been supported in the mobile environment. Mobile support has been dropped since birch was merged into nightlies, since we had remote-debugger problems with it.

Navigation commands have been completed for the desktop environment (goBack, goForward, etc). Marionette now has a webserver as well, so you can serve your own HTML pages and test them. We're moving on to implementing context management support (get a window, switch to window, etc.).

We now have an EC2 build machine to test B2G builds. It is running here: http://builder.boot2gecko.org/. We're talking with Andreas about getting another machine to run Marionette tests.

The B2G team needs some support for running tests on HTML pages in the B2G environment (to test the Gaia interface), and we're currently investigating what needs to be done to help them run those tests.

Marionette is now merged into the b2g repo! So all b2g builds will have the marionette code available to them.

Mobile Automation

jmaher:

tbpl automation:

- turned on talos for xul fennec, tpan is perma red

- waiting on releng to turn on reftests/crashtests and robocop

ctalbert:

I have a few things on the Cross-browser startup test automation.

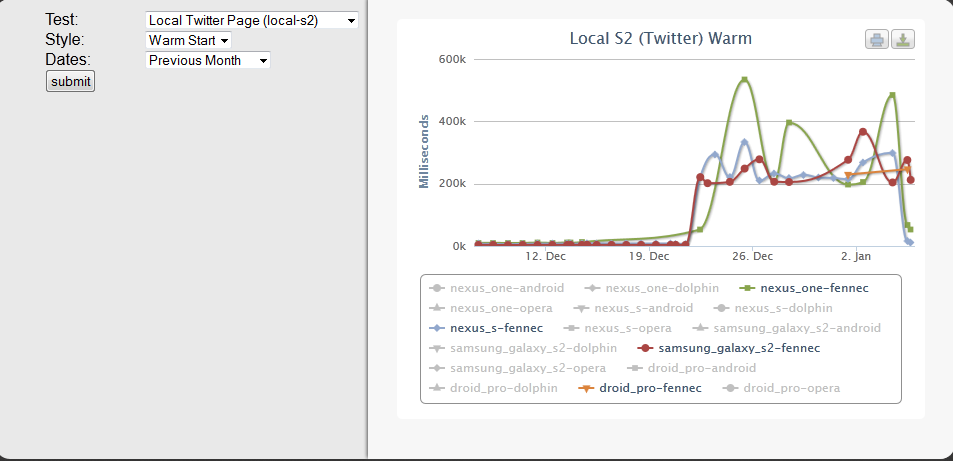

I noticed last week that every phone had fallen over on December 21st. I tried to figure out why and realized that we weren't updating the Fennec build after December 21st. Bob Moss stepped in and we got that fixed. Once that was fixed, though, I noticed that we weren't getting all our results from the automation into the database, and digging into that I found that there has been a massive regression in the Fennec "onload" handler time. These graphs measure how long it takes for Fennec to bring up a site and fire the onload handler. You can see the regression in the attached graphic. Still trying to see if this is expected, or if it is something in the automation going sideways.

Update: Brad and Clint figured out that the cause was a bug fix in Fennec, which now actually loads the last visited page. Because these tests make their measurement when the "onload" handler is fired, the measurement started from the wrong "starttime", which caused the graph you see above. Ctalbert is working on a fix and removing the offending data so that it is working again.

Mozbase

- We have three active contributors sending patches in for mozbase! They rock.

- Special thanks to BYK, ckvk, and chmanchester.

- Jeff got us autobot automation - it now runs tests every time you land a change in mozbase.

Peptest

ctalbert:

Current state is that we landed all the blockers that had prevented us from turning on the peptest framework on try server. We are currently in a holding pattern, waiting for aki to come back to stage the last change that should make it live on try server.

Mark also did some peptest analysis with one of the GC-heavy sites to get responsiveness numbers during a GC pause.

Robocop

jmaher:

Robocop has landed all original development patches. Work is in progress to get it turned on in release engineering. Mobile developers are already writing new patches to refactor and improve tests! The remaining pending patches make the logging mochitest- or talos-compatible.

Tegra Pool

jhammel:

No updates.

Talos

jmaher:

So talos has a lot going on, especially since the last meeting:

Xperf talos-

- is sort of a project that is not actively being worked on

- :Yoric has patches to add custom Mozilla xperf providers, those still need to land

- Next steps are to work with Yoric to figure out if we want to measure those in talos

AMO Performance-

- finished using the new AMO API and fixing the platform and os values

- waiting on AMO to finish testing and give me the thumbs up

- remaining work is to switch databases to a production database and provide production and staging environment

Jetpack Performance-

- scoping out the project: https://wiki.mozilla.org/Auto-tools/Projects/JetPerf

Signal from Noise-

- created wiki and general plan: https://wiki.mozilla.org/Auto-tools/Projects/Signal_From_Noise

- started a few brainstorming meetings to figure out the plan

- working on dropping the first value in the talos data set vs the maximum value (https://bugzilla.mozilla.org/show_bug.cgi?id=710484)

- next steps are to have a kick off meeting with releng, webdev and ateam
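For anyone not following the bug, here is a rough sketch of the two options being compared for the talos data set: throw away the first value of a run, or throw away the maximum value. This is not the talos code. The function names, the use of a plain mean over the remaining values, and the sample numbers are all assumptions made up for illustration.

```python
from statistics import mean


def drop_first(values):
    """Discard the first replicate and average the rest (hypothetical helper)."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    return mean(values[1:])


def drop_max(values):
    """Discard one occurrence of the largest replicate and average the rest
    (hypothetical helper)."""
    if len(values) < 2:
        raise ValueError("need at least two replicates")
    remaining = list(values)
    remaining.remove(max(remaining))
    return mean(remaining)


# Made-up page-load times in ms: a slow first load plus a later spike.
replicates = [150.0, 100.0, 102.0, 98.0, 250.0]

print(drop_first(replicates))  # 137.5 (the late spike is still included)
print(drop_max(replicates))    # 112.5 (the slow first load is still included)
```

With numbers like these the two filters give noticeably different summaries, which is the kind of difference the bug is trying to evaluate on real data.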

Other:

- Lots of work towards installation and using mozbase

- patches from 3 different community members

- osx rss collection after each page: https://bugzilla.mozilla.org/show_bug.cgi?id=693404

Is that fun stuff or what!

Speedtests

mcote:

Will try to get the V8 test working for the JS team, at some point.

Mozmill

jmaher:

Patch from Chris, a new community member! Updates to mozhttpd and mozdevice are plentiful :)

jhammel:

mozmill is pretty much waiting indefinitely for QA to start adopting mozmill 2., but mozbase work is very active

WebRTC

ted:

The WebRTC work is coming along pretty well. I've got the GYP->Makefile generator working on Linux/Mac, and I've started working on Windows support.

The relevant bugs are: https://bugzilla.mozilla.org/show_bug.cgi?id=643692 https://bugzilla.mozilla.org/show_bug.cgi?id=688187

War On Orange

mcote:

Emails are now also posted to the dev.tree-management newsgroup (they used to go only to the mailing list).

flyingtanks as a staging server

mcote:

I've made a bunch of improvements and bugfixes to templeton. IT is in the process of opening up access to the ES db to flyingtanks, at which point I'll stage a (templeton-compatible) copy of OrangeFactor.

Other

mcote:

I finished the first draft of the Automated Testing Guide.

Upcoming Events

All times Pacific Time, click on link to find your timezone

- Platform Meeting (all platform engineers) Tuesday at 11 AM, Conf # 8605.

- Mobile Test Automation Wednesday at 10:30 AM, Conf # 304

- Marionette/Eideticker Meeting Thursday at 10 AM Conf # 304

- Project Snappy Meeting Thursday at 11 AM Conf # 95346

Bughunter Training

February 9-10 in Mountain View

April All Hands

April 16-20 in San Francisco

- Get your travel booked, add yourself to the wiki

- Ashley will book your hotels

- Plan to arrive in time for the 10 AM Monday meeting. Leaving on Friday is OK.

- Food

- We can have breakfast/lunches catered.

- Ctalbert's proposal:

- Breakfast each day

- Lunch on Monday (whole office lunch after Monday meeting)

- Lunch on Tuesday

- No catered lunch on Wednesday - we can fend for ourselves

- Thursday, no catered lunch, but we'll make an excursion to the weekly farmer's market/food stalls at the Ferry Building. It's a fun event.

- Wednesday night we'll have a nice team dinner somewhere in the city

Round Table

- Update on SF workweek logistics (see above)

- Moving machines onto the PHX bughunter cluster

- how many do we need

- what's our capacity

- what are the steps involved?

Q1 Goals

- We'll discuss them