Networking/Archive/DASH/Implementation

Work in progress

This design page for DASH implementation in Gecko is focused on the Networking/Necko code to be implemented.

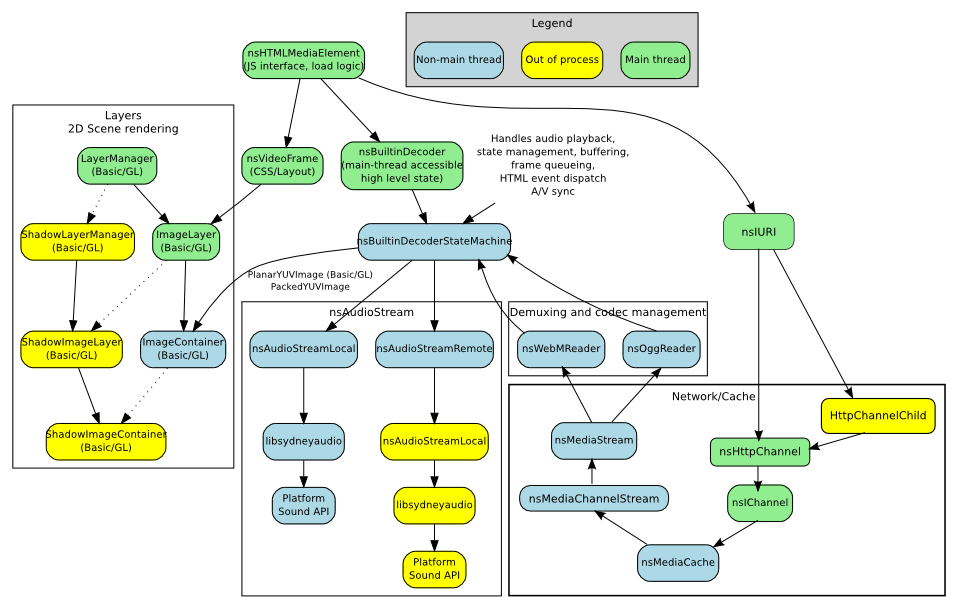

Current Design and Behavior of non-adaptive streams

With current native video, Necko buffers the data until it is safe to start playing. nsMediaChannelStream downloads data via HTTP and puts that data in an nsMediaCache. nsMediaCache in turn makes this data available to decoder threads via read() and seek() APIs. The decoders then read the data and enqueue it in A/V queues.
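As a rough illustration of the data flow described above, the following C++ sketch models a cache that the download side appends to and that a decoder reads from. SimpleMediaCache and its method signatures are hypothetical. They are far simpler than the real nsMediaCache (no blocking reads, eviction or threading) and only show how append, read and seek relate to each other.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Hypothetical stand-in for nsMediaCache; NOT the real Gecko class.
class SimpleMediaCache {
public:
  // Called by the download side (the role nsMediaChannelStream plays)
  // as bytes arrive over HTTP.
  void Append(const uint8_t* aData, size_t aLength) {
    mBytes.insert(mBytes.end(), aData, aData + aLength);
  }

  // Called by a decoder: copies up to aCount bytes from the current read
  // offset and advances it. Returns the number of bytes copied, which is 0
  // when no buffered data remains.
  size_t Read(uint8_t* aBuffer, size_t aCount) {
    assert(mOffset <= mBytes.size());
    size_t n = std::min(aCount, mBytes.size() - mOffset);
    if (n > 0) {
      std::memcpy(aBuffer, mBytes.data() + mOffset, n);
      mOffset += n;
    }
    return n;
  }

  // Called by a decoder: moves the read offset. Fails if the target has not
  // been downloaded yet (a real cache might issue a new request instead).
  bool Seek(size_t aOffset) {
    if (aOffset > mBytes.size()) {
      return false;
    }
    mOffset = aOffset;
    return true;
  }

private:
  std::vector<uint8_t> mBytes;
  size_t mOffset = 0;
};
```

In this simplified model a short read just means "no more data yet"; the real cache would block the decoder thread until more data arrives.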

Chris Pearce has a blog post that describes the current architecture.

Figure 1 Current Gecko Video Architecture (taken from http://pearce.org.nz/uploaded_images/video-architecture.svg with additions for Necko code).

High Level Approaches - Segment Request and Delivery to MediaStream/MediaCoder

Two initial ideas were suggested by Rob O'Callahan:

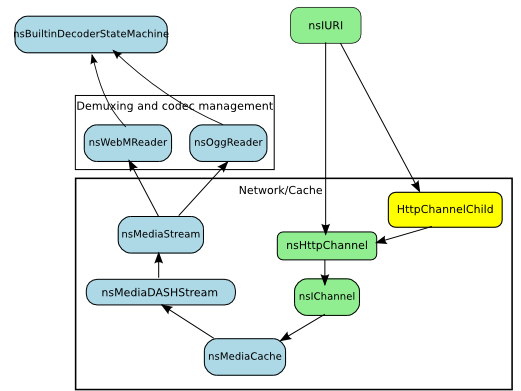

- One nsMediaDASHStream class is created which manages the monitoring of local capabilities/load and adapts download by switching streams as necessary. Then one nsMediaCache provides data to a single nsWebMDecoder.

Figure 2 - High level view of option 1: Single Audio/Video decoder.

Description needed

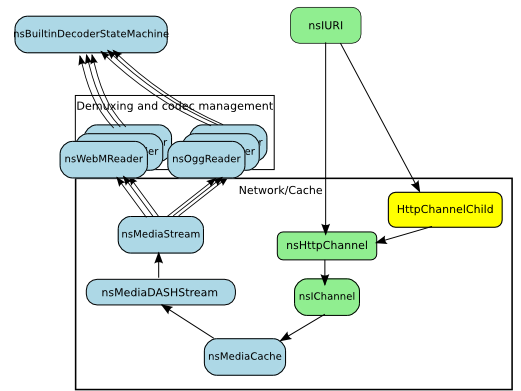

- One nsMediaDASHStream as before, but with multiple nsWebMDecoders, one for each encoded stream available on the server. Only one nsWebMDecoder would be used at a time.

Figure 3 - High level view of option 2: Multiple audio/video decoders.

Description needed

Question: Are these approaches possible? Can a single MediaCoder handle multiple streams? Can a single MediaStream handle changes in bitstream coming from DASH?

- VP8/Video

- Chris Pearce: "For VP8 basically yes. In bug 626979 (and a follow-up fix in bug 661456) we implemented support for WebM's track metadata DisplayWidth/DisplayHeight elements. We scale whatever contained video frames we encounter to DisplayWidth x DisplayHeight pixels, so you can change the dimensions of video frames at will while encoding a single track."

- Tim Terriberry: "Resolution is not the same thing as bitrate, but in general yes, you can change both in VP8 without re-initializing the decoder. The one caveat is that if you do want to switch resolution, the first frame has to be a keyframe. You should also start with a keyframe when changing between streams encoded at different bitrates, or you'll get artifacts caused by prediction mis-matches."

Question: Does the DASH Media Segment definition or media encoding process require that each new segment start with a keyframe?
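The keyframe constraint Tim describes could be enforced along the lines of the C++ sketch below. It assumes the caller can tell whether the next segment of the target stream begins with a keyframe, which is exactly what the question above leaves open. StreamSwitcher and its methods are hypothetical names, not part of Gecko or libdash.

```cpp
#include <cassert>
#include <cstddef>

// Hypothetical helper; not part of Gecko or libdash.
class StreamSwitcher {
public:
  explicit StreamSwitcher(size_t aStreamCount)
    : mStreamCount(aStreamCount), mActive(0), mPending(0) {
    assert(aStreamCount > 0);
  }

  // The adaptation logic asks for a different stream. Nothing changes yet.
  void RequestSwitch(size_t aStream) {
    assert(aStream < mStreamCount);
    mPending = aStream;
  }

  // Called before each segment is fetched. A pending switch is applied only
  // if the target stream's segment starts with a keyframe; otherwise the
  // current stream is kept to avoid prediction mismatches.
  size_t StreamForNextSegment(bool aTargetStartsWithKeyframe) {
    if (mPending != mActive && aTargetStartsWithKeyframe) {
      mActive = mPending;
    }
    return mActive;
  }

private:
  size_t mStreamCount;
  size_t mActive;   // stream currently being decoded
  size_t mPending;  // stream the adaptation logic would like to use
};
```

With this shape a switch request is simply deferred until the next suitable segment boundary, so the decoder never sees a non-keyframe as the first frame of a new stream.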

- Vorbis/Audio

- Paraphrased from Chris and Tim: Vorbis is more complicated because of the way it is encoded. It uses two different block sizes, 'long' and 'short'; the encoder decides which to use for each block. So, if we had two streams encoded at different rates, there is no guarantee that the blocks would line up. This is important because in order to decode the first half of a block, you need the last half of the previous block, and mismatched block sizes from disparate streams don't provide this. If we were to change streams, either a pause in audio would happen (due to the decoder being flushed), or we'd have to use some kind of extrapolation (LPC extrapolation).

- To avoid this, we could require that each segment include extra packets to allow correct decoding. Or, we can just support no audio adaptation to start with (similar to Apple HLS). Rob O'Callahan is working on a Media Streams API which includes cross-fading - this may be a longer term solution, after starting with non-adaptive audio.

Use of libdash from ITEC

Code from libdash has been released under the MPL 2.0 by Christian Timmerer and Christopher Mueller of ITEC, Austria. The plan is to fork the codebase and integrate it with Gecko code. At a high level this involves replacing the DOMParser/libxml and HTTP code in libdash with nsDOMParser and Necko's HTTP code. As of Feb 16, 2012, this is under way.

This adds extra components in the above high level diagram, namely DASHManager, MPDParser and the MPD classes, as well as the Adaptation Logic. The following sections will reflect the base design used in libdash including any changes.

DASHManager

Manages all DASH activity in Gecko, similar to nsHttpConnectionMgr. MPD and MPDParser objects are obtained here.

MPDParser

Will use nsDOMParser to read XML from the nsHttpChannel and populate the MPD classes.

Diagram needed

Detailed Description needed

MPD Classes and Objects

As per libdash

Diagram needed

Description needed
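Until the diagram and description are filled in, the C++ sketch below shows one possible shape for the MPD object tree. It follows the element hierarchy of the published DASH specification (MPD, Period, AdaptationSet, Representation); the struct layout, field names and helper functions are assumptions for illustration, and the class names actually used in libdash may differ.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// Hypothetical plain-data model of a parsed MPD; not the libdash classes.
struct Representation {
  std::string id;
  uint64_t bandwidth = 0;  // bits per second
  uint32_t width = 0;
  uint32_t height = 0;
  std::vector<std::string> segmentUrls;
};

struct AdaptationSet {
  std::string mimeType;  // e.g. "video/webm"
  std::vector<Representation> representations;
};

struct Period {
  std::vector<AdaptationSet> adaptationSets;
};

struct MPD {
  std::vector<Period> periods;
};

// Appends a representation. A bandwidth of 0 is rejected because it would
// make rate-based stream selection meaningless.
void AddRepresentation(AdaptationSet& aSet, const Representation& aRep) {
  assert(aRep.bandwidth > 0);
  aSet.representations.push_back(aRep);
}

// Produces indented debug output for a populated MPD tree.
std::string DebugString(const MPD& aMpd) {
  std::string out;
  for (size_t p = 0; p < aMpd.periods.size(); ++p) {
    out += "Period " + std::to_string(p) + "\n";
    for (const AdaptationSet& set : aMpd.periods[p].adaptationSets) {
      out += "  AdaptationSet " + set.mimeType + "\n";
      for (const Representation& rep : set.representations) {
        out += "    Representation " + rep.id + " " +
               std::to_string(rep.bandwidth) + " bps\n";
      }
    }
  }
  return out;
}
```

A parser would populate these structs from the XML document, and DebugString gives a quick way to check what was read.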

Plan

A staged approach will be taken for implementation, with early stages as follows:

- Parse an MPD file off the network and choose a single stream for media playback. No adaptation for this first phase.

- Add a basic adaptation logic.

- (Long term) Expose JS APIs as follows:

- Buffering/request handling

- MPD handling

- Adaptation logic

- Logging, monitoring.

Please note that the fourth JS API (logging, monitoring) would correspond to the DASH metrics defined in the Annex of the DASH specification. It would allow application developers to generate reports back to the content provider in order to improve Quality of Service/Experience. Also note that JS APIs may be exposed earlier; the primary goal, however, is to get adaptive video working in Gecko, and JS APIs (while very desirable) should not block work in these first stages.
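The first two stages of the plan could look roughly like the C++ sketch below. Both functions are hypothetical. Starting with the lowest-bandwidth stream and then picking the highest bandwidth that fits within measured throughput is just one simple policy, not the adaptation logic from libdash.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Stage 1 (no adaptation): pick one stream and stay on it. This sketch
// assumes the lowest-bandwidth stream is the safe default. Bandwidths are
// in bits per second, one entry per stream listed in the MPD.
size_t ChooseInitialStream(const std::vector<uint64_t>& aBandwidths) {
  assert(!aBandwidths.empty());
  size_t lowest = 0;
  for (size_t i = 1; i < aBandwidths.size(); ++i) {
    if (aBandwidths[i] < aBandwidths[lowest]) {
      lowest = i;
    }
  }
  return lowest;
}

// Stage 2 (basic adaptation): pick the highest-bandwidth stream that does
// not exceed the measured throughput. If none fits, fall back to the
// lowest-bandwidth stream.
size_t ChooseStreamForThroughput(const std::vector<uint64_t>& aBandwidths,
                                 uint64_t aThroughputBps) {
  size_t best = ChooseInitialStream(aBandwidths);
  for (size_t i = 0; i < aBandwidths.size(); ++i) {
    if (aBandwidths[i] <= aThroughputBps &&
        aBandwidths[i] > aBandwidths[best]) {
      best = i;
    }
  }
  return best;
}
```

The stage 2 function would be called before each segment request, with the throughput value coming from whatever capability/load monitoring is eventually provided.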

Diagram needed

Description needed

Capability/Load Monitoring

How will this be provided?

Media Segments

Processed as per DASH/Matroska/WebM

Diagram needed

Description needed

Related Bugs

Overall DASH tracking bug: bug 702122, "add support for DASH (WebM)"

Other bugs to be created:

- Add libdash code to mozilla-central, integrated with nsDOMParser and nsHttpChannel

- Exclude code from general build for now.

- Code should read in MPD, be able to produce debug output for MPD, and populate MPD classes

- Update codebase with bugfixes from Christopher Mueller.

- Choose stream from MPD and playback, non-adaptively

- Integrate adaptation logic