Add-ons/Expired-Certificate

Add-ons Incident Retrospective Summary

Authored by: Sheila Mooney

Overall Status

We have spoken to many of the individuals directly involved in the response, as well as the teams working directly with the certificate management and add-on signing systems. We have a list of opportunities for improvement; the next steps are to prioritize them and find owners to drive these investigations and changes forward.

Problem Statement

On May 4th, 2019 at midnight UTC, the intermediate certificate used as part of the add-on signing process expired. The result was that every add-on signed using this certificate could not be loaded into Firefox. For many of our users, the internet was broken and their experience was severely impacted. Approximately 40 to 50 percent of our users have add-ons. Eric Rescorla published a blog post shortly following the incident summarizing in more detail what happened.

Response & Timeline

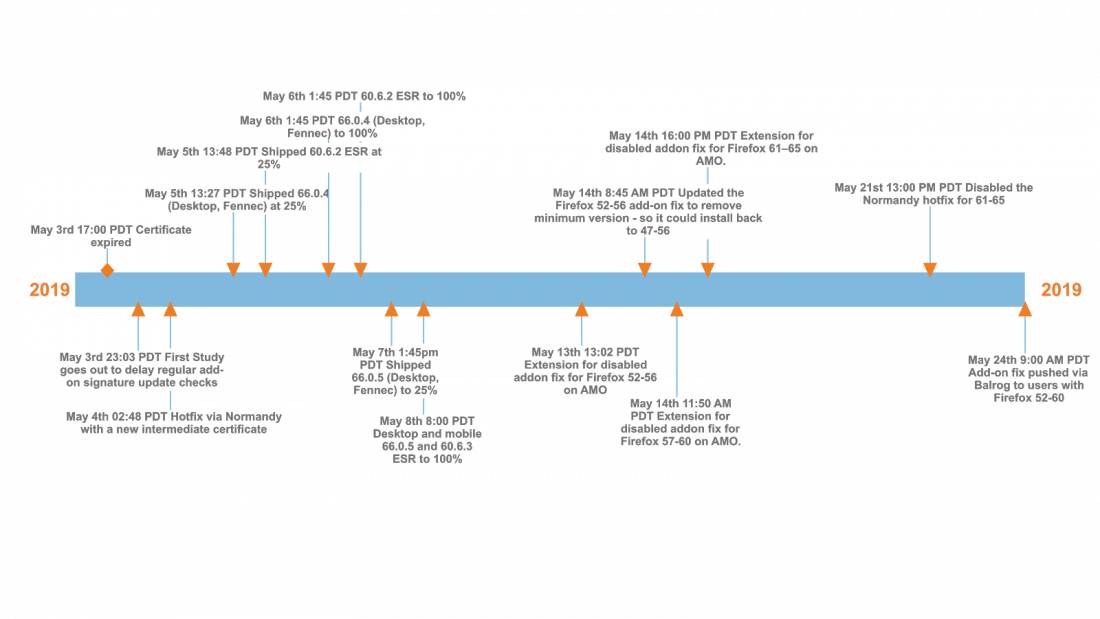

Our users reported the issue on Twitter, Reddit and other social channels. We mobilized our incident response, tweeted to let our users know we were aware of the issue and worked on solutions for the problem. Approximately 6 hours post-incident we published a fix via a Normandy study to delay regular add-on signature update checks. Within 9 hours of the issue, we shipped a new certificate, also via Normandy, with an updated validity window. Dot releases for Desktop, Fennec and ESR were released within 48 hours, and a number of follow-on fixes went out to support users on older versions of Firefox. Our last fix was deployed on May 24th. A timeline of remediations is shown in the figure above.

Post Mortem

Hundreds of staff and volunteers came together to respond to the incident. We could not interview all of them but we did speak to over 30 individuals to understand how this happened, how we responded and what we can improve going forward.

Root Cause

This situation wasn’t as simple as “we let a certificate expire before it could be renewed”. We were aware of the expiry date when the certificate was issued 2 years ago. Peter Saint-Andre and Matthew Miller put together a more detailed technical report on the root cause. Next steps for helping protect us against this in the future involve a more detailed review of how we handle add-on security in general.

Opportunities for Improvement

One of the most important aspects of a post mortem is the set of learnings and opportunities for improvement and discussion that it produces. The Firefox organization will be working through the following list of improvements and spinning up projects to investigate further and drive them forward.

| Opportunity | Next Steps |
|---|---|
| Tuning our Incident Command Process by looking at some of the following areas to create a more structured playbook: | Work has already started on this. We are capturing some improvements. Next steps are to review what we have and identify gaps. |
| A full map of all the various deployment channels we can use to ship code to users for all our products | Confusion around our deployment channels surfaced in many of the post mortem discussions. Next steps are to find a lead and team to tackle this project. Pieces of this are underway already. |
| Investigate a plan for deploying a “real” hotfix to everybody (big red button) in a similar emergency. We had to deploy multiple dot releases and other remediations just to get everybody running again. | Identify a team of people to talk about a strategy and approach. Some discussions happening already but we need to craft a formal project around them. |
| As we attempted to deploy remediations we kept running into a number of bugs and edge cases to handle. We recommend that we map out a full failure cascade in detail to understand if there are improvements we can make for the future. | Talk to QA/Relman about an owner for this exercise. |
| Detailed audit of anything with an expiry date so we understand what other time bombs might be out there. Bug logged. | We have logged a bug already. Next steps are to find an owner. |
| Plan for the certificate that will expire in 2025. Bug logged. | We have logged a bug already. Next steps are to find an owner. |
| Now that we understand the importance of add-on support for mobile (ratings plummeted during the incident), we might want to reconsider that strategy/priority for Fenix. | Product discussion and decision to make here. |
| Solution for QA support on the weekend and during an incident of this level. This can be broader and cover how we handle incidents outside of the more normal business hours. | Raised with Engineering Leadership. Look at next steps for prioritizing. |
| Detailed post mortem to look at events and decisions that caused us to delete a week’s worth of Telemetry data for all Desktop users. Investigate some broadcast mechanism in our product so we can let our users know that we are aware of an issue. We used many channels, responded to threads directly. This was time consuming. | To be scheduled post Whistler all-hands. Needs to be prioritized in the context of other investigations. |
| Deeper investigation into the systems involved for handling add-ons and security. | Project is underway to investigate this further. Will likely break out into multiple sub projects. |
| Explore diversity in the configurations that we run in continuous integration. | Working with Engineering to determine if there is a project here that should be prioritized. |
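
As an illustration of the expiry audit listed in the table above, the following is a minimal sketch of how an inventory of expiring assets might be checked against a warning horizon. It is not a description of any existing Mozilla tooling: the function name `expiring_within`, the inventory names and the 90-day horizon are all assumptions made for the example, and only the May 4th, 2019 expiry date comes from this document.

```python
from datetime import datetime, timedelta, timezone


def expiring_within(assets, now, horizon):
    """Return (name, time_left) pairs for assets expiring on or before
    now + horizon, sorted so the soonest expiry comes first."""
    cutoff = now + horizon
    flagged = [
        (name, not_after - now)
        for name, not_after in assets.items()
        if not_after <= cutoff
    ]
    return sorted(flagged, key=lambda pair: pair[1])


# Hypothetical inventory. The first expiry is the one from this incident;
# the second entry and its date are placeholders for illustration only.
inventory = {
    "addon-signing-intermediate": datetime(2019, 5, 4, tzinfo=timezone.utc),
    "hypothetical-other-asset": datetime(2030, 1, 1, tzinfo=timezone.utc),
}

# Pretend the audit runs about a month before the incident.
now = datetime(2019, 4, 1, tzinfo=timezone.utc)

for name, left in expiring_within(inventory, now, timedelta(days=90)):
    print(f"{name} expires in {left.days} days")
```

A check of this kind only helps if the inventory itself is complete, which is why the audit item above comes first.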

Conclusion and Next Steps

In addition to distributing this document internally, we will be working on a public blog post talking about improvements we want to put in place so this doesn’t happen again. The Firefox leadership team will be tackling the opportunities for improvement and looking to spin up a number of projects in the coming weeks and months.