Audio Data API 1

Defining an Enhanced API for Audio (Draft Recommendation)

Abstract

The HTML5 specification introduces the <audio> and <video> media elements, and with them the opportunity to dramatically change the way we integrate media on the web. The current HTML5 media API provides ways to play and get limited information about audio and video, but gives no way to programmatically access or create such media. We present a new extension to this API, which allows web developers to read and write raw audio data.

Authors

Other Contributors

- Thomas Saunders

- Ted Mielczarek

- Felipe Gomes

- Ricard Marxer (@ricardmp)

Status

This is a work in progress. This document reflects the current thinking of its authors, and is not an official specification. The goal of this specification is to experiment with audio data on the way to creating a more stable recommendation. It is hoped that this work, and the ideas it generates, will eventually find their way into Mozilla and other HTML5 compatible browsers.

The continuing work on this specification and API can be tracked here, and in Mozilla bug 490705. Comments, feedback, and collaboration are all welcome.

API Tutorial

We have developed a proof of concept, experimental build of Firefox (see below) which extends the HTMLMediaElement (e.g., affecting <video> and <audio>) and implements the following basic API for reading and writing raw audio data:

Reading Audio

Audio data is made available via an event-based API. As the audio is played, and therefore decoded, each frame is passed to content scripts for processing before being written to the audio layer. Playing, pausing, and stopping the audio all affect the streaming of this raw audio data as well.

onaudiowritten="callback(event);"

<audio src="song.ogg" onaudiowritten="audioWritten(event);"></audio>

mozFrameBuffer

var samples;

function audioWritten(event) {

samples = event.mozFrameBuffer;

// sample data is obtained using samples.item(n)

}
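As an illustration of processing a frame inside the handler, the sketch below computes the root-mean-square level of the samples. The helper name frameRms is hypothetical and not part of the API; it only assumes that the buffer exposes length and item(n), as described for mozFrameBuffer.

```javascript
// Hypothetical helper: root-mean-square level of one frame of samples.
// Assumes `buffer` exposes `length` and `item(n)`, like event.mozFrameBuffer.
function frameRms(buffer) {
  var n = buffer.length;
  if (n === 0) {
    return 0;
  }
  var sumOfSquares = 0;
  for (var i = 0; i < n; i++) {
    var s = buffer.item(i);
    sumOfSquares += s * s;
  }
  return Math.sqrt(sumOfSquares / n);
}

// Handler sketch showing where the helper would be called.
function audioWritten(event) {
  var level = frameRms(event.mozFrameBuffer);
  // `level` could drive a volume meter, for example
  return level;
}
```

Because short frames are padded with zeros, the level computed for a padded frame will be lower than the level of the audio it actually contains.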

Getting FFT Spectrum

Most data visualizations or other uses of raw audio data begin by calculating an FFT. A pre-calculated FFT is available for each frame of audio decoded.

mozSpectrum

var spectrum;

function audioWritten(event) {

spectrum = event.mozSpectrum;

// spectrum data is obtained using spectrum.item(n)

}

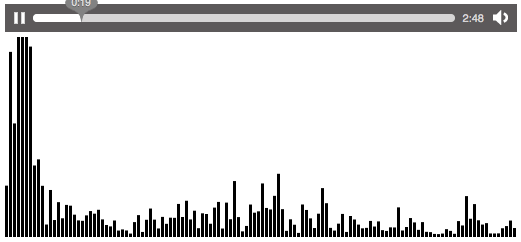
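A common first step with spectrum data is to locate the strongest bin. The sketch below does this for any object exposing length and item(n). The name peakBin is hypothetical, and the helper returns only a bin index, because this document does not state how bins map to frequencies in Hz.

```javascript
// Hypothetical helper: find the bin with the largest magnitude.
// Assumes `spectrum` exposes `length` and `item(n)`, like event.mozSpectrum.
function peakBin(spectrum) {
  var bestIndex = -1;        // stays -1 if the spectrum is empty
  var bestValue = -Infinity;
  for (var i = 0; i < spectrum.length; i++) {
    var v = spectrum.item(i);
    if (v > bestValue) {
      bestValue = v;
      bestIndex = i;
    }
  }
  return { index: bestIndex, magnitude: bestValue };
}
```

Inside an onaudiowritten handler this might be called as peakBin(event.mozSpectrum).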

Complete Example: Reading and Displaying FFT Spectrum

This example uses the native FFT data from mozSpectrum to display the frequency spectrum in a canvas:

<!DOCTYPE html>

<html>

<head>

<title>JavaScript Spectrum Example</title>

</head>

<body>

<audio src="song.ogg"

controls="true"

onaudiowritten="audioWritten(event);"

style="width: 512px;">

</audio>

<div><canvas id="fft" width="512" height="200"></canvas></div>

<script>

var spectrum;

var canvas = document.getElementById('fft');

var ctx = canvas.getContext('2d');

function audioWritten(event) {

spectrum = event.mozSpectrum;

var specSize = spectrum.length, magnitude;

// Clear the canvas before drawing spectrum

ctx.clearRect(0,0, canvas.width, canvas.height);

for ( var i = 0; i < specSize; i++ ) {

magnitude = spectrum.item(i) * 4000; // multiply spectrum by a zoom value

// Draw rectangle bars for each frequency bin

ctx.fillRect(i * 4, canvas.height, 3, -magnitude);

}

}

</script>

</body>

</html>

Writing Audio

It is also possible to set up an audio element for raw writing from script (i.e., without a src attribute). Content scripts can specify the audio stream's characteristics, then write audio frames using the following methods.

mozSetup(channels, sampleRate, volume)

var audioOutput = new Audio();

audioOutput.mozSetup(2, 44100, 1);

mozWriteAudio(length, buffer)

var samples = [0.242, 0.127, 0.0, -0.058, -0.242, ...];

var buffered = audioOutput.mozWriteAudio(samples.length, samples);

Note: To copy the input samples of one audio stream directly to another audio output, you will need to convert the event.mozFrameBuffer pseudo-array to a native JavaScript array like so:

var samples, outputSamples;

function audioWritten(event){

samples = event.mozFrameBuffer;

outputSamples = [];

for(var i=0;i < samples.length; i++){

outputSamples[i] = samples.item(i);

}

// outputSamples[] is now ready for writing to other audio element

}

Complete Example: Creating a Web Based Tone Generator

This example creates a simple tone generator, and plays the resulting tone.

<!DOCTYPE html>

<html>

<head>

<title>JavaScript Audio Write Example</title>

</head>

<body>

<input type="text" size="4" id="freq" value="440"><label for="freq">Hz</label>

<button onclick="generateWaveform()">set</button>

<button onclick="start()">play</button>

<button onclick="stop()">stop</button>

<script type="text/javascript">

var sampledata = [];

var freq = 440;

var interval = -1;

var audio;

function writeData() {

var n = Math.ceil(freq / 100);

for(var i=0;i<n;i++)

audio.mozWriteAudio(sampledata.length, sampledata);

}

function start() {

stop(); // clear any timer left over from an earlier click on play

audio = new Audio();

audio.mozSetup(1, 44100, 1);

interval = setInterval(writeData, 10);

}

function stop() {

if (interval != -1) {

clearInterval(interval);

interval = -1;

}

}

function generateWaveform() {

freq = parseFloat(document.getElementById("freq").value);

// we're playing at 44.1kHz, so figure out how many samples

// will give us one full period

var samples = 44100 / freq;

sampledata = Array(Math.round(samples));

for (var i=0; i<sampledata.length; i++) {

sampledata[i] = Math.sin(2*Math.PI * (i / sampledata.length));

}

}

generateWaveform();

</script>

</body>

</html>
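Because generateWaveform() rounds one period to a whole number of samples, the tone that is played is usually slightly off from the requested frequency. The sketch below works through that arithmetic; actualFrequency is a hypothetical helper, not part of the API.

```javascript
// Hypothetical helper: frequency actually produced when one period is
// rounded to a whole number of samples, as the tone generator above does.
function actualFrequency(requestedHz, sampleRate) {
  var samplesPerPeriod = Math.round(sampleRate / requestedHz);
  return sampleRate / samplesPerPeriod;
}

// 44100 / 440 is about 100.23, which rounds to 100 samples per period,
// so the looped waveform repeats 441 times per second.
var played = actualFrequency(440, 44100); // 441
```

The error grows with frequency, since fewer samples are available to represent each period.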

DOM Implementation

nsIDOMAudioData

Audio data (raw and spectrum) is currently returned in a pseudo-array named nsIDOMAudioData. In the future this will be changed to use the much faster native WebGL Array.

interface nsIDOMAudioData : nsISupports

{

readonly attribute unsigned long length;

float item(in unsigned long index);

};

The length attribute indicates the number of elements of data returned.

The item() method provides a getter for audio sample data (e.g., floats).
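For experimenting with code outside the experimental build, the interface can be imitated in plain JavaScript. The sketch below is a hypothetical stand-in, not the real implementation: makeAudioData and toArray are illustrative names, and the behaviour of item() for an out-of-range index is an assumption (here it returns undefined).

```javascript
// Hypothetical stand-in for nsIDOMAudioData, backed by a plain array.
function makeAudioData(values) {
  var copy = values.slice(); // keep callers from mutating the data
  return {
    length: copy.length,
    item: function (index) {
      return copy[index]; // assumption: undefined when out of range
    }
  };
}

// Copy any object with `length` and `item(n)` into a native array.
function toArray(audioData) {
  var out = new Array(audioData.length);
  for (var i = 0; i < audioData.length; i++) {
    out[i] = audioData.item(i);
  }
  return out;
}
```

For example, toArray(makeAudioData([0.25, -0.5, 0])) yields a new native array holding the same three values.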

nsIDOMNotifyAudioWrittenEvent

Audio data is made available via the following event:

- Event: AudioWrittenEvent

- Event handler: onaudiowritten

The AudioWrittenEvent is defined as follows:

interface nsIDOMNotifyAudioWrittenEvent : nsIDOMEvent

{

readonly attribute nsIDOMAudioData mozFrameBuffer;

readonly attribute nsIDOMAudioData mozSpectrum;

};

The mozFrameBuffer attribute contains the raw audio data (float values) obtained from decoding a single frame of audio. This is of the form [left, right, left, right, ...]. All audio frames are normalized to a length of 4096 or greater, where shorter frames are padded with 0 (zero).
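The interleaved layout can be split into per-channel arrays as sketched below. The helper name deinterleaveStereo is hypothetical, and it assumes exactly two channels; this document does not describe how a script learns the channel count of the audio being read.

```javascript
// Hypothetical helper: split [left, right, left, right, ...] into two arrays.
// Assumes two channels and an object exposing `length` and `item(n)`.
function deinterleaveStereo(frame) {
  var pairs = Math.floor(frame.length / 2);
  var left = new Array(pairs);
  var right = new Array(pairs);
  for (var i = 0; i < pairs; i++) {
    left[i] = frame.item(2 * i);      // even indices: left channel
    right[i] = frame.item(2 * i + 1); // odd indices: right channel
  }
  return { left: left, right: right };
}
```

Any zero padding at the end of the frame is carried through to both channel arrays.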

The mozSpectrum attribute contains a pre-calculated FFT for this frame of audio data. It is calculated using the first 4096 float values in the current audio frame only, which may include zeros used to pad the buffer. It is always 1024 elements in length.

nsIDOMHTMLMediaElement additions

Audio write access is achieved by adding two new methods to the HTML media element:

void mozSetup(in long channels, in long rate, in float volume);

void mozWriteAudio(in long count, [array, size_is(count)] in float valueArray);

The mozSetup() method allows an <audio> or <video> element to be set up for writing from script. This method must be called before mozWriteAudio() can be called, since an audio stream has to be created for the media element. It takes three arguments:

- channels - the number of audio channels (e.g., 2)

- rate - the audio's sample rate (e.g., 44100 samples per second)

- volume - the initial volume to use (e.g., 1.0)

The choices made for channels and rate are significant, because they determine the frame size you must use when passing data to mozWriteAudio().

The mozWriteAudio() method can be called after mozSetup(). It allows a frame of audio (or multiple frames, but whole frames) to be written directly from script. It takes two arguments:

- count - the number of elements in this frame (e.g., 4096)

- valueArray - an array of floats, which represent a complete frame of audio (or multiple frames, but whole frames).

Both mozWriteAudio() and mozSetup() will throw exceptions if called out of order, or if audio frame sizes do not match.
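One way to prepare data for writing is to build the array in a helper that always emits one value per channel for each sample position. The sketch below is only an illustration: makeSineBuffer is a hypothetical name, and it assumes that written data uses the same interleaved channel layout described for mozFrameBuffer, which this document does not state explicitly.

```javascript
// Hypothetical helper: build an interleaved sine-wave buffer.
// Assumption: channels are interleaved, e.g. [left, right, left, right, ...].
function makeSineBuffer(frequency, sampleRate, channels, sampleCount) {
  var buffer = new Array(sampleCount * channels);
  for (var i = 0; i < sampleCount; i++) {
    var value = Math.sin(2 * Math.PI * frequency * i / sampleRate);
    for (var c = 0; c < channels; c++) {
      buffer[i * channels + c] = value; // same signal on every channel
    }
  }
  return buffer;
}

// In the experimental build, the result might be written like this:
//   audioOutput.mozSetup(2, 44100, 1);
//   var buffer = makeSineBuffer(440, 44100, 2, 2048);
//   audioOutput.mozWriteAudio(buffer.length, buffer);
```

The returned array always has a length that is a multiple of the channel count, matching the channels value passed to mozSetup().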

Additional Resources

A series of blog posts document the evolution and implementation of this API: http://vocamus.net/dave/?cat=25. Another overview by Al MacDonald is available here.

Obtaining Code and Builds

A patch is available in the bug, if you would like to experiment with this API. We have also created builds you can download and run locally:

NOTE: the API and implementation are changing rapidly. We aren't able to post builds as quickly as we'd like, but will put them here as changes mature.

- Mac OS X 10.6

- Mac OS X 10.5

- Windows 32-bit

- Linux 32-bit

- Linux 32-bit - Build 11e

- Linux 32-bit - Build 12 (Uses new WebGL Float Arrays. Examples need to be updated.)

A version of Firefox combining Multi-Touch screen input from Felipe Gomes and audio data access from David Humphrey can be downloaded here.

JavaScript Audio Libraries

- We have started work on a JavaScript library to make building audio web apps easier. Details are here.

- dynamicaudio.js - An interface for writing audio with a Flash fallback for older browsers.

Working Audio Data Demos

A number of working demos have been created, including:

- FFT visualization (calculated with js)

- FFT visualization (calculated with C++ - mozSpectrum)

- http://bocoup.com/core/code/firefox-fft/audio-f1lt3r.html (video here)

- http://www.storiesinflight.com/jsfft/visualizer/index.html (Demo by Thomas Sturm)

- http://blog.nihilogic.dk/2010/04/html5-audio-visualizations.html (Demo and API by Jacob Seidelin -- video here)

- http://ondras.zarovi.cz/demos/audio/

- Beat Detection (also showing use of WebGL for 3D visualizations)

- Visualizing sound using the video element

- Writing Audio from JavaScript, Digital Signal Processing

- Simple Tone Generator http://mavra.perilith.com/~luser/test3.html

- Playing Scales http://bocoup.com/core/code/firefox-audio/html-sings/audio-out-music-gen-f1lt3r.html (video here)

- Square Wave Generation http://weare.buildingsky.net/processing/dsp.js/examples/squarewave.html

- Random Noise Generation http://weare.buildingsky.net/processing/dsp.js/examples/nowave.html

- JS Multi-Oscillator Synthesizer http://weare.buildingsky.net/processing/dsp.js/examples/synthesizer.html (video here)

- JS IIR Filter http://weare.buildingsky.net/processing/dsp.js/examples/filter.html (video here)

- Csound shaker instrument ported to JavaScript via Processing.js http://scotland.proximity.on.ca/dxr/tmp/audio/shaker/

- API Example: Inverted Waveform Cancellation

- API Example: Stereo Splitting and Panning

- API Example: Mid-Side Microphone Decoder

- API Example: Ambient Extraction Mixer

- Biquad filter http://www.ricardmarxer.com/audioapi/biquad/ (demo by Ricard Marxer)

- Interactive Audio Application, Bloom http://code.bocoup.com/bloop/color/bloop.html (video here and here)