Answer questions

Background

This skill has come up repeatedly as being a key metric of a quality web developer. In some situations, such as the 2010 SXSW session for the P2PU School of Webcraft, people who emerged as particularly skilled in helping other people achieve their goals by being both proficient and timely in their answers gained special recognition from the community.

Good at answering other people's questions

Breaking down this skill into component parts, we get:

- Response time (or timeliness) – working habits, etc.

- Completeness – understanding the audience.

- Clarity – writing skill.

- Accuracy – knowledge.

- Tone – empathy and communities of practice.

- Others?

Each of these can be assessed with some suite of metrics and some level of analysis.

In turn:

- Response time:

- This metric provides evidence of the speed with which someone is able to understand, consider, and then respond to queries related to web development. It can also provide some insight into the working habits of a web developer. It may also be a surrogate measure of some form of empathy for other people, or at least an orientation to helping others succeed even if the personal payoff is indirect.

- This metric requires some knowledge of how the person plans to operate as a resource. For example, some people will simply be plugged into a chat room or equivalent and will glance at it on occasion (or get an alert) during the course of their regular work, taking a few moments when a relevant question arises to pause and give an answer. Other people may choose to check in on questions (e.g., via email) on some regular basis, answering all of the questions at that time. There are other ways of organizing this effort as well. The point is that you cannot simply compare two people who have different ways of organizing their time. If someone is seeking to prove that s/he excels at answering questions in a timely manner, "timeliness" has to be judged against the expectations that person has set. For one person, that could mean minutes of lag time, whereas for another person it could mean within 24 hours, or longer.

- Given the above, this is a good example of a metric that requires some sort of opt-in process if we are to be able to score it meaningfully. I am imagining a sort of contract with the following fields:

- Name or ID

- Confirmation of desire to be evaluated for timeliness (check box).

- Brief description of how they plan to respond to questions:

- As they arrive (ASAP).

- At some regular interval (less than 24 hours).

- Daily.

- Weekly.

- How will you handle questions you cannot or will not answer?

- Delegate appropriately.

- Flag for others (shared question pool).

- Any other relevant info?

- This metric, once the information above has been provided, is fully automated. We simply need to generate a datasheet each day (or longer interval, if appropriate) which captures the lag time between the posting of the question and the posting of the answer. For ASAP folks, the actual time intervals will serve as the scores. For people answering questions on some regular interval, the scores can be converted to 0s and 1s according to whether each question was actually answered in the specified time interval.

- Based on conversations on 8 July 2010 - The simplest metric here is just the total number of comments, which can be captured for everyone and displayed on their portfolio page. This will happen automatically. For participants who want more refined data, they will need to provide the P2PU system with some additional information about themselves and how they would like to be evaluated (see above). Once those data have been provided, the system should be able to manage the rest of it automatically, again publishing the data on the individual's portfolio page. The individual can choose to display those data publicly or not.
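To make the opt-in contract and the daily datasheet more concrete, here is a minimal sketch of how the scoring rules above could be computed. All names (`ResponseMode`, `OptInContract`, `QuestionRow`, `timeliness_scores`) are hypothetical and do not correspond to any existing P2PU code. The sketch assumes that the datasheet holds a posting time and an answer time for each question. It also assumes that "at some regular interval" is stored as an explicit duration. The delegation and flagging options from the contract are not modeled.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import List, Optional


class ResponseMode(Enum):
    """Response options from the opt-in contract (hypothetical encoding)."""
    ASAP = "asap"
    INTERVAL = "interval"  # some regular interval under 24 hours
    DAILY = "daily"
    WEEKLY = "weekly"


@dataclass
class OptInContract:
    participant_id: str
    evaluate_timeliness: bool             # the opt-in check box
    mode: ResponseMode
    interval: Optional[timedelta] = None  # required only for INTERVAL mode

    def __post_init__(self) -> None:
        if self.mode is ResponseMode.INTERVAL and self.interval is None:
            raise ValueError("INTERVAL mode needs an explicit interval")

    def window(self) -> Optional[timedelta]:
        """Promised response window; None means 'as they arrive' (ASAP)."""
        if self.mode is ResponseMode.ASAP:
            return None
        if self.mode is ResponseMode.DAILY:
            return timedelta(days=1)
        if self.mode is ResponseMode.WEEKLY:
            return timedelta(weeks=1)
        return self.interval


@dataclass
class QuestionRow:
    """One datasheet row: when a question was posted and when it was answered."""
    asked_at: datetime
    answered_at: Optional[datetime] = None  # None if still unanswered


def timeliness_scores(contract: OptInContract, rows: List[QuestionRow]) -> list:
    if not contract.evaluate_timeliness:
        return []  # participant did not opt in, so nothing is scored
    window = contract.window()
    scores = []
    for row in rows:
        if window is None:
            # ASAP: the raw lag in minutes is the score (None if unanswered).
            if row.answered_at is None:
                scores.append(None)
            else:
                lag = row.answered_at - row.asked_at
                scores.append(lag.total_seconds() / 60)
        else:
            # Interval-based: 1 if answered within the promised window, else 0.
            on_time = (row.answered_at is not None
                       and row.answered_at - row.asked_at <= window)
            scores.append(1 if on_time else 0)
    return scores


t0 = datetime(2010, 7, 8, 9, 0)
rows = [
    QuestionRow(t0, t0 + timedelta(minutes=30)),
    QuestionRow(t0, t0 + timedelta(hours=30)),
    QuestionRow(t0),  # never answered
]
print(timeliness_scores(OptInContract("p1", True, ResponseMode.DAILY), rows))  # [1, 0, 0]
print(timeliness_scores(OptInContract("p2", True, ResponseMode.ASAP), rows))   # [30.0, 1800.0, None]
```

Treating an unanswered question as a 0 for interval-based participants, and as a missing value for ASAP participants, is a design choice made for this sketch. The community might prefer a different rule.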

- Completeness:

- This metric provides evidence that the person answering questions sufficiently understands what the questioner might need to act upon the answer to good effect. For example, an expert with a question would probably be satisfied with a high-level and targeted response where most of the intermediate steps were omitted. In contrast, a novice might just get more lost and confused without fairly explicit directions that start from the beginning.

- One way of gathering semi-automated information on this metric would be to see how many follow-up questions are required following an initial inquiry. Presumably the more follow-up questions there are, the less complete was the original answer. There are a number of possible sources of error in this method though, since the need for follow-up questions is just as likely to be the fault of the original questioner.

- A better way to score this metric would be to just ask the original questioner if the answer(s) received were complete. As part of the opt-in contract (see "response time," above), the participant can simply indicate that they would like their answers to be evaluated in this manner.

- Ideally, the question/answer system is open to the world, which makes it possible for anyone to provide feedback on the responses. See section 3 below for how this would work.

- Clarity:

- This metric is illustrative of the writing skill of the participant. Even if an answer is incomplete, or not timely, it is possible for it to be exceptionally clear. Clarity of writing reflects both general writing skill and an understanding of how to relate to a specific audience through appropriate word choices (avoidance or use of lingo, as appropriate) and the structure of the response(s).

- Like "completeness," above, this metric is probably best scored by direct feedback from the original questioner as well as the community at large. See section 3 below for the suggested implementation.

- Accuracy:

- This metric reflects the core knowledge of the participant. Accuracy can involve both the avoidance of overt errors and the identification of "best" solutions (where there might be more than one way to solve a problem). For example, there might be a 12-step method for solving a problem, every step of which is technically accurate, but it would be better to get the 2-step method instead if it exists.

- This metric is probably best evaluated by practitioners in the field. Clearly, if the wrong advice is given, we should be able to capture that information. Getting something wrong isn't necessarily horrible as long as the participant learns from the process and improves over time.

- In addition to the general feedback schema outlined in section 3, below, this metric would benefit from a self-appointed panel of peer evaluators who meet basic expertise criteria and are interested in fostering the educational development of web developers. This panel, loosely defined, could analyze some subset of "beefy" answers for their accuracy, assigning scores according to a published rubric. I am imagining some sort of threshold rubric, whereby people fall into certain categories of expertise and capability according to their overall scores. This avoids the need to try to be perfect and allows the broader community to determine reasonable accuracy levels for their peers. The panel could be recruited and retained via a system like {paid online mini-tasks}. Over time, I imagine that this expert pool will be part of the P2PU alumni community and they will see it as part of their community service and ongoing engagement to be involved in this manner. We have similar ideas for evaluating portfolio items and such, and there are possible mash-ups with Aardvark, CPR, and other social networking and assessment tools.
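The threshold rubric imagined for the accuracy panel could work roughly as follows. The sketch below is hypothetical. It assumes that each panel reviewer scores a "beefy" answer from 0 to 4. The category names and cut-offs are placeholders, not published P2PU values. The community would set and publish the real ones.

```python
from statistics import mean
from typing import List, Optional

# Hypothetical rubric: each panel reviewer scores an answer from 0 (wrong) to
# 4 (accurate and the most direct solution). The cut-offs below are
# placeholders that the community would set and publish.
THRESHOLDS = [
    (3.5, "expert"),
    (2.5, "proficient"),
    (1.5, "developing"),
    (0.0, "novice"),
]


def accuracy_category(panel_scores: List[float]) -> Optional[str]:
    """Place a participant in a category based on the mean of the panel's scores."""
    if not panel_scores:
        return None  # no "beefy" answers reviewed yet
    average = mean(panel_scores)
    for lower_bound, label in THRESHOLDS:
        if average >= lower_bound:
            return label
    return THRESHOLDS[-1][1]  # anything below the lowest cut-off


print(accuracy_category([4, 3, 4]))  # expert
print(accuracy_category([3, 2, 3]))  # proficient
```

Using coarse bands rather than a single precise score reflects the point above: nobody has to be perfect, and the community decides where "reasonable accuracy" begins.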

- Tone:

- This metric captures an underappreciated aspect of being a good communicator, especially via inherently atonal modes of communication such as email. Often the very best answers will be derailed by their inappropriate (usually snotty and elitist) tone. Developing a sense of nuance around tone is a key skill.

- Tone is a subjective measure, so just as with "completeness" and "clarity," it is best measured by feedback from the original questioner as well as the community at large. See section 3 below for a suggested implementation.

We also considered things in reverse: given that someone has answered a question, what can you do with that answer?

- You can analyze it as an academic object (e.g., use a rubric to rate the answer for the qualities above).

- See section 3 below for one idea of what a rubric would entail. The same basic rubric should apply to both crowd-sourced and expert-based evaluations. I suspect that we may actually want to have both methods operating at the same time, both so that each can validate the other and to give participants the opportunity to obtain the competency metrics that are of most value to them. Since it is more expensive (in time and/or organizational effort) to bring experts (individually or as part of a panel) into the mix, this might be a paid option.

- You can simply ask the questioner if the answer was useful.

- See above and below (section 3) for more on this.

- We might also consider recruiting people to ask questions of a particular type and difficulty as a way of ensuring that P2PU participants who are seeking deeper levels of skills assessment actually get the materials and engagement they need. Thus far, P2PU has been mostly oriented around learners learning from each other and building knowledge as peers, but there is also enormous opportunity for people who mostly identify as teachers to loosely collaborate around teaching tasks which would otherwise be burdensome to do alone. Something to consider.

- Others?

One possible way to gather this information at scale is to turn on commenting features for the comments themselves.

Think of the annotations on Amazon.com reviews and similar sites. The key would be to implement comments as a staged exercise, subject to our beliefs about what is of general interest as well as the level to which participants have opted in to be reviewed.

- The first stage would be one or more commenting features which are available by default. For example: Is this answer helpful? (Y/N)

- This question is very easy to answer, and the aggregate of people's responses easily allows sorting of comments/answers according to helpfulness. That has immediate payoff for the users of P2PU who are browsing comments or answers. There may be other lightweight community-oriented queries which would be worth including as well, such as a star-rating system.

- The resulting data can be rendered in raw form (e.g., % of total answers which were considered mostly helpful) or in comparative form (e.g., relative rank of helpfulness as compared to others answering questions, subject to some baseline comparability criteria).

- The second stage would remain hidden until someone responds to the first-stage question, and even then it would remain hidden unless the person who wrote the answer/comment wanted to have the more detailed evaluations performed on his/her work. For example, if I have opted in to being evaluated on all of the metrics described above, the P2PU system would attach a marker to any answers I gave (presumably I have to log in to post an answer or comment to a query). This mark could be either hidden or visible, depending on how we think it might motivate behavior. A visible mark would signal to people that the person is seeking additional feedback and could motivate them to participate. But a hidden mark would make it more likely that people are engaging initially because they were motivated by the quality of the question or the subject matter, and their feedback might therefore be more authentic. Either way is likely to be fine, and perhaps that is a choice we can give as part of the opt-in form.

- Based on conversations held 8 July 2010 - We decided that it makes more sense for all of these commenting fields to be available by default, but then route the data to an anonymized aggregate as well as each person's individual portfolio page. The person can then choose to make those data public or not. See section on technical details below for further info.

- Depending on which categories I chose to be evaluated on, a new dialog (or however we want to code it) would pop up once someone rates my answer. This new dialog might ask: How was this answer useful (or how not)? Please consider the following specific categories (from above): completeness, clarity, accuracy, tone, etc.

- In each case, we will probably want to use a Likert scale (e.g., 1. Very clear, 2. Clear enough, 3. Somewhat clear, 4. Not so clear, 5. Totally confusing). If possible, we may also want to provide a text box for additional comments. It is hard to systematically deal with freeform comments, but the feedback is likely to be quite valuable to the person being evaluated and is in keeping with P2PU's general educational mission.

- The people making these ratings are themselves subject to qualification, if we want. For example, some members of the P2PU community might indicate specific expertise or interest in the broader educational mission and claim certain status as a result. We would need some method of independently verifying such claims, at least to start. Over time, I think our goal should be for the alumni community to be sufficiently large and well described such that we already know everything we need to know about the different qualifications and claims people make about themselves. This feeds directly into an Aardvark-like system for connecting participants with appropriate peers for evaluation and feedback.
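The first-stage "Is this answer helpful? (Y/N)" query is simple enough to sketch. The code below is a hypothetical in-memory sketch; the class and method names are invented for illustration. It records at most one vote per person per answer and sorts answers by the share of "Y" votes. It also reports the raw form described above: the percentage of a person's rated answers that were considered mostly helpful. "Mostly helpful" is assumed here to mean that more than half of the voters said Y. The comparative (ranked) form is left out.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional


class HelpfulnessVotes:
    """First-stage 'Is this answer helpful? (Y/N)' tally, one vote per person per answer."""

    def __init__(self) -> None:
        # answer_id -> {voter_id: True for Y, False for N}
        self._ballots: Dict[str, Dict[str, bool]] = defaultdict(dict)

    def vote(self, answer_id: str, voter_id: str, helpful: bool) -> bool:
        ballots = self._ballots[answer_id]
        if voter_id in ballots:
            return False  # already voted on this answer; ignore the repeat
        ballots[voter_id] = helpful
        return True

    def share_helpful(self, answer_id: str) -> Optional[float]:
        ballots = self._ballots.get(answer_id)
        if not ballots:
            return None  # no votes yet
        return sum(ballots.values()) / len(ballots)

    def sorted_by_helpfulness(self, answer_ids: Iterable[str]) -> List[str]:
        def key(answer_id: str):
            share = self.share_helpful(answer_id)
            # Rated answers first (highest share first); unrated answers last.
            return (share is None, -(share or 0.0))
        return sorted(answer_ids, key=key)

    def percent_mostly_helpful(self, answer_ids: Iterable[str]) -> Optional[float]:
        """Raw form: % of a person's rated answers where most voters said Y."""
        shares = [s for s in map(self.share_helpful, answer_ids) if s is not None]
        if not shares:
            return None
        return 100 * sum(s > 0.5 for s in shares) / len(shares)


votes = HelpfulnessVotes()
votes.vote("a1", "alice", True)
votes.vote("a1", "bob", False)
votes.vote("a2", "alice", True)
print(votes.vote("a1", "alice", False))                   # False (second vote rejected)
print(votes.sorted_by_helpfulness(["a1", "a2", "a3"]))   # ['a2', 'a1', 'a3']
print(votes.percent_mostly_helpful(["a1", "a2", "a3"]))  # 50.0
```

In this sketch, a repeat vote is rejected rather than overwriting the earlier one. Letting people change their vote would be an equally reasonable policy.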

Some mock-ups to consider

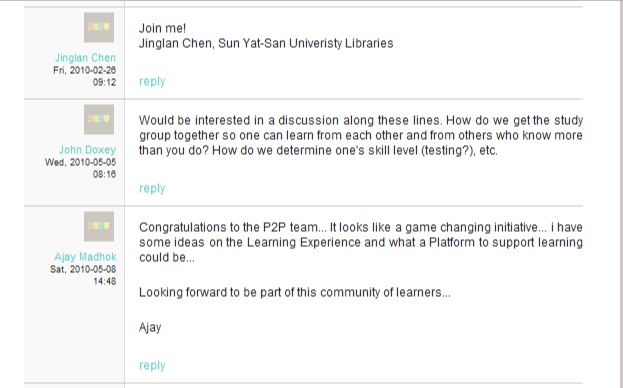

The first image below is a simple screenshot of the current P2PU comments section. It is taken from the P2PU lounge, so the comments are nothing special, but ignore the text of the comments for the sake of the mock-up.

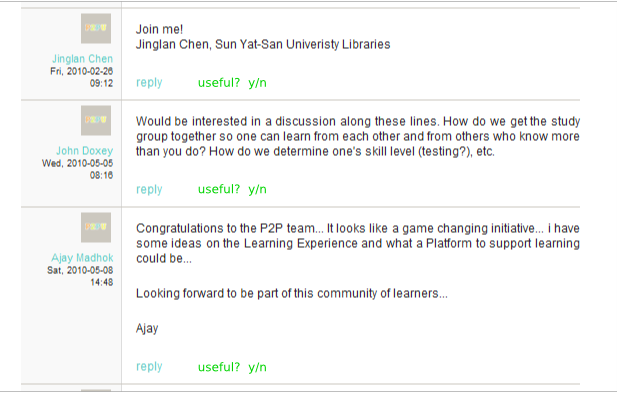

The second image below shows the same screenshot but with the community feedback feature enabled. In this case, it is a simple "Useful? (y/n)" query. Presumably people will be able to simply click on the "Y" or the "N" and the system would track who chose what and ensure that people do not vote twice on the same comment.

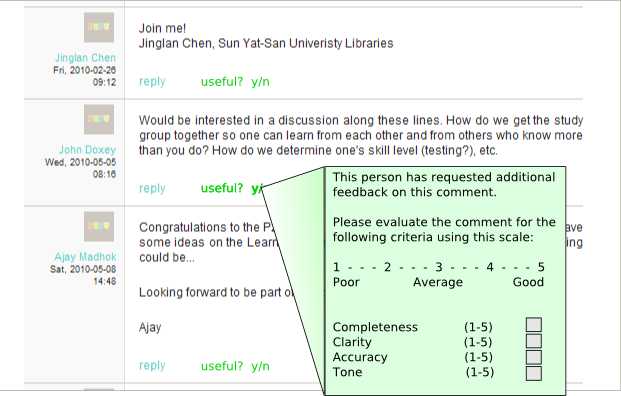

The third image below shows the optional pop-up dialog that asks for additional information. As detailed in Section 3, above, this dialog would be hidden unless two things were true: 1) the person who wrote the original comment opted in to having it appear, and 2) someone was motivated to indicate whether the comment is useful or not. The dialog itself could be dynamic in the sense that it may only contain those specific follow-up queries and metrics which are of interest to the commenter. The terms would all need to be links to explanatory pages, and the scale may need to be fleshed out a bit. But in essence, this is all that would be necessary to have a system that can capture peer feedback at scale for various attributes of question-answering abilities.
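The logic behind the pop-up dialog can be sketched in a few lines. Again, every name here is hypothetical. The sketch assumes the four categories named earlier (completeness, clarity, accuracy, tone) and the 1 to 5 Likert scale, with the clarity wording shown. Each author's preferences default to all categories, which follows the 8 July 2010 decision to have the fields on by default. An author can narrow the list, and the dialog then asks only about those categories. In either case, the dialog appears only after the rater has answered the first-stage "Useful? (y/n)" query.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, List

CATEGORIES = ("completeness", "clarity", "accuracy", "tone")

# Five-point Likert scale from the text, shown here with the clarity wording.
CLARITY_LABELS = {
    1: "Very clear",
    2: "Clear enough",
    3: "Somewhat clear",
    4: "Not so clear",
    5: "Totally confusing",
}


@dataclass
class AuthorPrefs:
    # Categories the answer's author wants detailed feedback on. Defaults to all
    # of them, in line with the 8 July 2010 decision to have fields on by default.
    categories: FrozenSet[str] = frozenset(CATEGORIES)


def followup_questions(prefs: AuthorPrefs, rated_first_stage: bool) -> List[str]:
    """Categories for the second-stage dialog; an empty list means no dialog."""
    if not rated_first_stage:
        return []  # stays hidden until the rater answers 'Useful? (y/n)'
    return [c for c in CATEGORIES if c in prefs.categories]


@dataclass
class DetailedRating:
    scores: Dict[str, int]  # category -> Likert value, 1 (best) to 5 (worst)
    comment: str = ""       # optional free-form text box


def validate(prefs: AuthorPrefs, rating: DetailedRating) -> None:
    """Reject ratings for categories the author did not ask about, or off-scale values."""
    for category, value in rating.scores.items():
        if category not in prefs.categories:
            raise ValueError(f"author did not ask for feedback on {category!r}")
        if not (isinstance(value, int) and 1 <= value <= 5):
            raise ValueError(f"{category!r} score must be an integer from 1 to 5")


prefs = AuthorPrefs(frozenset({"clarity", "tone"}))
print(followup_questions(prefs, rated_first_stage=False))  # []
print(followup_questions(prefs, rated_first_stage=True))   # ['clarity', 'tone']
print(CLARITY_LABELS[2])                                   # Clear enough
validate(prefs, DetailedRating({"clarity": 2}, "Step 3 lost me."))
```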

Further technical details

This version - 12 July 2010

- The default for answer commentary should be "on."

- The data should flow to two places:

- The commenter's portfolio page, where the data are only visible to that person when they are logged in and to no one else (default).

- An aggregated P2PU data view, which can show standard stats (number of comments; mean, median, mode, and range of the scores for each comment characteristic; etc.).

- The portfolio data can be made public by choice. Only publicly rendered data will benefit from the networked characteristics of the system. For example, for any given answer, there will be some number of comments/ratings of that answer by the community. Everyone can see the scores (anonymized and aggregated), but people can also view individual scores and comments only for those commenters who have opted to have their contributions be publicly viewable.

- The benefit of making comments publicly viewable (besides enhancing the value of the comments for the ecosystem) is that it allows people to publicly display competency scores for their answers and the comments on their answers.
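The data flow described in this section can be sketched as follows. The record layout and function names are hypothetical. The sketch shows the standard stats for one comment characteristic: count, mean, median, mode, and range. It also shows the rule that any visitor sees the anonymized aggregate for an answer, but sees individual scores and comments only from raters who opted to make their contributions public. Portfolio data are visible only to the logged-in owner unless the owner makes them public.

```python
from dataclasses import dataclass
from statistics import mean, median, multimode
from typing import Dict, List, Optional


@dataclass
class Rating:
    answer_id: str
    rater_id: str
    category: str          # e.g. "clarity"
    score: int             # Likert value, 1 to 5
    text: str = ""
    rater_public: bool = False  # rater opted to make contributions publicly viewable


def summarize(values: List[int]) -> Optional[Dict[str, object]]:
    """Standard stats for one characteristic: count, mean, median, mode(s), range."""
    if not values:
        return None
    return {
        "n": len(values),
        "mean": mean(values),
        "median": median(values),
        "mode": multimode(values),  # every most-common value, in first-seen order
        "range": (min(values), max(values)),
    }


def answer_view(ratings: List[Rating], answer_id: str, category: str) -> dict:
    """What any visitor sees for one answer: anonymized aggregate plus public ratings."""
    rows = [r for r in ratings if r.answer_id == answer_id and r.category == category]
    return {
        "aggregate": summarize([r.score for r in rows]),
        "individual": [(r.rater_id, r.score, r.text) for r in rows if r.rater_public],
    }


def can_view_portfolio(owner_id: str, viewer_id: Optional[str], owner_public: bool) -> bool:
    """Portfolio data are private to the logged-in owner unless made public."""
    return owner_public or viewer_id == owner_id


ratings = [
    Rating("a1", "alice", "clarity", 1, "Very easy to follow", rater_public=True),
    Rating("a1", "bob", "clarity", 2),
    Rating("a1", "carol", "clarity", 2),
]
view = answer_view(ratings, "a1", "clarity")
print(view["aggregate"]["median"], view["aggregate"]["mode"])  # 2 [2]
print(view["individual"])  # [('alice', 1, 'Very easy to follow')]
print(can_view_portfolio("dana", None, owner_public=False))  # False
```

`statistics.multimode` (Python 3.8+) is used so that ties in the mode are reported rather than silently broken.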