Necko/MobileCache

How we're using this wiki page

We're using this wiki page to organize and prioritize our necko work on mobile HTTP disk caching. We're keeping track of both TODO lists, and general issues/theories about what's going on with the cache and how to improve it.

Feel free to add comments in this wiki: put your name on them so we can follow up. I'll try to scrub obsolete comments once they're resolved (but feel free to do that yourself, too): jduell.

Also see Cache Architecture.

Theories about why the mobile HTTP disk cache is slow

We have a number of theories about why the cache is slow on mobile: (feel free to add more theories)

- Cache misses are expensive (because we hit the disk to check before launching network request).

- We use too many files on disk, and would be better off with a few files (or just one)

- We should be using mmap instead of read() and write()

Collecting data to figure out why HTTP disk cache is slow on fennec

So far we only have aggregate data (tp4 runs) that shows the cache is often a perf loss on mobile - see Necko/MobileCache#Effect_of_Cache_Size.

- bjarne: note that this test is limited in that it cyclically loads a limited pageset, and it keeps the memory-cache at 1MB. Effectively it says "When loading a relatively small pageset over-and-over again on mobile, a 1MB memory-cache is often more efficient than a disk-cache." Given that the cache-service prefers using a disk-cache over a memory-cache, and the memory-cache uses a more sophisticated replacement-algorithm than the disk-cache (see bug 648605 and bug 693255), this is not surprising. To be more conclusive we need results from running this test with the memory-cache turned off.

We have run some tests with a slightly modified tp4m to better test cache performance. See Necko/MobileCache/Tp4m for more details.

We need much more detailed information about what's going on. So we need to write and run some microbenchmarks that will test our current theories and quantify what (if any) performance cost they may have. For further details please visit Necko/MobileCache/MicroBenchmarks.

At some point we're also going to want to have not just microbenchmarks, but also tests that run against a "realistic browsing history", so we can see if various code improvements actually help things, and by how much. We should start building that infrastructure now.

- jduell: I propose we write this as an xpcshell script that can take a history of channel loads and re-run them, including dependency information (i.e. "don't load channel X,Y,Z until channel A completes"). I think Byron wrote the beginnings of such a script when he was here--I'll look for it.
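As a rough illustration of the "don't load channel X,Y,Z until channel A completes" idea, here is a minimal Python sketch that orders a recorded history so every load comes after the loads it depends on. The recording format (a dict mapping each URL to the URLs that must finish first) and the name `replay_order` are assumptions made for this example; the actual script would be xpcshell JavaScript and would open real channels where the comment indicates.

```python
from collections import deque


def replay_order(loads):
    """Return URLs in an order that respects the recorded dependencies.

    `loads` maps each URL to the URLs that must complete before it starts.
    This format is hypothetical, not an existing necko recording format.
    """
    pending = {url: set(deps) for url, deps in loads.items()}
    dependents = {}
    for url, deps in pending.items():
        for dep in deps:
            dependents.setdefault(dep, []).append(url)

    ready = deque(url for url, deps in pending.items() if not deps)
    order = []
    while ready:
        url = ready.popleft()
        order.append(url)  # a real replay script would open the channel here
        for child in dependents.get(url, []):
            pending[child].discard(url)
            if not pending[child]:
                ready.append(child)

    if len(order) != len(loads):
        raise ValueError("dependency cycle or unknown dependency in history")
    return order


history = {
    "http://example.com/": [],
    "http://example.com/style.css": ["http://example.com/"],
    "http://example.com/logo.png": ["http://example.com/"],
    "http://example.com/font.woff": ["http://example.com/style.css"],
}

if __name__ == "__main__":
    for url in replay_order(history):
        print(url)
```

This only computes a serial order. A replay that models parallel subloads would instead start every "ready" channel at once and release its dependents from the completion callback.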

TODO list

Right now this TODO list is mostly focused on getting more info about what's going on, since it'll be hard to make progress until we know why we're slow.

- Bjarne/Michal: continue work on scripts to get performance data (see Necko/MobileCache/MicroBenchmarks)

- Figure out a way to turn disk caching on/off for xpcshell tests (I believe an env variable already exists: $NECKO_DEV_ENABLE_DISK_CACHE). If need be, revisit bug 584283.

- If a cache miss takes longer on mobile when the disk cache is on, presumably the cause is the disk I/O we do to check for the entry, which delays the network request. Measure how much of the delay this accounts for.

- mobile-team: run the above tests and give us the perf numbers for 3G and wifi latencies/bandwidth.

- Bjarne/Michal: Create a plugin that records browsing history, so we can get some set of users (mobile/necko teams, others) to use it and have a set of "representative" (or at least realistic) browsing histories.

- record dependencies (which channel causes other channels to be loaded), so we can write xpcshell tests that simulate document load and subchannels.

- just a matter of recording the referrer? And/or marking if something is a document load.

- Bjarne/Michal: Put up a wiki page with an outline of how the cache works (something like the sketch we drew during the All-Hands).

- What's in memory, what's on disk, what's the format, what gets touched when.

- Start with channel load, and cache hit/miss cases (including storing data into cache)

- What operations we perform on these data structures (cache hit/miss, eviction, shrink/grow cache, etc.) and their performance (both big-O, and file access pattern).

- Someone (mobile team?): Write a microbenchmark that measures effect of having large versus small numbers of files in a directory tree on system calls like open/read/write.

- ideally measure both the POSIX read()/write() calls and the NSPR versions we actually use.

- Someone (mobile team?): Write a microbenchmark that compares I/O performance for mmap vs read/write calls.

- I suspect it'd be a lot of work to switch our cache to using mmap, so we'd need to have concrete data to motivate that.

- Ideally we'd measure perf across various read/write sizes and access patterns (in particular: does mmap or read perform better for serial accesses?)
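A possible starting point for the mmap versus read() microbenchmark is sketched below in Python. It times sequential reads of one temporary file at several chunk sizes. The file size, chunk sizes, and function names are arbitrary choices for illustration. The file will almost certainly be served from the OS page cache, so this measures per-call overhead rather than device latency; a real run on a phone would also need a cold-cache variant and the NSPR calls.

```python
import mmap
import os
import tempfile
import time


def read_with_read(path, chunk):
    """Sequentially read the file using unbuffered read() calls."""
    total = 0
    with open(path, "rb", buffering=0) as f:
        while True:
            data = f.read(chunk)
            if not data:
                break
            total += len(data)
    return total


def read_with_mmap(path, chunk):
    """Sequentially copy the same bytes out of a read-only mapping."""
    total = 0
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as m:
            for offset in range(0, len(m), chunk):
                total += len(m[offset:offset + chunk])
    return total


def best_time(fn, path, chunk, repeats=5):
    """Best-of-N wall-clock time for one access method."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        fn(path, chunk)
        best = min(best, time.perf_counter() - start)
    return best


def run(size=4 * 1024 * 1024, chunks=(512, 4096, 65536)):
    """Return {chunk_size: (read_seconds, mmap_seconds)}."""
    fd, path = tempfile.mkstemp()
    try:
        with os.fdopen(fd, "wb") as f:
            f.write(os.urandom(size))
        return {
            chunk: (best_time(read_with_read, path, chunk),
                    best_time(read_with_mmap, path, chunk))
            for chunk in chunks
        }
    finally:
        os.remove(path)


if __name__ == "__main__":
    for chunk, (t_read, t_mmap) in run().items():
        print(f"chunk={chunk:6d}  read={t_read:.6f}s  mmap={t_mmap:.6f}s")
```

Random-access patterns could be added by shuffling the list of offsets before the loop in each reader.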

Performance improvements

We've got ideas about improving the cache. None of them is validated by data yet. I'm listing them so we keep track of them (and what we'd need to have to validate that they're worth doing).

Some of these are incremental changes; some of them would require major changes, possibly including a re-write of the cache and its current data structures.

- If I/O hit to check disk for cache hit is too high, can we page in channels (or at least meta-data for them) for a domain (and any sub-domains we suspect from history that they might load, ala DNS subdomain prefetching)? Page into memory cache with some "evict me first" flag? The main idea here is to possibly skip IO for cache check for first load of www.cnn.com, but by the time its index.html is returned, we have an in-memory structure that lets us check for cache hits of page item subloads (images, css, etc) w/o hitting the disk.

- If disk latency is a big problem but bandwidth is ok, only store larger items in disk cache, and smaller ones in RAM cache?

- Only cache items that don't require revalidation? (on theory that with a high latency network, most revalidates don't save much time).

- Various ideas to improve hit rate of cache (both memory and disk caches) given small cache size

- Compress currently uncompressed items (bug 648429)

- Use a smarter scheme than plain LRU for disk cache (bug 648605)

- Only store certain file types

- See if lowering max cache item size for memory cache improves perf.

- Store cache using a single (mmapped?) file or small number of files

- simpler version of this might be to have BLOCK files for larger file sizes (bug 593198)
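To make the first idea in this list (paging in per-domain metadata so subloads can be checked without touching the disk) a bit more concrete, here is a simplified Python sketch. It is synchronous, whereas the idea above would page the metadata in while the first network request is in flight. `DomainIndex` and the `load_host_keys` callback are hypothetical names, not part of the existing cache code.

```python
from urllib.parse import urlsplit


class DomainIndex:
    """In-memory set of cached URLs, paged in one host at a time."""

    def __init__(self, load_host_keys):
        # load_host_keys(host) -> iterable of cached URLs for that host.
        # Hypothetical callback standing in for the single disk read per host.
        self._load_host_keys = load_host_keys
        self._hosts = {}
        self.disk_reads = 0

    def prefetch(self, host):
        """Page in the key set for a host unless it is already in memory."""
        if host not in self._hosts:
            self.disk_reads += 1
            self._hosts[host] = set(self._load_host_keys(host))

    def might_be_cached(self, url):
        """Answer hit/miss from memory; only the first lookup per host reads disk."""
        host = urlsplit(url).hostname
        self.prefetch(host)
        return url in self._hosts[host]


# Example with a fake on-disk map: three lookups, one simulated disk read.
on_disk = {"www.cnn.com": ["http://www.cnn.com/", "http://www.cnn.com/logo.png"]}
index = DomainIndex(lambda host: on_disk.get(host, []))
index.might_be_cached("http://www.cnn.com/")
index.might_be_cached("http://www.cnn.com/logo.png")
index.might_be_cached("http://www.cnn.com/new.css")
```

The sketch leaves out the "evict me first" flag and sub-domain prediction; both would sit on top of the per-host dictionary.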

Questions for guidance

These questions are intended to guide us in our goal of being able to turn on the disk cache (in some form) on mobile. This is not necessarily an exhaustive list, but it's a starting point so we don't necessarily go off the rails with ideas that may be more complex than we absolutely need. Bigger, better improvements to the cache as a whole can (and hopefully will!) come after mobile has anything at all.

The questions that are in strikeout are no longer relevant. With a decent-sized cache, we no longer slow down Tp. This holds true on both internal and sdcard storage. There is, however, a difference in the win based on the filesystem (Samsung's RFS cuts our win by about 50% over other devices, though it's still a win).

- What happens if we just turn on existing cache (now that all I/O is on separate thread)?

- If this doesn’t slow down Tp, is there anything else preventing us from just turning it on and being done with the goal?

- How much space do we want the disk cache to grow to?

- What is it that is making us slow down Tp?

- Writing to cache?

- Because I/O tee is synchronous and eMMC is slow on writes?

- Because writing blocks readers from doing their own I/O?

- Can we move writing to its own separate thread?

- Would this work with the OS I/O scheduler (ie, would the OS not screw us anyway)?

- Does mmap help?

- Reading from cache?

- Is that a characteristic of eMMC?

- Are we just doing something stupid?

- Bad access pattern?

- Can this be solved using more block files?

- Does mmap help?

- Our algorithm?

- What are the pain points (high O notation) in our read/write/search/evict ops?

- Are any of these simple to fix resulting in a win?

- Can we change them w/o a full rewrite?

- Is there some modification to the eviction algorithm we can make? (LRU-SP?)

- Searching for cache entry before hitting net?

- How much time are we spending searching before we hit the net?

- Is this number wildly different from on HDD/SSD?

- If so, why?! This should be mostly in-memory work!

- Indicates that actual I/O is our problem, see above questions

- Questions about certain things that may be needed to answer the above

- Is OS-level I/O single threaded?

- Motivated by bug 687367

- This prevents any solution w/multiple I/O threads from working

- What are the perf characteristics of read/write/seek on eMMC/SDCard/HDD/SSD?

- Want these characteristics in similar situations to our cache

- R/W/S random in large file (simulate block files)

- R/W standalone files in possibly large directories

- Searching large directory for a file (simulate finding entry in separate file)

- What percentage of the time do we spend doing I/O versus in-memory cache operations

- HDD

- SSD

- eMMC

- SDCard

- Are there any statistically significant differences in these?

- Is there some threshold of device on which turning on the disk cache NEVER makes sense in its current form (or in the form we settle on for the first round)

- Devices w/crappy eMMC parts

- Devices w/crappy filesystems (samsung...)

- Do we get notifications before being force-closed (or at least before going into the background)?

- Feasible to write out cache map before force-closing so we don’t trash cache on startup?

- If we can’t write cache map, what is easiest way to not blow away cache?

- Journaling?

- Flush cache map on a timer and accept some inconsistencies from later page loads?

- What if the profile/cache is on sd card?

- How often are we on sd card instead of internal storage?

- Does this slow us way, way down?

- Should we just disable in this case?

- If we keep it enabled on sd card, what about security of cache data?

- Can we keep cache on internal storage no matter what?

- Have seen it’s a win on internal storage w/decent cache size (on a good fs)

- Bug 645848

- Will probably have to blacklist devices based on fs at runtime

- Smaller cache does not appear to be a win? (Likely too much churn in cache)

- Is Tp good enough for testing this? Bug 661900 indicates it isn't (Geoff Brown made some modifications to make it better)

- What size disk caches do other mobile browsers use?

- Android Browser - Appears to be 6MB according to the source code mirrored at https://github.com/android/platform_frameworks_base/blob/master/core/java/android/webkit/CacheManager.java#L60

- On a rooted Froyo phone, you can see the cache is stored at /dbdata/databases/com.android.browser/cache/webviewCache. Experiments confirm the 6MB capacity. Each entry is stored as received from the network, in its own file (there are no block files). The maximum entry size is about 2MB (larger files are not stored).

- Chrome Mobile (especially interesting given Chrome's similar disk cache implementation) - Can't find anything on this (yet), hopefully will get easier once ICS is released and we can (hopefully) see the config on a running mobile chrome.

- Opera Mobile (not the crappy "download pre-rendered pages" version) - 10000K according to opera:config (this is 1/2 what the desktop version has by default). Note that Opera also has a separate media cache which defaults to 10x the regular disk cache size.

- Safari Mobile (not a direct competitor, but maybe useful data point since it's a rather common mobile browser) - The internet says it doesn't have one at all

- Dolphin HD (another popular android browser) - The internet conflicts, but it appears to be either 20MB or 40MB

Mobile Team's Goals

Quickly restore webpages after fennec restarts

On Android, when Fennec is placed in the background, we have a high chance of being killed by the OS. This is so that foreground applications have more memory available, and it does improve system performance. For applications, this means that they need to save their state before they die. Currently, we do not do an adequate job of saving state, resulting in a pretty terrible experience when you 'switch back' to Fennec.

- When you say "killed", are we gracefully shutting down? If not we're probably blowing away the cache (we do that unless we quit cleanly). jduell

- "Killed by the OS" is not shutting down gracefully. (mfinkle)

- Can we use the onPause event to flush _CACHE_MAP_ to disk, in the hopes of being able to do that before we get killed? (hurley)

What we need is to be able to 'pin' all content that is currently loaded, then when Fennec restarts have a way to just load that from cache without issuing a 302.

- What do you mean "without issuing a 302"? If the content is in cache (which it's likely to be if it was loaded recently, which seems likely in a low-tab environment like fennec), you'll just load it from cache, no? jduell

AppCache/Pinning

Similar to the system I mentioned in (1), we need a way to pin content for long periods of time. The idea is to load that page into the browser, and somehow mark it so that it will not be evicted. The Fennec application would be in control of when a page (and related content) is pinned and when it can be evicted.

- The general solution to this is cache priorities, but we could probably knock off a faster solution that allows simply marking a cache entry as "pinned": it would of course be up to you to make sure you eventually evict these when needed. Open a bug and cc me if you want it. jduell
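The simple "pinned" flag could look roughly like the Python sketch below: an LRU keyed by entry, where eviction skips pinned entries and the caller is responsible for unpinning. `PinnableLRU` and its methods are hypothetical names for illustration; this is not how the existing cache tracks entries.

```python
from collections import OrderedDict


class PinnableLRU:
    """Size-bounded LRU whose eviction pass skips pinned entries."""

    def __init__(self, capacity):
        self.capacity = capacity       # maximum total size in bytes
        self.size = 0
        self._entries = OrderedDict()  # key -> (size, pinned), oldest first

    def put(self, key, size, pinned=False):
        if key in self._entries:
            self.size -= self._entries.pop(key)[0]
        self._entries[key] = (size, pinned)
        self.size += size
        self._evict()

    def touch(self, key):
        """Mark an entry as most recently used."""
        self._entries.move_to_end(key)

    def unpin(self, key):
        """Make an entry evictable again; the caller decides when."""
        size, _ = self._entries[key]
        self._entries[key] = (size, False)
        self._evict()

    def _evict(self):
        # Walk from least to most recently used, dropping unpinned entries
        # until the cache fits. Pinned entries can keep it over capacity.
        for key in list(self._entries):
            if self.size <= self.capacity:
                break
            size, pinned = self._entries[key]
            if not pinned:
                del self._entries[key]
                self.size -= size

    def __contains__(self, key):
        return key in self._entries


cache = PinnableLRU(capacity=100)
cache.put("home", 60, pinned=True)
cache.put("a", 30)
cache.put("b", 30)  # over capacity: "a" is evicted, pinned "home" survives
```

Note that if pinned entries alone exceed the capacity, the cache stays over its limit, which is the "up to you to evict" caveat from the comment above.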

The reason we need something like this is that Stuart has been discussing replacing large parts of the Fennec UI with content that is served from mozilla.com. For example, the "awesome screen" in Fennec might just be a https://home.mozilla.org load. When Fennec starts up, that is the first thing that users will see -- so having no network latency hit would be required.

- A faster (but less modifiable on the fly) way to do this is presumably to bundle a web page with fennec and load that (as a resource:// URL?) rather than pin it in the cache. But then you can't simply update the web server to modify the page--it would be fixed until the browser is updated. Note, though, that unless you cache the home page with a fixed expiration that doesn't require validation, we'll wind up hitting the network to revalidate, which is probably not a win. jduell

Issues Raised by Mobile Team

Writes on the main thread

Any panning performance issue is not acceptable. We know that writing on the main thread in the parent process will cause some devices to block. If the user is doing a pan during this write, it will appear that the world has frozen.

- AFAIK the cache no longer does any writes on the main thread. If you know otherwise, please let the necko team know. jduell

- We have no data that says main thread I/O is happening. This is just a pre-req, not blame of a known situation. If I/O is not on main thread, the world is a better place. (mfinkle)

- All data points to no writes happening on the main thread (hurley)

Limited FS space

Caching on the internal memory of a device will be limited by its small size. For example, my Nexus S (a newer, higher-end Android device running 2.3) has 16GB of storage (iNAND). 1GB is used for internal storage. The other 15GB (or so) is used as external storage. Interestingly (and somewhat unrelated), you can't remove the external memory physically. However, you can mount the memory via USB on your PC. In this case, the external memory is not available to applications.

I think in the short term, we just disable the cache if the user doesn't have external storage. We also should ensure that we don't die if the disk cache location becomes unavailable while we are running.

Maybe in the longer term, we could investigate two disk cache locations, but I don't think I care that much about it now.

For every web page retrieved over SSL, the cache saves a complete copy of the certificate chain (usually a few KB) with the metadata of the page. Reducing this overhead by writing each cert chain to disk just once might become worthwhile.
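One way to write each cert chain just once is to key chains by a digest of their bytes and have each entry's metadata hold only the digest. The Python sketch below shows the idea with in-memory dictionaries standing in for the on-disk structures. `CertChainStore` and its methods are hypothetical, and the sketch ignores reference counting, which would be needed to drop a chain once its last entry is evicted.

```python
import hashlib


class CertChainStore:
    """Stores each distinct certificate chain once, keyed by its SHA-256."""

    def __init__(self):
        self._chains = {}   # digest -> chain bytes (stored once)
        self._entries = {}  # url -> digest (the per-entry metadata)

    def store(self, url, chain_der):
        digest = hashlib.sha256(chain_der).hexdigest()
        self._chains.setdefault(digest, chain_der)
        self._entries[url] = digest

    def chain_for(self, url):
        return self._chains[self._entries[url]]

    def chain_bytes_stored(self):
        return sum(len(chain) for chain in self._chains.values())


store = CertChainStore()
chain = b"\x30\x82" + b"\x00" * 3000  # placeholder bytes, not a real chain
store.store("https://example.com/", chain)
store.store("https://example.com/app.js", chain)
```

With two entries sharing one chain, `chain_bytes_stored()` reports the chain's length once rather than twice.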

PSM caches SSL intermediate certificates, and can be set to cache some CRLs in some configurations, and will probably cache OCSP responses and many more CRLs soon. If the PSM cache isn't regulated, it could easily reach several (dozens of) megabytes because of the CRLs.

What kind of storage space do we want to use in the various situations/what has been deemed an "acceptable" maximum cache capacity? We currently use up to 1G on desktop (depending on amount of free space on disk). Keep in mind, smaller caches will be more likely to evict entries that might be useful later on. (hurley)

Performance (wifi / cell) (As measured before I/O was off the main thread)

I do not have a lot of evidence supporting this, but I wanted to mention it. I did some simple experiments enabling the disk cache on wifi and on a cell (3g) network. What is interesting is that on a fast network, the disk cache made no difference and may have slightly hurt page load perf, while on a 3g network it was a noticeable (~13% iirc) win. This isn't conclusive, but we should investigate this and determine if runtime-switching of the disk cache makes any sense.

- We should investigate why it's a loser on wifi and fix it. jduell

- It doesn't seem to be a loser on wifi now that I/O is off the main thread (hurley)

Effect of Cache Size

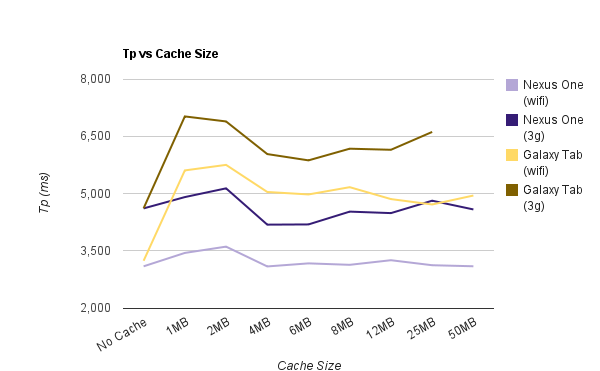

Tested the effect of changing the cache size on Tp pageload results. The Galaxy Tab filesystem is so bad that the cache is never a win. Nexus One has a sweet spot around 4MB - 6MB on wireless (3g), where the win is just over 9%.

These tests started from bug 645848 (see comment 5). I collected some more data using different cache sizes and put the results in a Google Spreadsheet.