User:Rnewman/TreeSync

Scope

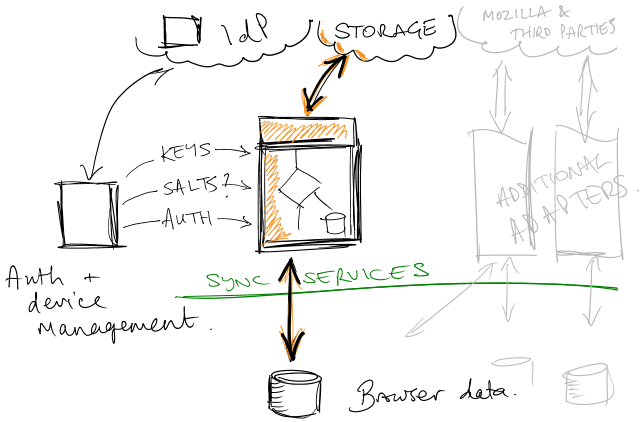

This document attempts to outline one approach for tackling the middle part of the above sketch via a storage protocol and scheme for exchanging structured and semi-structured data. Building alongside a working account system and existing profile storage, we define a storage concept that addresses many of the fundamental flaws in the existing Sync design, and also looks ahead to known future requirements.

Dependencies

We assume the existence of an account system that can control access to storage, as well as providing three things:

- One or more key chains rooted in user-entered credentials, culminating in "bulk" symmetric keys. Storage can ask for a key to encrypt data. (Some of these keys can be stored, wrapped, in the storage system itself.)

- The ability to authenticate with the storage system (e.g., through auth tokens).

- Device management to allow for version negotiation.

Additionally, we need a source of salts for hashing. This can be the client itself or one of the services.

Requirements

We take as our starting point the non-functional requirements at <https://services.etherpad.mozilla.org/2019>:

- Resistant to interruption

- Offlineable

- Support client reconciling without data loss

- Bandwidth-friendly

- Concurrent-safe

And add some obvious ones:

- Support structured and unstructured data efficiently

Concepts

- Content-addressable object storage. Clients can upload records independently from declared state changes. If a record already exists in that state in known history, no upload is required. This reduces the surface area for conflicts (the bulk of upload occurs independent of a 'commit'), and minimizes the need for atomicity to a single operation.

- Isolation of ref/structural updates. This has obvious benefits for minimizing cost in structural changes, but also reduces the incremental expense of detected conflicts (upload only the things that changed).

- Clear pseudo-transaction boundaries: updates of refs. This allows for server-side garbage collection, some degree of historical versioning (useful for reconciling), and easier debugging -- the server isn't just a current skin on top of an object soup.

- Arbitrary granularity and explicit structure: hierarchical structure can be applied to join together related records into an atomic unit. Introducing more roots allows for concurrent changes. Clients can, in principle, track only certain sub-trees. Clients can trivially have knowledge of structure without having to handle records; for example, a mobile client could have structural knowledge of your entire bookmark tree without storing every bookmark locally, fetching subtrees as needed.

- Per-record access is feasible or trivial, depending on incremental feature usage.

Stage 1: whole records

A record is an atomic unit: a bookmark, for example. A record has public fields (GUID, hash, collection) and private content. A value-identical record -- one with the same content -- has the same hash.

A tree is a unit of structure, either explicit (a bookmark structure) or implicit (a collection of history records). All records are tied to a collection root by a structure of trees. The root of a collection is called a ref.

Trees refer to records by hash. Trees can be hashed -- their content is the structure.

Two clients with the same records and structure will have the same root hash.

All hashes are salted, which prevents record identification attacks.

When a client has a change to upload, it:

- Uploads the new records

- Uploads new versions of the entire tree structure affected by the change (proportional to depth)

- Atomically updates the root ('ref')

This seems expensive -- why not identify items in the structure by GUID, which would minimize the number of structural updates? The answer is that doing so provides no mechanism for rooting, managing, and tracking changes. If the same exact tree could identify two states, then another mechanism would have to be introduced to track and consolidate each client's changes -- a transaction, essentially.

Each time a change is uploaded, it is tagged with a generation.

Each time the root is updated, the new root is accorded a new generation, and a reference is recorded to the previous root.

If the current root has changed out from under the uploading client, the update fails, and the client must reconcile and retry.

Trees refer to their earliest subtree generation. This is used for garbage collection by clustering records; once the current tree doesn't refer back to an old generation, the old generation can be completely discarded.

When a client requests new changes, it walks from its last known change to the current root, fetching altered trees, fetching altered records, constituting a new state locally, and then making the appropriate local changes. When it's done, it tracks the new ref.

In order to facilitate GC, clients could inform the server of their current state; that way, the server knows how far back changes need to be kept to support offline clients. This is optional.

Of course, the current tree includes all current state, and pointers back to all trees and records; that's all the server needs to keep. But the more history we replay, the more likely it is that accurate conflict resolution is possible.

Stage 2: incremental

Option A

We can avoid uploading whole records. Instead, upload a change: a reference to the previous content hash, and the changes. This reduces the amount of data uploaded for larger records, and is very efficient for clients that are able to track closely against each other. It's less efficient in other ways: it requires clients to process the entire change sequence to reach the current state, it removes the ability for clients to recognize identical records by their hash (because the object in this case is a change, not an object), and it leaves a trail of garbage.

Option B

But what if we identify changes by their entire object hash -- just as if we uploaded the whole object, but with the contents replaced by a delta? That buys us all of the collision benefits, while still bearing the costs of chaining.

Fetching individual objects

Remember that records are marked with their GUID. A per-object change tree can be easily retrieved by cross-reference. For non-incremental storage, this will consist of whole records, and can be limited to the most recent generations (subject to some complexity in the case of parallel timelines). For incremental storage this will be essentially a transaction log. This will be more costly for clients to process than in Sync 1.1, but does offer an advantage: the ability to use this in-place history to reconcile local changes more accurately than "here's the server record" allows.

To accurately compute the current state of any record involves examining the current tree, unless clients request a generation bump for dependent records on ref update.

Note that trees don't strictly need to be encrypted; they reveal some opaque structure. That would allow the server to do a large amount of this work, rather than the client; for example, the server could traverse the current tree to find the latest version of an object.

Terminology

- Ref

- an identifier which refers to a particular object via content hash.

- Object

- a blob of JSON with an encrypted part ("body") and a public part ("envelope").

- Record

- a kind of object whose body refers to a domain entity.

- Tree

- a kind of object whose body is a structured set of objects, identified either by ref or by hash.

- Collection

- a named ref in the global namespace. Collections are an enclosing scope.

Breaking the chain

How do we achieve the following goals:

- "Rooting" of all objects for garbage collection purposes

- Discoverability of roots (e.g., Places roots/top-level folders)

- Transactional behavior where it counts

without having to modify the entire chain of trees, right up to a single global root, when we modify a leaf?

The answer, I think, is to generalize the concept of a ref to be an entity that is referred to by name, not by value (content hash).

Each collection is a root. Its children could be ordinary hash-addressed values, if we wish to mutate the entire collection consistently. Or its children could be a set of refs. Each ref is a standalone tree; as its contents change, other trees that refer to it can remain unchanged.

Each Places root could be considered a ref, and we can even go so far as to introduce folders by reference.

This opens the door to partial tree synchronization: clients are at liberty to synchronize any individual ref, without even considering the structure of the rest of the collection, let alone values.

So how do we model events like bookmark moves between roots? With care. This is analogous to a move across a filesystem boundary: it's a copy followed by a delete. We have several options:

- Versioning or otherwise evolving the ref records themselves to denote a dependency on a particular version of another ref. ("When you update the toolbar, be sure to also update mobile bookmarks".)

- Performing the two ref updates within the same request and server-side transaction. (This takes care of writes but not necessarily reads.)

- Trusting to luck.

And what about unsorted flat collections? With the assumptions of all items in a collection being named by reference, and the collection being flat, this approach is equivalent to the Sync 1.1 system, but with the ability for clients to manage the set of included items by explicit listing.

So what's the relationship between GUIDs and refs? Surely it makes sense for these to be the same, and for a record with a GUID to implicitly be made available by reference?

Yeah, probably. But there's a difficulty: what about two different records with the same GUID?

Perhaps we allow three kinds of identifiers:

- Refs: names managed by the server.

- GUIDs: names managed implicitly by clients.

- Hashes: content-addressing.

Garbage collection

Assumptions

- It's OK to collect objects that aren't referenced by the current ref version. Clients can reupload collected objects if they're needed later (modulo race concerns), and clients don't have to walk history (they can merge with the current tree at the loss of some granularity).

- But: we might not wish to collect all unreachable objects, because it can save client effort, and the longer the stretch of history the more likely it is that a client can follow a history chain. The primary purpose of GC is to *eventually* clean up, not reach zero overhead. (In that respect this is much like TTLs in Sync 1.1.)

- Partial writes are likely to be resumed or completed, and thus mid-stream garbage should be relatively insignificant (and can be removed by the interrupted client). Most garbage will be due to unreachable old data.

Three options

- Reference counting

- Mark and sweep

- Generations

Reference counting would involve transactionally updating counts for all referenced objects when a ref revision is set or garbage collected. I think that rules it out, but it would allow for accuracy wrt whether a record is needed for a particular ref revision.

Strict generation collection is essentially equivalent to reference counting: all records reachable from each ref revision are numbered with that revision's version (where the version is a server 'clock'). To do so involves touching each of those objects for every ref set, which is also undesirable.

Mark and sweep delays this work until GC time: at GC time we'd walk the current revision's tree, look at the entire record set, and collect unreachable records.

Lazy generation collection achieves an approximately time-based collection approach by decoupling record generations from ref revision generations: new records are given the generation of the forthcoming ref revision, or an auto-incrementing version number (which can be as granular as +1 per record), and trees refer to the lowest version of any of their children (which implies a bottom-up upload).

All objects with a version lower than the current ref revision's tree's minimum generation number can be automatically collected. The longer the stretch between the current and the oldest version, the more reusable objects stick around, but the greater the space usage.

Efficiency can be improved by bumping reused records' versions when trees are updated, which is like incremental marking.

Flaws in this approach

- If you have one, old, untouched bookmark tree, all of its siblings that come and go will have higher version numbers, and won't ever be collected. For bookmarks this is unlikely to be a problem. There are solutions to detect garbage and force it to be exposed: e.g., tracking subtree counts to allow % garbage to be detected, and explicit re-marking of old records (requires touching trees).

- There is a race between GC and new tree references. However, we don't expect clients to have an accurate set of known server records (and if they do, they can be GC-aware), so this is unlikely to be an issue in practice. A client that knows it's about to reuse a record can bump its generation number without reuploading the whole record, then upload new trees accordingly.

Algorithm

- Upload

-

- If necessary, discover or bump existing leaf object version numbers from server.

- Submit new leaf object references to the server. Record the lowest returned version number for each set of records contributing to a tree.

- Submit new trees, each referring to their lowest version number. Each tree will be assigned a version number itself, which will be used in the next layer of trees.

- Update root ref to topmost tree. It'll be given the new version number. Subsequent object uploads will receive the next generation number.

- Garbage collect

-

- Look at current ref, or earliest desired ref version (e.g., the earliest known ref for your client set), and find their lowest used version number.

- Delete all records with a lower version number.

- Bump

-

- Find each tree with a too-old lowest version.

- Walk each tree to find leaf objects hashes.

- Request that each of a set of hashes be allocated a new version number.

- Either by client action or through server support, update lowest-version for each modified tree.

Open questions/flaws

- How do you prevent garbage-collection races between the server and client? Example: the client doesn't re-upload an item because it thinks the server already has it, but the server has meanwhile decided to garbage-collect that item.

- What's the wire protocol like?

- How can we do this efficiently on the server?

- There are some potential areas where races or partial writes can still occur:

- Meta changes: keys, salts.

- Adding new collections and roots.

- Cross-collection changes.

- We need to consider approaches to these -- maybe don't do cross-collection stuff, use intermediation to allow for swapping out whole storage regions, just as we do with trees, have atomic operations for some meta work?

- Version negotiation. Do collections, trees, objects need to be versioned, or do we plan to do full reuploads? Can we support multiple versions at the same time to speed up re-syncing for new devices?

- What's the model for deletions? Present in previous tree but not in this one? If so, we need to be very sure that fresh reconciling is done correctly, or sentinels are left for way-behind clients.

- What about TTLs and expiry?