Accessibility/Video Codecs

Introduction to Video

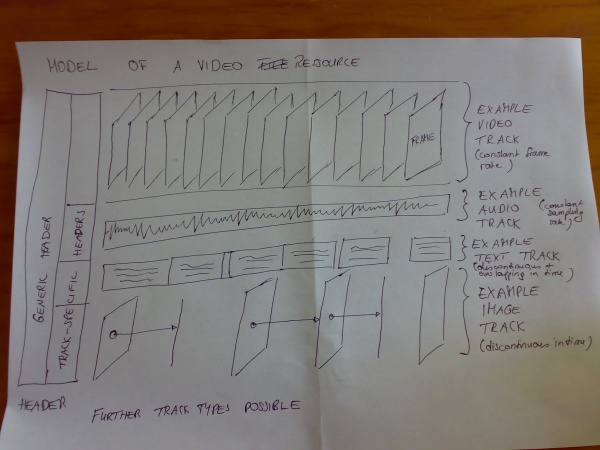

A video consists of multiple tracks of data that are temporally multiplexed (or interleaved) with each other, so that the data from all tracks relevant to any given point in time is stored close together. To be a video, there has to be at least a video track and an audio track. There can be, however, multiple audio tracks (e.g. multiple languages), multiple video tracks (e.g. different camera angles), multiple timed text tracks (e.g. subtitles in different languages), and other tracks, such as timed images (e.g. slides of a presentation) or lighting information for a concert.
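As a rough illustration of temporal multiplexing, the following Python sketch merges per-track packet lists into one stream ordered by time. The `Packet` type, the track labels and the payload strings are hypothetical stand-ins, not part of any real container format.

```python
import heapq
from typing import Iterable, Iterator, NamedTuple


class Packet(NamedTuple):
    time: float   # presentation time in seconds
    track: str    # hypothetical track label, e.g. "video" or "audio"
    payload: str  # stand-in for the encoded data


def interleave(*tracks: Iterable[Packet]) -> Iterator[Packet]:
    """Merge per-track packet sequences into one stream ordered by time.

    Each input track must already be sorted by time.
    """
    return heapq.merge(*tracks, key=lambda packet: packet.time)


video = [Packet(0.00, "video", "v0"), Packet(0.04, "video", "v1"), Packet(0.08, "video", "v2")]
audio = [Packet(0.00, "audio", "a0"), Packet(0.05, "audio", "a1")]
captions = [Packet(0.03, "text", "Hello")]

stream = list(interleave(video, audio, captions))
for packet in stream:
    print(f"{packet.time:.2f} {packet.track:5} {packet.payload}")
```

Because the merged stream is ordered by time, a player reading it sequentially finds the caption packet right next to the video and audio packets it belongs to. Real containers add framing, headers and seeking information on top of this basic idea.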

To multiplex these multiple tracks of data together into one file (or stream), there is a container (or encapsulation format).

To compress high bandwidth content such as video or audio into a smaller file, there are codecs (encoder/decoder).

Thus, the simplest video with a caption consists of a container, a video codec, an audio codec and captions that have been multiplexed as a timed text codec.

As an aside: captions (or subtitles) can also be created for videos by "burning" them into the video. However, re-accessing such captions, e.g. in order to do searches, requires OCR. In modern digital times, that is not a preferred way of dealing with captions and will not be considered here.

Software support

Desktop environments support video codecs through media frameworks. A media framework is a piece of software that provides media decoding (and sometimes encoding) and rendering support to application programmers. A media framework typically consists of a set of filters that are put together into a filter network to perform the task.

For example, to decode an Ogg Theora/Vorbis video file, you would need filters to read the file, strip off the Ogg encapsulation, de-multiplex the codec tracks, decode the Theora video, decode the Vorbis audio, send the decoded video to a given display area and the decoded audio to the sound device, and keep the two synchronised.
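The filter idea can be sketched in a few lines of Python. This is a toy model only: the filter names and the dictionary passed between them are hypothetical and do not correspond to the API of DirectShow, QuickTime, GStreamer or any other framework, and real frameworks connect filters into a graph rather than a simple chain.

```python
from typing import Callable, Dict, List

Data = Dict[str, str]
Filter = Callable[[Data], Data]


def read_file(data: Data) -> Data:
    # Source filter: stands in for reading the raw bytes from disk.
    return {**data, "ogg_bytes": f"bytes of {data['path']}"}


def demux_ogg(data: Data) -> Data:
    # Strip the Ogg encapsulation and split out the codec tracks.
    return {**data, "theora_packets": "theora", "vorbis_packets": "vorbis"}


def decode_theora(data: Data) -> Data:
    return {**data, "frames": f"decoded {data['theora_packets']}"}


def decode_vorbis(data: Data) -> Data:
    return {**data, "samples": f"decoded {data['vorbis_packets']}"}


def render_in_sync(data: Data) -> Data:
    # Sink filter: hands frames to the display and samples to the sound device.
    return {**data, "output": f"{data['frames']} + {data['samples']} in sync"}


def run(filters: List[Filter], data: Data) -> Data:
    """Pass the data through each filter in order."""
    for apply_filter in filters:
        data = apply_filter(data)
    return data


PIPELINE = [read_file, demux_ogg, decode_theora, decode_vorbis, render_in_sync]
result = run(PIPELINE, {"path": "example.ogv"})
print(result["output"])
```

In this model, supporting a caption codec would mean adding one more decoding filter to the list and teaching the final filter how to present its output.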

Media frameworks are usually very flexible in their codec and container format support, because filters are built such that they can easily connect to each other. Also, it is easy to extend support for a new container format or codec by adding the libraries for these formats to the system and adding a plugin to the framework that adds the filters.

It is through the OggCodecs filters that the Microsoft Windows DirectShow media framework receives support for Ogg Theora.

It is through the XiphQT components that the Apple QuickTime media framework receives support for Ogg Theora.

| Desktop | Framework |
|---|---|
| Windows | DirectShow |
| Mac OS X | QuickTime |
| Gnome | GStreamer |
| KDE | Phonon |

Choosing a Caption Format

To encapsulate a data stream of a given codec into a container, you require a container-specific multiplexing rule (also called a "media mapping" in Ogg). The multiplexing rules need to be supported by tools, frameworks, and applications, in particular video players, for both encoding and decoding.

In theory, given a multiplexing rule, you can put any video codec and any captioning format in any container. However, multiplexing rules don't exist for all codec/container combinations.

Instead, video codecs tend to have a conventional native container, and the choice of video codec dictates the container and audio codec to use. With Ogg Theora chosen as the baseline codec, the native container format is Ogg and the native audio codec is one of Vorbis, Speex, or FLAC.

| Container | Codecs | Authoring tools | Natural captioning format |
|---|---|---|---|
| Ogg | | | CMML, Kate (OGM: SRT) |
| MP4 | H.264 | | 3GPP Timed Text |
| .flv | VP6, H.264 | | W3C TT |
| WMV | WMV9/VC-1 | | |

The Flash plug-in effectively provides a specialized playback framework for .flv and .mp4 containers. Gecko embeds an Ogg-specific playback framework called liboggplay. It only supports the Ogg container format.

Different containers also have different conventional timed text formats. MP4, for example, conventionally carries 3GPP Timed Text, while Flash supports a proprietary CuePoints format (http://www.actionscript.org/resources/articles/518/1/Creating-subtitles-for-flash-video-using-XML/Page1.html) and a simple version of W3C TimedText (http://livedocs.adobe.com/flash/9.0/ActionScriptLangRefV3/TimedTextTags.html).

While the choice of audio and video codecs inside the Ogg container is fairly fixed (for political and legal reasons more than technical ones), Ogg has not yet settled on one conventional native caption format. Instead, the Ogg community has experimented with several formats, amongst them CMML and Kate.

Another project called OGM actually branched the Ogg codebase and implemented support for a wide range of proprietary and non-free codecs. For example, OGM files most often carry video encoded in the MPEG-4 ASP format and audio in Vorbis or AC-3 together with subtitles in SRT, SSA or VobSub format.

Ogg Background

Ogg is a container format for time-continuous data, which interleaves audio and video tracks and any other time-continuously sampled data stream into one flat file.

Ogg is being developed by Xiph.org. Xiph.org has not yet decided how subtitles and captions are best supported inside Ogg.

Ogg itself supports CMML, the Continuous Media Markup Language, which is HTML-like and includes support for hyperlinks. It has been considered for captions and subtitles, but the specifications are still in development.

Whether CMML, or an extended version of it, is a useful solution to accessibility problems has to be re-assessed against the current accessibility requirements. A very interesting implementation and use of many of the CMML/Annodex ideas is MetavidWiki (see http://metavid.ucsc.edu/), which is an open source wiki-style social annotation authoring tool.

Further, Ogg recently added Kate, a codec for karaoke with animated text and images. Kate defines its own file format for specifying karaoke and animations. Experience from the implementation of Kate needs to be included in an accessibility solution for Ogg.

There is currently no implementation of W3C Timed Text (TT) for Ogg or of any of the other subtitling formats.

What is involved with creating a caption format for Ogg

Including a text stream such as W3C TT in Ogg requires defining a so-called media mapping:

- definition of the format of the codec bitstream, i.e. what packets of data are being encoded and how do they fit into Ogg pages

- definition of the format of the codec header pages

This enables multiplexing of the data stream into the Ogg container.
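One concrete part of fitting packets into Ogg pages is the segment table: per RFC 3533, each packet is split into segments of at most 255 bytes, and the page header records one "lacing value" per segment. A value below 255 marks the end of a packet. The following sketch shows that arithmetic; the function names are hypothetical, and packets that continue across page boundaries are deliberately ignored.

```python
from typing import List

MAX_SEGMENT = 255  # largest length a single lacing value can express


def lacing_values(packet_length: int) -> List[int]:
    """Return the lacing values describing one packet of the given length.

    A packet whose length is an exact multiple of 255 ends with a 0 entry,
    because only a value below 255 terminates a packet.
    """
    if packet_length < 0:
        raise ValueError("packet length must be non-negative")
    full, rest = divmod(packet_length, MAX_SEGMENT)
    return [MAX_SEGMENT] * full + [rest]


def packet_lengths(segment_table: List[int]) -> List[int]:
    """Recover the lengths of the complete packets from a segment table."""
    lengths, current = [], 0
    for value in segment_table:
        current += value
        if value < MAX_SEGMENT:  # a short segment closes the packet
            lengths.append(current)
            current = 0
    return lengths


caption = "Hello, world".encode("utf-8")  # a 12-byte text packet
table = lacing_values(600) + lacing_values(len(caption))
print(table)
print(packet_lengths(table))
```

Caption packets are typically short, so most of them would need only a single lacing value. The harder part of a media mapping is deciding what goes into each packet and into the header pages.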

At this stage we are not clear which format to use. The solution may be found in one format that supports all the requirements, or in multiple formats with the provisioning of a framework for how to implement and interrelate these formats with each other. We may potentially need to cover new ground, create a new specification and promote it into the different relevant communities. Or the best solution may be to support one of the existing subtitling formats and extend it to cover other accessibility needs.

Also, there needs to be a recommendation for how to display the different text codecs on screen, such that a standard means of display for the different types is achieved.

For example, audio annotations that are given as text rather than as an additional audio track should not be displayed as text. Instead, they should be exposed with live region semantics so that assistive technologies are notified when there is new text. The user can then configure their assistive technology to present the text via text-to-speech or on a Braille display. With text-to-speech, the user could also choose the speech rate and route the output to another audio channel. This means that users could hear audio descriptions at a high TTS rate through a headset, and other viewers wouldn't necessarily hear them.

As for the implementation of support for a new text codec, there is a whole swag of software to be extended.

Enabling support for a text codec in Firefox requires extensions to liboggz, liboggplay, and the Mozilla adaptation code. The adaptation code is the implementation of the WHATWG HTMLMediaElement, HTMLAudioElement and HTMLVideoElement DOM objects, which uses liboggplay functions to decode the stream and obtain the video and audio data. liboggplay would therefore need functions to extract the decoded caption information. In addition, web developers would need a way to get at that information, that is, DOM methods or events, whose implementation would use the new liboggplay functionality.

Enabling support for desktop use requires support in the OggDSF DirectShow filter, in the XiphQT QuickTime components, and in MPlayer, VLC, GStreamer, Phonon, and xine. Support in these media frameworks has a follow-on effect: it also creates support in video players, video applications, and Web browsers, such as Safari, that rely on native platform media frameworks to decode video.

Enabling authoring requires support in ffmpeg, ffmpeg2theora, and further GUI authoring applications to be determined.