QA/Automation/Projects/Mozmill Automation/On Demand Test Framework

Overview

(need to expand this, this is not at all final wording)

The On-Demand Test Framework will be a full-service execution framework for Mozmill tests and, perhaps eventually, other tests. It will allow execution of tests across a grid of machines. Eventually, it will include auto-provisioning of that grid and features to allow more granular parallel execution.

| Name: | On-Demand Test Framework |

| Leads: | Geo Mealer, Dave Hunt |

| Contributors: | TBD |

| Pivotal: | https://www.pivotaltracker.com/projects/404111 |

| Bugzilla: | https://bugzilla.mozilla.org/show_bug.cgi?id=698654 |

| Other Docs: | Index |

Goal History

| Period | Status | Goal |

| 2011 Q4 | [ON TRACK] | Implementation of a remote mechanism to trigger Mozmill functional and update tests for Firefox releases |

Requirements

- We want to be able to kick off test runs with as little interaction and micromanaging as we can.

- A test run equates to a script with one or more parameters, which will be run on one or more environments. These parameters correspond mostly to the test matrix, and will vary per run type.

- Within a given environment, the test run is capable of doing everything from app deployment to testing to app cleanup. We can also split these steps up if that is more convenient.

- We'll want the system to spread testing among a number of available machines per environment, and ultimately create the machines as needed.

- Ultimately, we'd like the ability to slice the test run on a parameter value to run in parallel. If we can get it, understanding how to slice the test run per-test would be even better.

- We'd like to clearly monitor run progress, and to have a watchdog for hung machines.

- We'd like to see understandable test results, preferably consolidated across a whole run (at least per-env, if not cross-env).
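As an illustration of the run model described in the requirements above (a script plus parameters, run on one or more environments, optionally sliced on a parameter value), here is a minimal sketch. The names `Job`, `expand_run`, and `slice_on`, and the example script, environment, and parameter names, are all hypothetical and do not come from any existing design.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Job:
    """One unit of work: a script, one environment, one set of parameters."""
    script: str
    environment: str
    params: tuple  # sorted (name, values) pairs, kept immutable


def _freeze(matrix):
    """Turn a {name: [values]} mapping into a sorted, hashable tuple."""
    return tuple(sorted((name, tuple(values)) for name, values in matrix.items()))


def expand_run(script, environments, matrix, slice_on=None):
    """Expand a test run into jobs (hypothetical helper).

    Without slice_on, each environment gets one job carrying the full matrix.
    With slice_on, each value of that parameter becomes its own job, so the
    values can run in parallel on separate machines.
    """
    jobs = []
    for env in environments:
        if slice_on is None:
            jobs.append(Job(script, env, _freeze(matrix)))
            continue
        for value in matrix[slice_on]:
            sliced = dict(matrix)
            sliced[slice_on] = [value]
            jobs.append(Job(script, env, _freeze(sliced)))
    return jobs


# Hypothetical run: two environments, two locales, one channel.
matrix = {"locale": ["en-US", "de"], "channel": ["beta"]}
per_env = expand_run("update_tests.py", ["win7", "osx"], matrix)
per_locale = expand_run("update_tests.py", ["win7", "osx"], matrix, slice_on="locale")
```

In this sketch, `per_env` holds one job per environment, while `per_locale` holds one job per environment and locale. Slicing per test rather than per parameter would need knowledge of the individual tests, which this sketch does not attempt.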

Project Milestones

| Milestone | Status | Description |

| Basic Execution | Design Stage [ON TRACK] | System allows execution of test-run demands against standing machines. |

| Auto-Provisioning | Not started | System automatically provisions and/or resets virtual machines |

| Parallelism | Not started | System knows how to slice up a test run more finely than per-platform |

Architecture

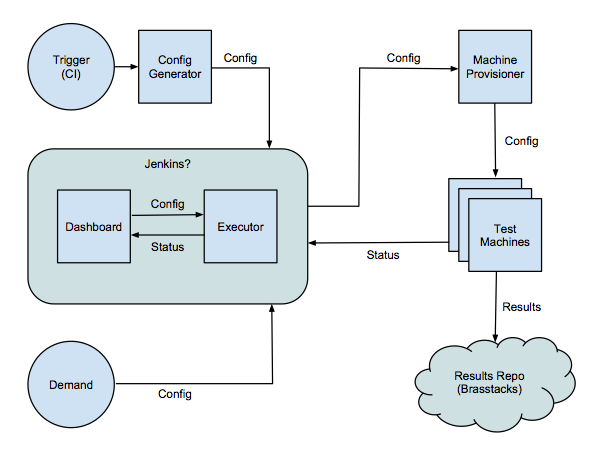

The Trigger and Config Generator components are a proposed part of integrating the CI goal into this system by automatically generating a Demand, and will not be covered in this project.

| Component | Description |

| Demand | User-facing interface for demanding a one-time test run |

| Config | Standardized configuration document for describing a test run |

| Dashboard | User-facing interface for tracking run status |

| Executor | Kicks off and centrally monitors the test run |

| Provisioner | Initializes appropriate machines for execution |

| Machine | Environment on target machines for remote execution |
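To make the hand-off between these components concrete, the following is a rough sketch of how a Config might flow through an Executor and a Provisioner. It assumes standing machines only (no auto-provisioning) and a JSON-serialized Config. The class layout, field names, and status strings are illustrative assumptions, not a settled interface.

```python
import json
from dataclasses import dataclass, field


@dataclass
class Config:
    """Hypothetical standardized description of one test run."""
    script: str
    environments: list
    params: dict = field(default_factory=dict)

    def to_json(self):
        return json.dumps(
            {"script": self.script,
             "environments": self.environments,
             "params": self.params},
            sort_keys=True,
        )

    @classmethod
    def from_json(cls, text):
        data = json.loads(text)
        return cls(data["script"], data["environments"], data.get("params", {}))


class Provisioner:
    """Hands out standing machines per environment (assumed fixed pool)."""

    def __init__(self, pool):
        self.pool = pool  # environment name -> list of machine names

    def acquire(self, environment):
        machines = self.pool.get(environment, [])
        if not machines:
            raise LookupError(f"no machine available for {environment}")
        return machines[0]


class Executor:
    """Kicks off the run and keeps per-environment status for a dashboard."""

    def __init__(self, provisioner):
        self.provisioner = provisioner
        self.status = {}

    def run(self, config, run_on_machine):
        # run_on_machine(machine, config) -> bool stands in for the Machine
        # component; here it is just a callable supplied by the caller.
        for env in config.environments:
            machine = self.provisioner.acquire(env)
            self.status[env] = "running"
            passed = run_on_machine(machine, config)
            self.status[env] = "passed" if passed else "failed"
        return self.status


# Hypothetical usage: the "Demand" is simply a Config serialized as JSON.
demand = Config("functional_tests.py", ["linux", "win7"], {"channel": "beta"}).to_json()
executor = Executor(Provisioner({"linux": ["m1"], "win7": ["m2"]}))
result = executor.run(Config.from_json(demand), lambda machine, cfg: machine == "m1")
```

The sketch runs environments sequentially for simplicity. A real Executor would presumably dispatch to machines concurrently and report status as it changes.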

If an off-the-shelf CI tool like Jenkins turns out to be appropriate for our needs, it may subsume the Dashboard and Executor components.

The question of which module controls and watchdogs the individual Test Machines performing the run has not been answered yet. Further analysis will show whether that role belongs in the Executor or the Provisioner, whether a machine can run relatively autonomously once it has been initialized, or whether a new component is needed.
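Whichever component ends up owning it, the watchdog logic itself could be small. Below is a minimal sketch of one common approach, heartbeat timeouts: each machine reports in periodically, and any machine that has been silent for longer than a timeout is flagged as hung. The `Watchdog` class, its method names, and the 30-second timeout are assumptions for illustration only.

```python
import time


class Watchdog:
    """Flags machines whose last heartbeat is older than a timeout."""

    def __init__(self, timeout_seconds, clock=time.monotonic):
        self.timeout = timeout_seconds
        self.clock = clock  # injectable so the logic can be tested
        self.last_seen = {}  # machine name -> time of last heartbeat

    def heartbeat(self, machine):
        """Record that a machine has just reported in."""
        self.last_seen[machine] = self.clock()

    def hung_machines(self):
        """Return the sorted names of machines silent for too long."""
        now = self.clock()
        return sorted(
            name for name, seen in self.last_seen.items()
            if now - seen > self.timeout
        )


# Hypothetical usage with a fake clock, so no real waiting is needed.
fake_now = [0.0]
watchdog = Watchdog(timeout_seconds=30, clock=lambda: fake_now[0])
watchdog.heartbeat("machine-a")   # reports at t=0
fake_now[0] = 20.0
watchdog.heartbeat("machine-b")   # reports at t=20
fake_now[0] = 40.0
hung = watchdog.hung_machines()   # machine-a has been silent for 40s
```

Because the class only stores timestamps, it could live inside the Executor, the Provisioner, or a separate component without changing its logic.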

In addition to these, Status formats must be defined wherever they appear in the architecture. It is not yet known which module will define them, or whether they will be significant enough to require subprojects.