QA/Content Handling

Revision History

This section describes the modifications that have been made to this wiki page. A new row is added each time the content of this document is updated (small corrections for typographical errors do not need to be recorded). The description of each modification states how it differs from the prior version: which sections were updated and to what extent.

| Date | Version | Author | Description |

|---|---|---|---|

| 05/24/2016 | 1.0 | William Hsu | Initial draft |

| 09/12/2016 | 1.1 | Adrian Florinescu | Updates |

| 09/22/2016 | 2.0 | Adrian Florinescu | Added Feature (Sub)sections chapter and table |

Overview

Purpose

Detail the purpose of this document. For example:

- The test scope, focus areas and objectives

- The test responsibilities

- The test strategy for the levels and types of test for this release

- The entry and exit criteria

- The basis of the test estimates

- Any risks, issues, assumptions and test dependencies

- The test schedule and major milestones

- The test deliverables

Feature (sub)Sections

This section is meant for features that are implemented over several iterations, so parts of the feature land and are released before the whole is complete. The main test plan is still updated and followed for the full feature, but each small iteration gets its own dedicated test plan, because the data relevant to an iteration is not necessarily relevant to this test plan, which covers the full feature.

| Ref | Subsection | (sub)Metabug - if available | Landed Date | Version Landed | Train Progress | TestPlan | QA Owners |

|---|---|---|---|---|---|---|---|

| 1 | Download Summary | 1300947 | 2016-08-02 | 51.0a1 | 51.0a2 | DownloadPanel DropMarker Test Plan | QA Eng Team |

| 2 |

Scope

This wiki details the testing that will be performed by the project team for the Content Handler project. It defines the overall testing requirements and provides an integrated view of the project test activities. Its purpose is to document:

- What will be tested

- How testing will be performed

Ownership

• EPM (Manager: Vance Chen)

• UX (Manager: Harly Hsu)

- Bryant Mao

- Carol Huang - Visual Designer

- Morpheus Chen

• Front End (Manager: Evelyn Hung)

• Platform (Manager: Ben Tian)

• QA (Lead: Brindusa Tot)

- Adrian Florinescu (Feature owner)

- Ovidiu Boca

- Roxana Leitan

- Simona Badau

Testing summary

Scope of Testing

In Scope

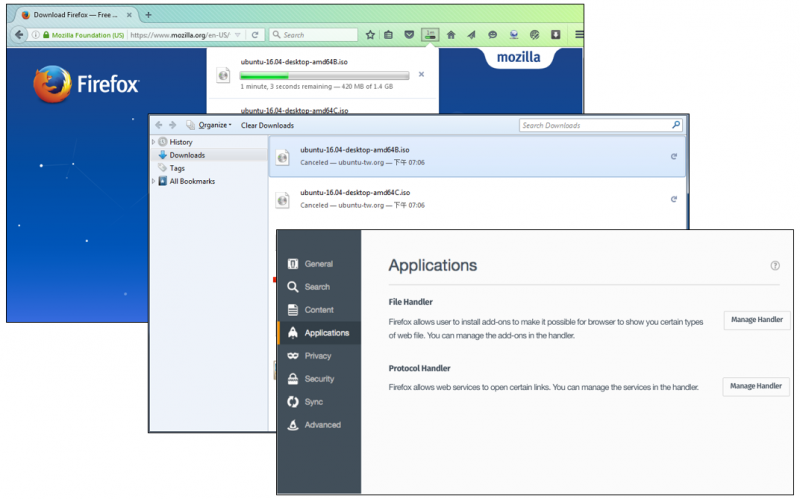

In this project, we intend to improve the content handling experience of Firefox Desktop. The enhancement covers scenarios such as the “Open with” dialog, saving a file (download panel), how that file is then handled (content handling, under Preferences → Applications), and the related platform-side design refactoring. The testing effort for Content Handling will therefore be invested in the following areas:

- Release Acceptance testing

- Compatibility testing - Backward compatibility

- Continuous integration

- Destructive testing (Forced-Error Test)

- Functional vs non-functional (Scalability or other performance) testing

- Performance testing

- Regression testing

- Usability testing

Out of Scope

The following areas/features are out of scope and will not be treated as testing zones in this test plan.

- Accessibility testing (UX will deal with it)

- L10N test (L10n team will deal with it)

- Security vulnerability scanning (the Security team will deal with it; if any patch raises a security concern, we will add the "sec-review" flag to the bug)

Requirements for testing

Environments (TBC)

- Linux

- OS X 10.10

- Windows 7 x32

- Windows 10 x64

Test Strategy

Test Objectives

This section details the progression test objectives that will be covered. Please note that this is at a high level. For large projects, a suite of test cases would be created which would reference directly back to this master. This could be documented in bullet form or in a table similar to the one below.

| Ref | Function | Test Objective | Evaluation Criteria | Test Type | Owners |

|---|---|---|---|---|---|

| 1 | Download process | Verify download process | 1. A download-starting notification pops up when a new download request is triggered. 2. There is no Opening dialog. | Manual | Eng Team |

| 2 | Download panel :: Overview | Verify the number of maximum download items displayed | Max numbers of download items displayed at the same time: 5 | Manual | Eng Team |

| 3 | Download panel :: Summary section | Verify the summary section for Multiple Files information in Downloads Panel | 1. New Icon in summary section 2. Format change of downloading/completeness status 3. ETA format is changed to Xm XXs left 4. Show the overall network speed. *UX spec, https://mozilla.invisionapp.com/share/4Y6ZZH1E8#/screens/156737043 | Manual | Eng Team |

| 4 | Download panel :: Show All Downloads | Verify "Show All Downloads" section in Downloads Panel | 1. A new button opens a dropdown menu with the three new features: Clear List, Open Downloads Folder, Download Settings. 2. The “Show All Downloads” section is displayed when the mouse hovers over the summary section. This only happens when the extra summary section is shown. *UX spec, https://mozilla.invisionapp.com/share/4Y6ZZH1E8#/screens/156737044 | Manual | Eng Team |

| 5 | Download status | Verify all download status | The download status information for each item in the download panel is redesigned. The new statuses are: Starting, Downloading, Complete, Pause, Cancelled, Failed, Deleted. | Manual | Eng Team |

| 6 | Downloads remaining time | Verify downloads remaining time on the downloads button | The remaining time on download indicator is removed | Manual | Eng Team |

| 7 | Animation and downloads remaining time | Verify animation and downloads remaining time on the downloads button | 1. The remaining time on the download indicator is removed. 2. An animation notifies the user whenever a download completes while multiple files are downloading. 3. An alert icon is shown on the downloads button when a download fails. | Manual | Eng Team |

| 8 | File handling flow | Verify the new UI and flow | 1. When a non-previewable link is clicked, no Open/Save dialog pops up. 2. An add-on or plugin becomes the default handler after installation if it registers as a content handler. | Manual | Eng Team |

| 9 | Protocol Handling Flow | Verify the new UI and flow | When a clicked protocol link has no default handler, the possible protocol handlers pop up or the OS is asked to handle the link | Manual | Eng Team |
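The ETA criterion for the summary section ("Xm XXs left") can be turned into an expected-value helper for manual checks. The sketch below is only an illustration: the function name `format_eta` is hypothetical, and both the zero-padded seconds and the absence of an hours field are assumptions read from the "Xm XXs" pattern. The UX spec remains the authority on the exact format.

```python
def format_eta(seconds_left: int) -> str:
    """Return the expected ETA label for a number of remaining seconds.

    Assumption: minutes are unpadded and seconds are zero-padded to two
    digits, following the "Xm XXs left" pattern in the evaluation criteria.
    """
    if seconds_left < 0:
        raise ValueError("seconds_left must not be negative")
    minutes, seconds = divmod(seconds_left, 60)
    return f"{minutes}m {seconds:02d}s left"


# Hypothetical sample values for a tester to compare against the panel.
for remaining in (125, 59, 600):
    print(remaining, "->", format_eta(remaining))
```

A tester could compare the string shown in the Downloads Panel against this value for a download with a known remaining time.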

Builds

This section should contain links to builds with the feature:

- Links for Nightly builds: TBC

- Links for Aurora builds: TBC

- Links for Beta builds: TBC

Test Execution Schedule

The following table identifies the anticipated testing period available for test execution.

| Project phase | Start Date | End Date |

|---|---|---|

| Start project | 03/28/2016 | TBC |

| Study documentation/specs received from developers | 05/20/2016 | 05/27/2016 |

| QA - Test plan creation | 05/24/2016 | 05/27/2016 |

| QA - Test cases/Env preparation | 05/20/2016 | 05/27/2016 |

| QA - Nightly Testing | TBC | TBC |

| QA - Aurora Testing | TBC | TBC |

| QA - Beta Testing | TBC | TBC |

| Release Date | TBC | TBC |

Testing Tools

Detail the tools to be used for testing, for example see the following table:

| Process | Tool |

|---|---|

| Test plan creation | Mozilla wiki |

| Test case creation | TestRail |

| Test case execution | TBC |

| Bugs management | Bugzilla |

Status

Overview

- Track the dates and build number where the feature was released to Nightly

- Track the dates and build number where the feature was merged to Aurora

- Track the dates and build number where the feature was merged to Release/Beta

Testing risks and mitigation

TESTING RISK

Risks can be organized into these categories.

- Test planning and scheduling: This risk arises when there is no separate test plan, only incomplete and superficial summaries in other planning documents. Test plans are also often ignored once they are written. In addition, the testing schedule is often inadequate for the amount of testing that should be performed in TDC, especially when testing is primarily manual.

- Stakeholder involvement: The wrong mindset leads to misconceptions about testing, wrong testing expectations, and stakeholders who are inadequately committed to and supportive of the testing effort. Therefore, we must align stakeholders' expectations with reality before we kick off testing.

- Process integration: This risk often arises when testing and engineering processes are poorly integrated. A "one-size-fits-all" approach to testing is sometimes taken, regardless of the specific needs of the project.

- Test communication risk: This problem often occurs when test documents are not maintained or communication is inadequate.

RISK MITIGATION

The QA team would like to use the following flow to address risk.

- Risk Identification: Risks can be identified using a number of resources, e.g., project objectives, risk lists of past projects, prior knowledge, understanding of system architecture or design, prior bug reports, and complaints. For example, if certain areas of the system are unstable and those areas are being developed further in the current project, this should be listed as a risk. It is good to document the identified risks in detail so that they stay in project memory and can be clearly communicated to project stakeholders.

- Risk Prioritization: Once a risk is fully understood, it can be prioritized by two measures applied to each risk: (1) Risk Impact and (2) Risk Probability. Risk Impact is estimated in tangible terms or on a scale (e.g., 10 to 1 or High to Low). Risk Probability is estimated somewhere between 0 (no probability of occurrence) and 1 (certain to occur) or on a scale (10 to 1 or High to Low). For each risk, the product of Risk Impact and Risk Probability gives the Risk Magnitude. Sorting by Risk Magnitude in descending order gives a list in which the risks at the top are the more serious ones and need to be managed closely.

- Risk Treatment: Each risk in the risk list is subject to one or more of the following Risk Treatments.

  - Risk Avoidance: For example, if there is a risk related to a new feature, it is possible to postpone this feature to a later release.

  - Risk Transfer: For example, if the risk is insufficient security testing of a feature, it may be possible to borrow expertise from elsewhere (for example, an engineer) to perform the security testing.

  - Risk Mitigation: The objective of Risk Mitigation is to reduce the Risk Impact or Risk Probability or both. For example, if the QA team is new and does not have prior system knowledge, a risk mitigation treatment may be to have a knowledgeable team member join the team to train others on-the-fly.

  - Risk Acceptance: This happens when no viable mitigation is available, for example because of limited resources. For example, if all testers are located in the same place, risk acceptance means that no additional QA resource is available. When a holiday comes, some tests will stop, which may be a concern for the project.
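The Risk Prioritization step described above (Risk Magnitude as the product of Risk Impact and Risk Probability, sorted in descending order) can be sketched as follows. This is a minimal illustration, not a tool used by the team: the `Risk` class, the `prioritize` function, and the sample entries with their scores are all hypothetical. Impact is assumed to be on the 1 to 10 scale and probability on the 0 to 1 scale.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Risk:
    name: str
    impact: float       # assumed scale: 1 (low) to 10 (high)
    probability: float  # 0 (cannot occur) to 1 (certain to occur)

    @property
    def magnitude(self) -> float:
        # Risk Magnitude = Risk Impact x Risk Probability
        return self.impact * self.probability


def prioritize(risks):
    """Return the risks sorted by magnitude, most serious first."""
    return sorted(risks, key=lambda risk: risk.magnitude, reverse=True)


# Hypothetical entries and scores, not taken from the project risk list.
risks = [
    Risk("UX spec changes late", impact=8, probability=0.5),
    Risk("Manual test schedule too short", impact=6, probability=0.75),
    Risk("Test documents not maintained", impact=4, probability=0.25),
]

for risk in prioritize(risks):
    print(f"{risk.magnitude:5.2f}  {risk.name}")
```

The risks at the top of the printed list are the ones to manage most closely. Each would then receive one or more of the treatments listed above.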

References

- UX Specification : https://mozilla.invisionapp.com/share/4Y6ZZH1E8

- MANA : https://mana.mozilla.org/wiki/display/PM/Content+Handling+Enhancement

- QA test strategy: https://docs.google.com/document/d/1YK5ePlHa3QfvaVhF4eYy922CmAt1Hkw3cWbYaOZm9AI/edit?usp=sharing

- Meta: Bug 1269956 [meta] Firefox Download Panel UX Redesign

Testcases

Overview

- Test cases on Google doc

Note: UX spec is not yet finalized, and test cases are waiting for review.

Therefore, test cases will be made public later. Sorry for any inconvenience!

Test Areas

| Test Areas | Covered | Details |

|---|---|---|

| Private Window | YES | |

| Multi-Process Enabled | YES | |

| Multi-process Disabled | YES | |

| Theme (high contrast) | NO | |

| UI | ||

| Mouse-only operation | YES | |

| Keyboard-only operation | NO | |

| Display (HiDPI) | NO | |

| Interaction (scroll, zoom) | YES | |

| Usable with a screen reader | N/A | e.g. with NVDA |

| Usability and/or discoverability testing | YES | UX team will help with it |

| Help/Support | ||

| Help/support interface required | TBD | Make sure the link to the support/help page exists and is easily reachable. |

| Support documents planned(written) | TBD | Make sure support documents are written and are correct. |

| Install/Upgrade | ||

| Feature upgrades/downgrades data as expected | N/A | |

| Does sync work across upgrades | YES | |

| Requires install testing | YES | separate feature/application installation needed (not only Firefox) |

| Affects first-run or onboarding | N/A | |

| Does this affect partner builds? Partner build testing | N/A | |

| Enterprise | Raise the topic with developers to see whether they expect the feature to work differently on ESR builds | |

| Enterprise administration | N/A | |

| Network proxies/autoconfig | N/A | |

| ESR behavior changes | N/A | |

| Locked preferences | N/A | |

| Data Monitoring | ||

| Temporary or permanent telemetry monitoring | N/A | List of error conditions to monitor |

| Telemetry correctness testing | N/A | |

| Server integration testing | N/A | |

| Offline and server failure testing | N/A | |

| Load testing | N/A | |

| Add-ons | If add-ons are available for testing the feature, or if the current feature will affect some add-ons, then API testing should be done for the add-on. | |

| Addon API required? | N/A | |

| Comprehensive API testing | N/A | |

| Permissions | YES | |

| Testing with existing/popular addons | YES | |

| Security | Matt Wobensmith is in charge of security. We should contact his team to see if security testing is necessary for the current feature. | |

| 3rd-party security review | YES | Security team will help with it |

| Privilege escalation testing | NO | |

| Fuzzing | NO | |

| Web Compatibility | depends on the feature | |

| Testing against target sites | NO | |

| Survey of many sites for compatibility | NO | |

| Interoperability | depends on the feature | |

| Common protocol/data format with other software: specification available. Interop testing with other common clients or servers. | NO | |

| Coordinated testing/interop across the Firefoxes: Desktop, Android, iOS | NO | |

| Interaction of this feature with other browser features | NO | |

Test suite

- Full Test suite - Link with the gdoc, follow the format from link

- Smoke Test suite - Link with the gdoc, follow the format from link

- Regression Test suite - Link with the gdoc - if available/needed.

Bug Work

Meta: Bug 1269956 - [meta] Firefox Download Panel UX Redesign

Logged bugs (blocking 1269956)

35 Total; 3 Open (8.57%); 20 Resolved (57.14%); 12 Verified (34.29%);

Bug Verification (blocking 1269956)

20 Total; 0 Open (0%); 8 Resolved (40%); 12 Verified (60%);
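The percentages in the two summaries above are each status count divided by the total. The sketch below recomputes them from the counts given here. The function name `status_breakdown` is hypothetical, and the counts are hard-coded rather than fetched from Bugzilla.

```python
def status_breakdown(counts):
    """Map each status to its share of the total, as a percentage
    rounded to two decimal places."""
    total = sum(counts.values())
    if total == 0:
        return {status: 0.0 for status in counts}
    return {
        status: round(100 * count / total, 2)
        for status, count in counts.items()
    }


# Counts copied from the summaries above.
logged = {"Open": 3, "Resolved": 20, "Verified": 12}
verification = {"Open": 0, "Resolved": 8, "Verified": 12}

for label, counts in (("Logged bugs", logged), ("Bug Verification", verification)):
    shares = status_breakdown(counts)
    parts = "; ".join(f"{counts[s]} {s} ({shares[s]}%)" for s in counts)
    print(f"{label}: {sum(counts.values())} Total; {parts}")
```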

Sign off

Criteria

Check list

- All test cases should be executed

- Has sufficient automated test coverage (as measured by code coverage tools) - coordinate with RelMan

- All blocker and critical bugs must be fixed and verified or have an agreed-upon timeline for being fixed (as determined by engineering/RelMan/QA)

Results

Nightly testing

List of OSes that will be covered by testing

- Link for the tests run

Merge to Aurora Sign-off

List of OSes that will be covered by testing

- Link for the tests run

- Full Test suite

Checklist

| Exit Criteria | Status | Notes/Details |

|---|---|---|

| Testing Prerequisites (specs, use cases) | [IN PROGRESS] | |

| Testing Infrastructure setup | NO | |

| Test Plan Creation | [IN PROGRESS] | |

| Test Cases Creation | [IN PROGRESS] | |

| Full Functional Tests Execution | [NOT STARTED] | |

| Automation Coverage | [NOT STARTED] | |

| Performance Testing | [NOT STARTED] | |

| All Defects Logged | [NOT STARTED] | |

| Critical/Blockers Fixed and Verified | [NOT STARTED] | |

| Daily Status Report (email/etherpad statuses/ gdoc with results) | [NOT STARTED] | |

| Metrics/Telemetry | [NOT STARTED] | |

| QA Signoff - Nightly Release | [NOT STARTED] | |

| QA Aurora - Full Testing | [NOT STARTED] | |

| QA Signoff - Aurora Release | [NOT STARTED] | |

| QA Beta - Full Testing | [NOT STARTED] | |

| QA Signoff - Beta Release | [NOT STARTED] | |