Foundation/AI

In 2019, Mozilla Foundation decided that a significant portion of its internet health programs would focus on AI topics. This wiki provides an overview of the issue as we see it, our theory of change and Mozilla's programmatic pursuits for 2020. Above all, it opens the door to collaboration from others.

Watch our January 2020 All Hands Plenary for more information.

Trustworthy AI Brief V0.9

A downloadable version of the issue brief is available here: https://mzl.la/AIIssueBrief. For earlier versions see v0.1, v0.6, and v0.61.

In 2019, Mozilla Foundation decided that a significant portion of its internet health programs would focus on AI topics. This brief offers an update and opens the door to collaboration from others.

Summary

Current debates about AI often skip over a critical question: is AI enriching the lives of human beings?

AI has immense potential to improve our quality of life: teeing up the perfect song; optimizing the delivery of goods; solving medical mysteries. But adding AI to the digital products we use every day can equally compromise our security, safety and privacy. Time and again, concerning stories about AI, big data and targeted marketing hit the news. The public is losing trust in big tech yet doesn’t have any alternatives. There is much at stake.

Mozilla believes we need to ensure that the use of AI in consumer technology enriches the lives of human beings rather than harms them. We need to build more trustworthy AI. For us, this means two things: personal agency is a core part of how AI is built and integrated, and corporate accountability is real and enforced. This will take AI in a direction different from where it’s headed now.

The best way to make this happen is to work like a movement: collaborating with citizens, companies, technologists, governments and organizations around the world working to make ‘trustworthy AI’ a reality. This is Mozilla’s approach. We already have collaborative projects underway in four areas:

- Helping developers build more trustworthy AI, collaborating with Pierre Omidyar and others to put $3.5 million behind professors integrating ethics into computer science curriculum.

- Generating interest and momentum around trustworthy AI technology, backing innovators working on ideas like data trusts and working on open source voice technology.

- Building consumer demand -- and encouraging consumers to be demanding, starting with resources like our Privacy Not Included guide and pushing platforms to tackle misinformation.

- Encouraging governments to promote trustworthy AI, including work by Mozilla Fellows to map out a policy and litigation agenda that taps into current momentum in Europe.

These projects are just a sample -- and just a start -- on how we hope to move the ball forward through this collaborative strategy. We have more in the works.

2020 Collaborative Roadmap to Trustworthy AI

Mozilla’s roots are as a community-driven organization that works with others. We are constantly looking for allies and collaborators to work with on our trustworthy AI efforts. As a part of this, we are looking for AI experts to join our program advisory board.

What is trustworthy?

Our definition of trustworthy AI is encompassed by two key concepts: agency and accountability. We will know we have built and designed AI that is serving rather than harming humanity when:

All AI is designed with personal agency in mind. Privacy, transparency and human wellbeing are key considerations.

and

Companies are held to account when their AI systems make discriminatory decisions, abuse data, or make people unsafe.

Mozilla is a part of a growing chorus of voices calling for a better direction for AI. Dozens of groups have put out principles and guidelines describing what this might look like. We’re excited to see this momentum and to work with others to make this vision a reality. See AI goals framework in appendix.

What’s at stake?

AI is playing a role in everything from directing our attention to deciding who gets mortgages to solving complex human problems. This will have a big impact on humanity. The stakes include:

- Privacy: Our personal data powers everything from traffic maps to targeted advertising.

Trustworthy AI should let people decide how their data is used and what decisions are made with it.

- Fairness: We’ve seen time and again that historical bias can show up in automated decision making.

To effectively address discrimination, we need to look closely at the goals and data that fuel our AI.

- Trust: Algorithms on sites like YouTube often push people towards extreme, misleading content.

Overhauling these content recommendation systems could go a long way to curbing misinformation.

- Safety: Experts have raised the alarm that AI could increase security risks and cyber crime.

Platform developers will need to create stronger measures to protect our data and personal security.

- Transparency: Automated decisions can have huge personal impact, yet the reasons for decisions are often opaque.

We need breakthroughs in explainability and transparency to protect users.

Many people do not understand how AI regularly touches our lives and feel powerless in the face of these systems. Mozilla is dedicated to making sure the public understands that we can and must have a say in when machines are used to make important decisions – and shape how those decisions are made.

How do we move the ball forward?

1. Help developers build more trustworthy AI.

Goal: developers increasingly build things using trustworthy AI guidelines and technologies.

What we’re doing now: working with professors at 17 universities across the US to develop curriculum on ethics and responsible design for computer science undergraduates.

Where we need help: we are looking for partners to scale this work in Europe and Asia, and to find ways to work with developers, designers and project managers already working in the industry.

2. Generate interest and momentum around trustworthy AI technology.

Goal: trustworthy AI products and services (personal agents, data trusts, offline data, etc.) are increasingly embraced by early adopters and attract investment.

What we’re doing now: developing open source voice technology for others to build on, and supporting Mozilla Fellows and others doing early pilot work on concepts like data trusts.

Where we need help: we’re looking for people with novel yet pragmatic ideas on how to make trustworthy AI a reality. We also want to meet and learn from investors in this space.

3. Build consumer demand -- and encourage consumers to be demanding.

Goal: consumers choose trustworthy products when available and call for them when they aren’t.

What we’re doing now: highlighting trustworthy products through our Privacy Not Included buyer’s guide, and pushing platforms like YouTube and PayPal for AI and data related product changes.

Where we need help: we’re looking for more trustworthy products to highlight, and for people both inside and outside major tech companies who can help us drive product improvements.

4. Encourage governments to promote trustworthy AI.

Goal: new and existing laws are used to make the AI ecosystem more trustworthy.

What we’re doing now: building more momentum for trustworthy AI and better data protection in Europe through Mozilla Fellows, partner orgs and lobbying across the region.

Where we need help: we’re looking for additional partners to help us sharpen our thinking on where we can have the most impact on the current political window of opportunity in Europe.

About Mozilla

Mozilla exists to guard the open nature of the internet and to ensure it remains a global public resource, open and accessible to all. Founded as a community open source project in 1998, Mozilla currently consists of two organizations: the 501(c)3 Mozilla Foundation, which leads our movement building work; and its wholly owned subsidiary, the Mozilla Corporation, which leads our market-based work. The two organizations work in concert with each other and a global community of tens of thousands of volunteers under the single banner: Mozilla.

The ‘trustworthy AI’ activities outlined in this document are primarily a part of the movement activities housed at the Mozilla Foundation -- efforts to work with allies around the world to build momentum for a healthier digital world. These include: thought leadership efforts like the Internet Health Report and the annual Mozilla Festival; $7M in fellowships and awards for technologists, policy makers, researchers and artists; and campaigns to mobilize public awareness and demand for more responsible tech products. Approximately 60% of the $25M/year invested in these efforts is focused on trustworthy AI.

Mozilla’s roots are as a collaborative, community-driven organization. We are constantly looking for allies and collaborators to work with on our trustworthy AI efforts.

For more on Mozilla’s values, see: [1]. Our Trustworthy AI goals framework builds on key manifesto principles including agency (principle 5), transparency (principle 8) and building an internet that enriches the lives of individual human beings (principle 3).

For more on Trustworthy AI programs, see [2]

Theory of Change

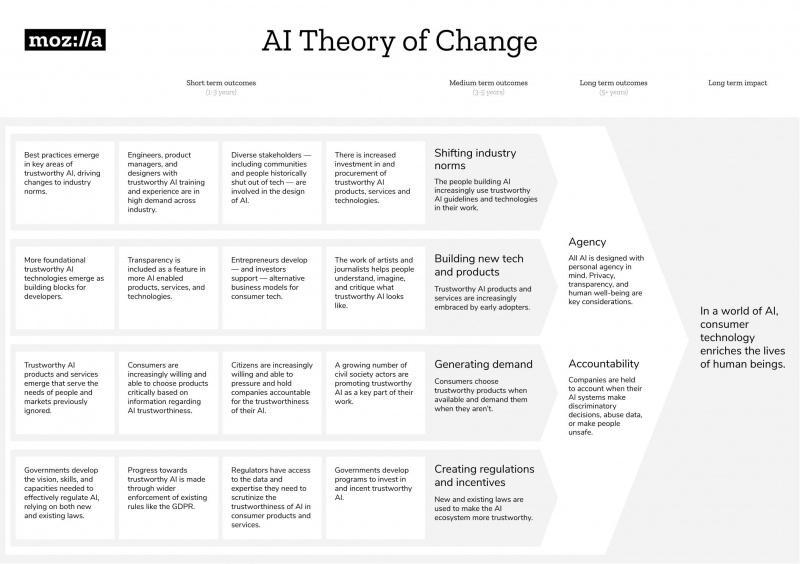

The Theory of Change update will enable Mozilla & our allies to take both coordinated and decentralized action in a shared direction, towards collective impact on trustworthy AI.

It seeks to define:

- Tangible changes in the world we and others will pursue (aka long term outcomes)

- Strategies that we and others might use to pursue these outcomes

- Results we will hold ourselves accountable to

Many people have tried to come up with the right word to describe what 'good AI' looks like -- ethical, responsible, healthy.

The term we find most useful is 'trustworthy AI', as used by the European High Level Expert Group on AI. Mozilla's simple definition is:

"AI that is demonstrably worthy of trust. Privacy, transparency and human well being are key design considerations - and there is accountability for any harms that may be caused. This applies not just to AI systems themselves, but also the deployment and results of such systems."

We plan to use this term extensively, including in our theory of change and strategy work.

2020 OKRs

MoFo 2020 OKRs [draft - March 27, 2020]

The following outlines the organization wide objectives and key results (OKRs) for Mozilla Foundation for 2020.

Theory of change

These objectives have been developed as a part of a year-long strategy process that included the creation of a multi-year theory of change for Mozilla’s trustworthy AI work. The majority of objectives are tied directly to one or more short term (1-3 year) outcomes in the theory of change.

Partnerships

Mozilla Foundation’s overall focus is on growing the movement of organizations around the world committed to building a healthier internet. A key assumption behind this work is that Mozilla maintains a small staff that is skilled at partnering, with most of its resources going into networking and supporting individuals and organizations within the movement. The 2020 OKRs include a strong focus on deepening our partnership practice.

Below is a bulleted list of our OKRs. You can read more about them here.

1. Thought Leadership

Short Term Outcome: Clear "Trustworthy AI" guidelines emerge, leading to new and widely accepted industry norms.

2020 Objective: Test out our theory of change in ways that both give momentum to other orgs taking concrete action on trustworthy AI and establish Mozilla as a credible thought leader.

Key Results:

- Publish a whitepaper theory of change (H1)

- 250 people and organizations participate in mapping to show who is working on key elements of trustworthy AI and offer feedback on the whitepaper

- 25 collaborations with partners working on concrete projects that align with short term outcomes in the theory of change

2. Data Stewardship

Short Term Outcome: More foundational trustworthy AI technologies emerge as building blocks for developers (e.g. data trusts, edge data, data commons).

2020 Objective: Increase the number of data stewardship innovations that can accelerate the growth of trustworthy AI.

Key Results:

- $3 million raised to support bold, multi-year, cross movement initiatives on data stewardship as an indicator of growing philanthropic support in this area.

- 10 awards or fellowships for prototypes or other concrete exploration re: data stewardship.

- 4 concentric “networks of practice” utilize Mozilla-housed Data x Lab

3. Consumer Power

Short Term Outcome: Citizens are increasingly willing and able to pressure and hold companies accountable for the trustworthiness of their AI.

2020 Objective: Mobilize an influential consumer audience using pivotal moments to pressure companies to make ‘consumer AI’ more trustworthy.

Key Results:

- 3m page views to ‘trustworthy AI’ content on Mozilla channels (MoFo website, social media, YouTube, etc.).

- 50k new subscribers drawn from sources (partnerships, contextual advertising, etc.) oriented towards people ages 18-35.

- 25k people share information with us (stories, browsing data, etc.) in order to gather evidence about how AI currently works and what changes are needed.

4. Movement Building

Short Term Outcome: A growing number of civil society actors are promoting trustworthy AI as a key part of their work.

2020 Objective: Partner with diverse movements to deepen intersections between their primary issues and internet health, including trustworthy AI, so that we increase shared purpose.

Key Results:

- 30% increase in partners with whom we (have both) published, launched, or hosted something that includes shared approaches to their issues and internet health (e.g. shared language, methodologies, resources or events).

- 75% of partners from these diverse movements report deepening intersection between their issues and internet health/AI.

- 4 new partnerships in the Global South report deepened intersection between their work and ours.

Timeline

Trustworthy AI fits within the big picture internet health issues that we have been tackling collectively over the past few years. Over the last 18 months, we've been working collaboratively to make this effort crisper.

Below is the paper trail of these efforts. Collectively, they tell the story of how Mozilla got to this goal and why.

Existing MoFo Theory of Change (January 2018)

- Mozilla's long term strategy for making the internet a healthier place. The new theory of change shared above builds on this earlier work.

Impact goal summary (November 2018)

- Summarizes our recommendation to Mozilla's Board of Directors on why the impact goal focus should be 'better machine decision making' (now, trustworthy AI).

Better machine decision making issue brief (November 2018)

- Describes how Mozilla understands the issue of machine decision making and the beginning of a roadmap on areas for improvement to get us to 'better'.

Slowing Down, Asking Questions, Looking Ahead (November 2018)

- This blog summarizes the two resources above -- the 'why' of trustworthy AI and how we got here.

Mozilla, AI and internet health: an update (March 2019)

- This blog post draws direct connections between our movement building theory of change and how trustworthy AI fits into that. It answers the question: how will we shape the agenda, rally citizens and connect leaders around trustworthy AI?

Why AI + consumer tech? (April 2019)

- In April 2019, we narrowed in on consumer technology as the key area where Mozilla can have the biggest impact in the AI field.

Consider this: AI and Internet Health (May 2019)

- This blog explores the aspects of consumer technology that Mozilla should focus on. The list had been narrowed to: accountability; agency; rights; and open source.

Update: Digging Deeper on ‘Trustworthy AI’ (August 2019)

- Here, we share our long term outcomes and long term trustworthy AI goal. We had landed on agency and accountability as our outcomes and "in a world of AI, consumer technology enriches the lives of human beings" as our goal.

Privacy, Pandemics and the AI Era (March 2020)

- This blog explores the connection between the COVID-19 pandemic and the technological solutions being proposed. These issues are central to the long term impact of AI.

Privacy Norms and the Pandemic (April 2020)

- Similar to the post above, here we explore the long term data governance implications of technology deployment during the pandemic.

You can read more about the background for this project here.