B2G/QA/2014-10-20 Performance Acceptance

2014-10-20 Performance Acceptance Results

Overview

These are the results of performance release acceptance testing for FxOS 2.1, as of the Oct 20, 2014 build.

Our acceptance metric is startup time from launch to visually complete, as measured by the Gaia Performance Tests, with the system initialized using make reference-workload-light.

For this release, results are compared against two baselines: 2.0 performance, and our responsiveness guidelines, which target no more than 1000 ms for startup time.

The Gecko and Gaia revisions of the builds being compared are:

2.0:

- Gecko: mozilla-b2g32_v2_0/c17df9fe087d

- Gaia: 9c7dec14e058efef81f2267b724dad0850fc07e4

2.1:

- Gecko: mozilla-b2g34_v2_1/12dc9b782f2a

- Gaia: 2904ab80816896f569e2d73958427fb82aebaea5

Note when comparing to past reports that the test timings changed with Bug 1079700. Baselines for 2.0 have been regenerated with the updated timing code, and are faster in a few cases than in the previous comparison. Where the difference is significant, it is noted: a faster 2.0 time is assumed to mean that the 2.1 results were also affected by the timing changes, which complicates study-over-study comparison.

This initial report is based on half-length runs (240 data points maximum) for 2.0 and 2.1, for expediency. It will be updated with full results for future baselines, and any notable differences (unlikely) will be communicated.

Startup -> Visually Complete

Startup -> Visually Complete times the interval from launch when the application is not already loaded in memory (cold launch) until the application has initialized all initial onscreen content. Data might still be loading in the background, but only minor UI elements related to this background load such as proportional scroll bar thumbs may be changing at this time.

This is equivalent to Above the Fold in web development terms.

More information about this timing can be found on MDN.

Execution

These results were generated from up to 240 data points per application per release, collected over 8 repetitions of make test-perf as follows:

- Flash to base build

- Flash stable FxOS build from tinderbox

- Constrain phone to 319MB via bootloader

- Clone gaia

- Check out the gaia revision referenced in the build's sources.xml

- GAIA_OPTIMIZE=1 NOFTU=1 NO_LOCK_SCREEN=1 make reset-gaia

- make reference-workload-light

- For 8 repetitions:

  - Reboot the phone

  - Wait for the phone to appear in adb, then wait an additional 30 seconds for it to settle.

  - Run make test-perf with 31 replicates
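
The per-release command sequence above can be sketched as a dry-run plan. This is a minimal illustration only, not the script actually used for this study: it covers the steps from reset-gaia onward (flashing, the memory constraint and the Gaia checkout are left out), and the `RUNS` variable used to request 31 replicates is an assumption about how make test-perf is parameterized. `plan_commands` is a hypothetical helper name.

```python
from typing import List

REPETITIONS = 8      # repetitions per release, from the procedure above
REPLICATES = 31      # launches per application per repetition
SETTLE_SECONDS = 30  # extra wait after the phone appears in adb


def plan_commands(repetitions: int = REPETITIONS,
                  replicates: int = REPLICATES) -> List[str]:
    """Return the shell commands for one release, in order (dry run only)."""
    commands = [
        "GAIA_OPTIMIZE=1 NOFTU=1 NO_LOCK_SCREEN=1 make reset-gaia",
        "make reference-workload-light",
    ]
    for _ in range(repetitions):
        commands += [
            "adb reboot",
            "adb wait-for-device",
            f"sleep {SETTLE_SECONDS}",
            # ASSUMPTION: RUNS is the variable that sets the replicate count.
            f"RUNS={replicates} make test-perf",
        ]
    return commands


if __name__ == "__main__":
    for command in plan_commands():
        print(command)
```

Printing the plan rather than executing it keeps the sketch safe to run without a device attached.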

Result Analysis

First, any repetitions showing app errors are thrown out.

Then, the first data point is eliminated from each repetition, as it has been shown to be a consistent outlier likely due to being the first launch after reboot. The balance of the results are typically consistent within a repetition, leaving 30 data points per repetition.

These are combined into a large data point set. Each set has been graphed as a 32-bin histogram so that its distribution is apparent, with comparable sets from 2.0 and 2.1 plotted on the same graph.
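
The filtering and pooling steps above can be sketched as follows. This is an illustration under stated assumptions, not the analysis code used for the report: `pool_repetitions` and `histogram` are hypothetical names, and the equal-width binning between the minimum and maximum value is an assumption about how the 32-bin histograms were built.

```python
from typing import List, Sequence


def pool_repetitions(repetitions: Sequence[Sequence[float]],
                     errored: Sequence[bool]) -> List[float]:
    """Drop errored repetitions, drop each first launch, pool the rest."""
    pooled: List[float] = []
    for times, had_error in zip(repetitions, errored):
        if had_error:
            continue              # whole repetition is thrown out
        pooled.extend(times[1:])  # first launch after reboot is an outlier
    return pooled


def histogram(values: Sequence[float], bins: int = 32) -> List[int]:
    """Count values into equal-width bins spanning min(values)..max(values)."""
    lo, hi = min(values), max(values)
    width = (hi - lo) / bins or 1.0  # avoid zero width if all values match
    counts = [0] * bins
    for value in values:
        index = min(int((value - lo) / width), bins - 1)
        counts[index] += 1
    return counts
```

With 8 clean repetitions of 31 launches this yields 8 × 30 = 240 data points. Discarding one repetition leaves 210 and discarding two leaves 180, which is consistent with the data point counts listed in the results.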

For each set, the median and the 95th percentile results have been calculated. Their real-world significance is as follows:

- Median

  - 50% of launches are faster than this. This can be considered typical performance, but it's important to note that 50% of launches are slower than this, and they could be much slower. The shape of the distribution is important.

- 95th Percentile (p95)

  - 95% of launches are faster than this. This is a more quality-oriented statistic commonly used for page load and other task-time measurements. It is not dependent on the shape of the distribution and better represents a performance guarantee.
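
As a rough sketch, the two statistics can be computed from a pooled data set as shown here. The report does not state which percentile definition was used, so the nearest-rank method below is an assumption, and `p95_nearest_rank` and `summarize` are hypothetical names.

```python
import statistics
from typing import Dict, Sequence


def p95_nearest_rank(values: Sequence[float]) -> float:
    """Smallest value with at least 95% of the samples at or below it."""
    ordered = sorted(values)
    # Integer ceiling of 0.95 * n, avoiding floating-point rounding.
    rank = (95 * len(ordered) + 99) // 100
    return ordered[rank - 1]


def summarize(values: Sequence[float]) -> Dict[str, float]:
    """Return the sample count, median and p95 for one data set."""
    return {
        "n": len(values),
        "median": statistics.median(values),
        "p95": p95_nearest_rank(values),
    }
```

For a full 240-point set, nearest rank picks the 228th fastest launch as p95, so only the 12 slowest launches lie above it.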

Distributions for launch times are positive-skewed asymmetric, rather than normal. This is typical of load-time and other task-time tests where a hard lower-bound to completion time applies. Therefore, other statistics that apply to normal distributions such as mean, standard deviation, confidence intervals, etc., are potentially misleading and are not reported here. They are available in the summary data sets, but their validity is questionable.
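
The effect of positive skew on the mean can be seen with a small synthetic sample. The values below are invented purely for illustration and are not measurements from this study.

```python
import statistics

# INVENTED sample (ms): most launches cluster near a lower bound,
# with a short tail of much slower launches.
sample_ms = [950.0, 960.0, 970.0, 980.0, 990.0,
             1000.0, 1010.0, 1020.0, 1500.0, 2400.0]

mean_ms = statistics.mean(sample_ms)
median_ms = statistics.median(sample_ms)
faster_than_mean = sum(1 for t in sample_ms if t < mean_ms)

print(f"mean={mean_ms} ms, median={median_ms} ms")
print(f"{faster_than_mean} of {len(sample_ms)} launches beat the mean")
```

Two slow launches pull the mean well above the median, so the mean no longer describes a typical launch, which is why the median and p95 are reported instead.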

On each graph, the solid line represents median and the broken line represents p95.

Pass/Fail Criteria

Pass/Fail is determined according to our documented release criteria for 2.1. This boils down to launch time being under 1000 ms.

Median launch time has been used for this, per current convention. However, as mentioned above, p95 launch time might better capture a guaranteed level of quality for the user. In cases where this is significantly over 1000 ms, more investigation might be warranted.
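
The pass/fail rule can be expressed as a short sketch. The strict "under 1000 ms" comparison follows the wording above; how a median of exactly 1000 ms would be treated is an assumption, and since no threshold is given for a "significantly" high p95, the second helper only reports how far p95 sits above the guideline. `verdict` and `p95_overage_ms` are hypothetical names.

```python
GUIDELINE_MS = 1000  # launch-time guideline from the release criteria


def verdict(median_ms: float, guideline_ms: float = GUIDELINE_MS) -> str:
    """PASS when the median launch time is under the guideline."""
    # ASSUMPTION: exactly 1000 ms counts as a FAIL ("under 1000 ms").
    return "PASS" if median_ms < guideline_ms else "FAIL"


def p95_overage_ms(p95_ms: float, guideline_ms: float = GUIDELINE_MS) -> float:
    """How far p95 exceeds the guideline, or 0 if it is within it."""
    return max(0, p95_ms - guideline_ms)
```

Applied to the 2.1 numbers reported below, Clock (median 999 ms) passes with a p95 of 1096 ms sitting 96 ms over the guideline, while Calendar (median 1191 ms) fails.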

Results

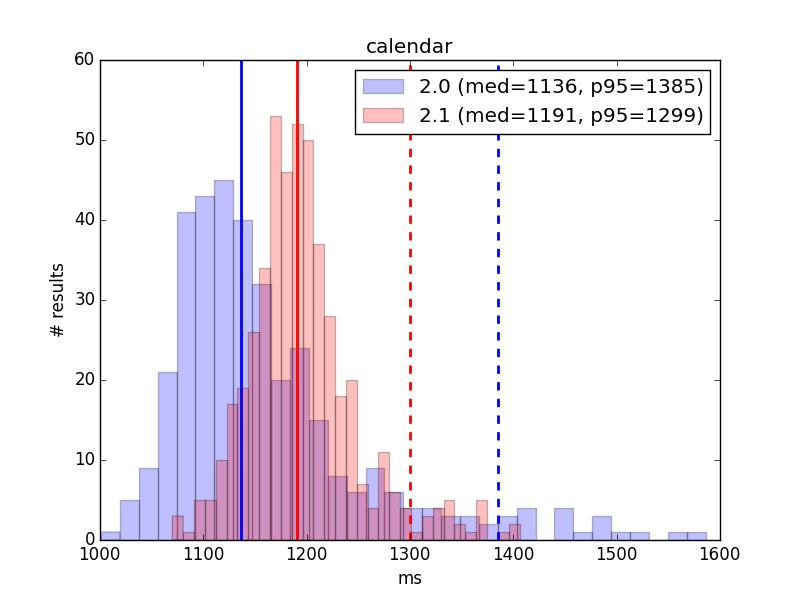

Calendar

2.0

- 240 data points

- Median: 1139 ms

- p95: 1385 ms

2.1

- 240 data points

- Median: 1191 ms

- p95: 1320 ms

Result: FAIL (small regression, over guidelines)

Comment: Calendar's times showed an improvement from the last study, coming out about 75 ms ahead. However, results still show a small regression from 2.0, and remain well over the 1000 ms guidelines, even in the best case.

The p95 behavior has improved as well, both over the last study and 2.0, suggesting more consistent performance.

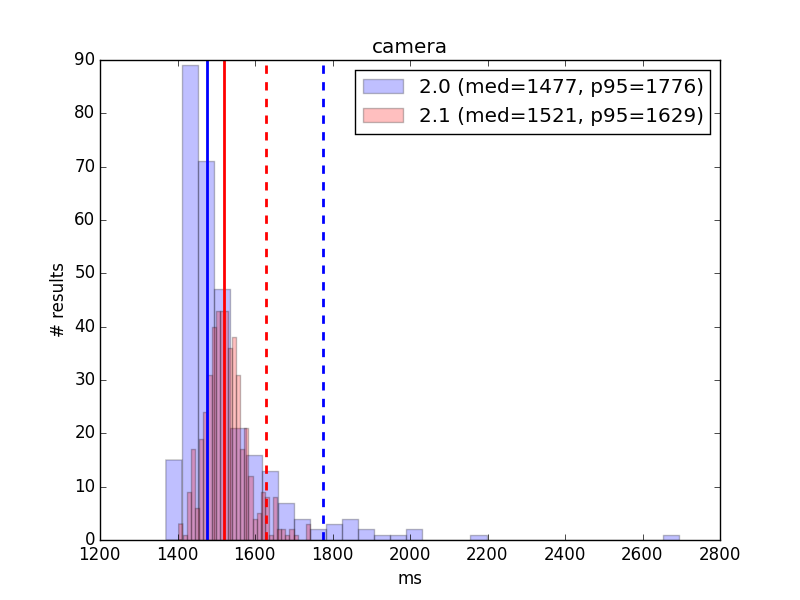

Camera

2.0

- 180 data points

- Median: 1479 ms

- p95: 1738 ms

2.1

- 240 data points

- Median: 1525 ms

- p95: 1629 ms

Result: FAIL (over guidelines)

Comment: Camera's 2.1 results have improved by ~50 ms from the last study.

While Camera does show a ~45 ms regression from 2.0 per these results, investigation by the developers has shown that this does not reflect an actual regression. Rather, the investigation found that the way the timing is calculated changed between the two branches, and that Camera's real-world performance has actually improved significantly between the branches. It does remain over the absolute release acceptance guidelines.

The p95 behavior has improved significantly from both the last study and the 2.0 results, suggesting more consistent performance.

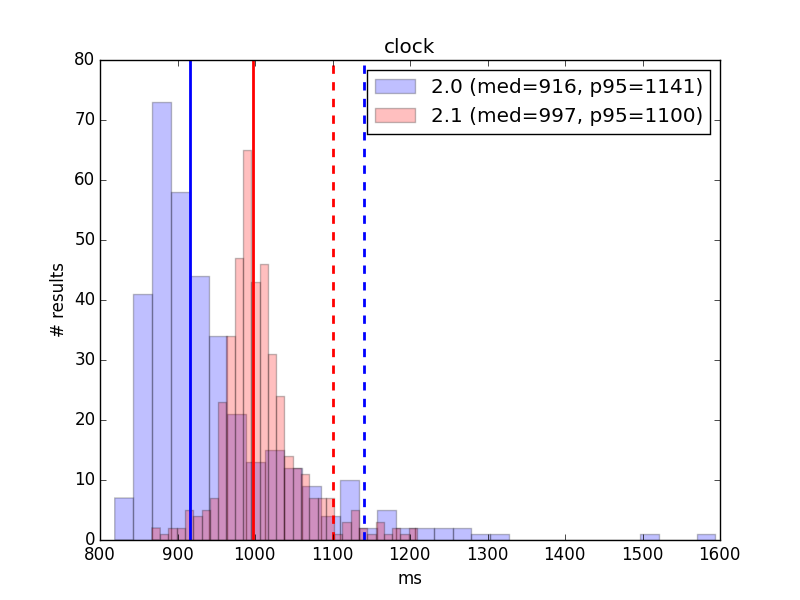

Clock

2.0

- 240 data points

- Median: 915 ms

- p95: 1143 ms

2.1

- 210 data points

- Median: 999 ms

- p95: 1096 ms

Result: PASS

Comment: Clock's median startup performance has improved from the last study by nearly 50 ms. The improvement is sufficient to put Clock barely within acceptance guidelines, though it does still show an ~85 ms regression from 2.0.

The p95 behavior has improved from both the last study and 2.0 and suggests more consistently good results.

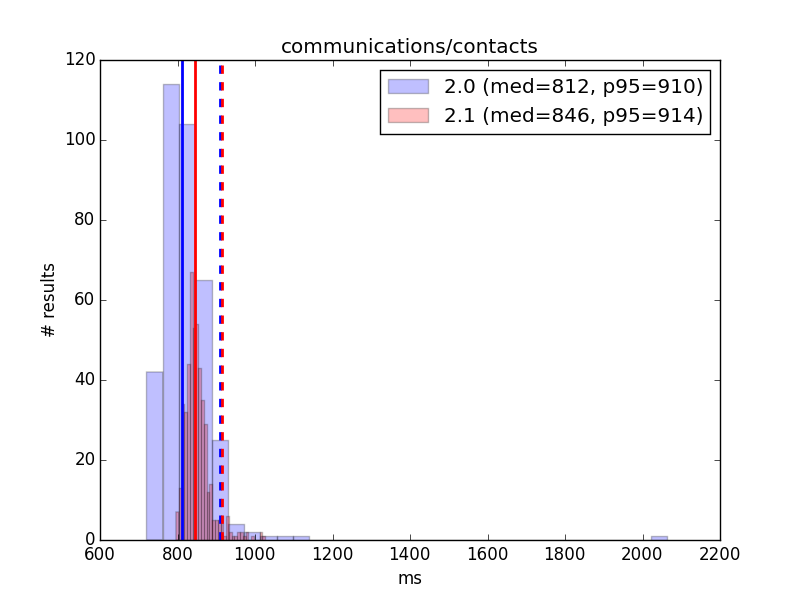

Contacts

2.0

- 240 data points

- Median: 807 ms

- p95: 905 ms

2.1

- 240 data points

- Median: 844 ms

- p95: 909 ms

Result: PASS

Comment: Contacts remains well within acceptance guidelines. While the median (but not p95) has regressed from 2.0, both are significantly better than as measured in the last study.

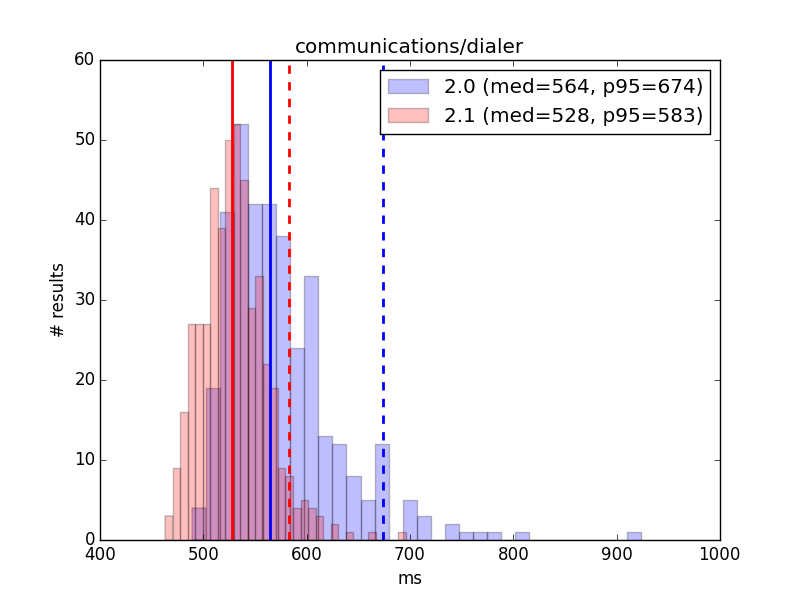

Dialer

2.0

- 240 data points

- Median: 560 ms

- p95: 674 ms

2.1

- 240 data points

- Median: 529 ms

- p95: 571 ms

Result: PASS

Comment: Dialer remains well within guidelines. Even so, its median startup has improved significantly since the last study, by over 100 ms. Its p95 performance improved even more, by almost 150 ms, suggesting more consistently good performance.

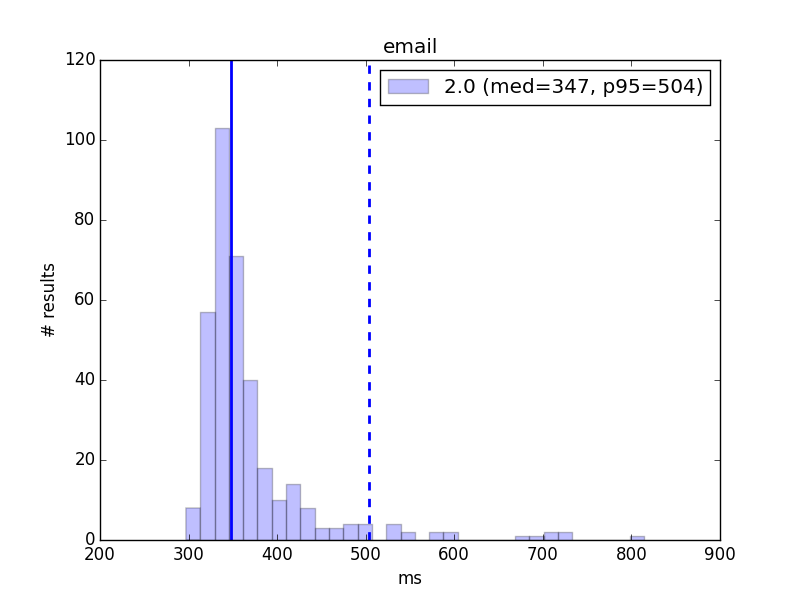

Email

2.0

- 240 data points

- Median: 347 ms

- p95: 494 ms

Result: N/A

Comment: Email has been removed from the 2.1 test manifest. New 2.0 baseline results are given here, but this application will be eliminated in future reports unless the test is restored.

However, Email on 2.1 is almost certainly well under the launch guidelines for this test and should not be a concern.

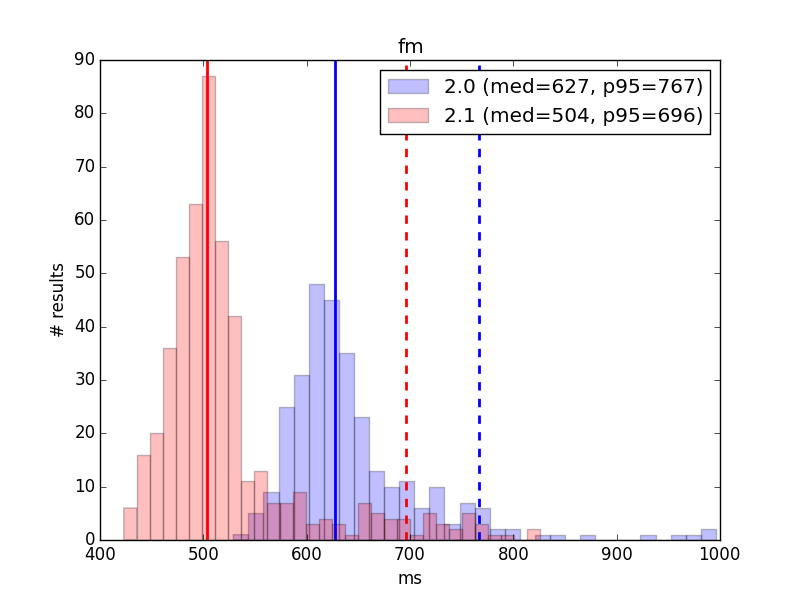

FM Radio

2.0

- 180 data points

- Median: 629 ms

- p95: 771 ms

2.1

- 240 data points

- Median: 503 ms

- p95: 719 ms

Result: PASS

Comment: FM Radio remains well within guidelines. Its numbers have shown a large improvement since the last study, with median startup improving by almost 200 ms and p95 startup improving by around 150 ms.

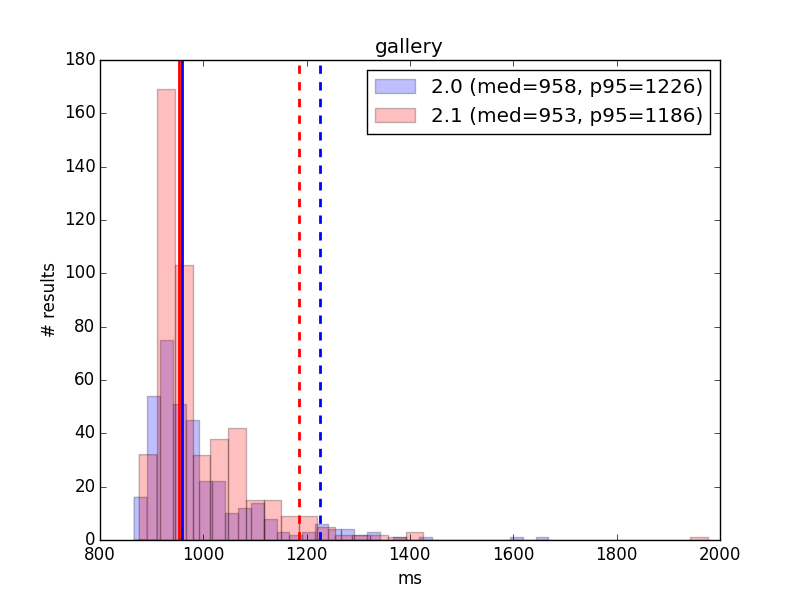

Gallery

2.0

- 240 data points

- Median: 956 ms

- p95: 1225 ms

2.1

- 240 data points

- Median: 954 ms

- p95: 1186 ms

Result: PASS

Comment: Gallery's median startup performance has improved by over 50 ms since the last study, with the p95 performance improving only slightly. Performance is now virtually identical to 2.0, and within acceptance guidelines.

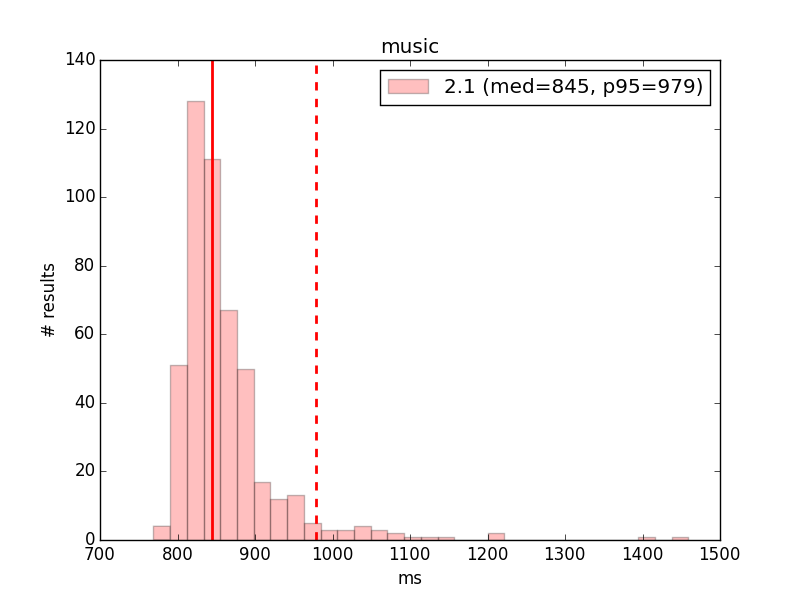

Music

2.1

- 240 data points

- Median: 846 ms

- p95: 967 ms

Result: PASS

Comment: Music is not tested in 2.0. Startup numbers have improved radically for 2.1 since the last study, by around 250 ms for both median and p95. Results are now unimodal, suggesting that the patch mentioned above fixed timing issues for this application, and these numbers do correspond with the "faster" mode of the previous bimodal results. It is well within release acceptance guidelines.

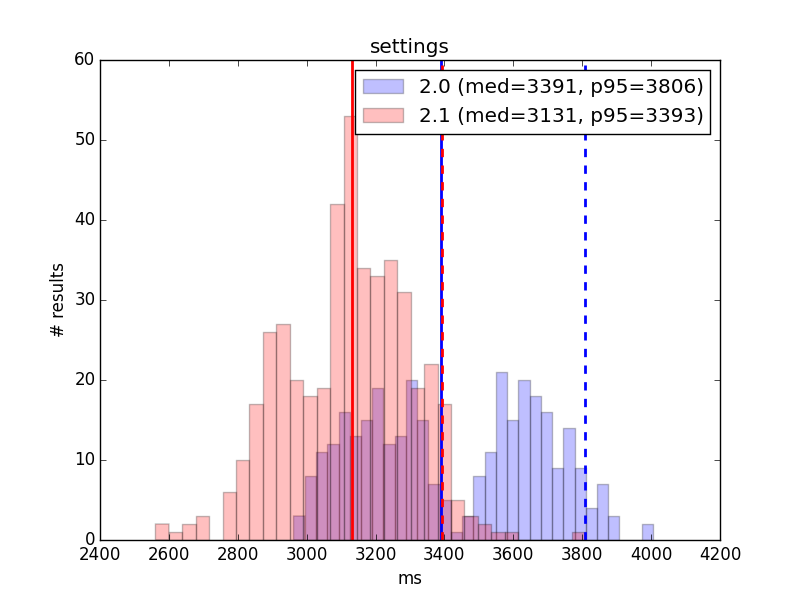

Settings

2.0

- 240 data points

- Median: 3385 ms

- p95: 3803 ms

2.1

- 210 data points

- Median: 3137 ms

- p95: 3398 ms

Result: FAIL (well over guidelines, significant regression from last comparison)

Comment: TBD

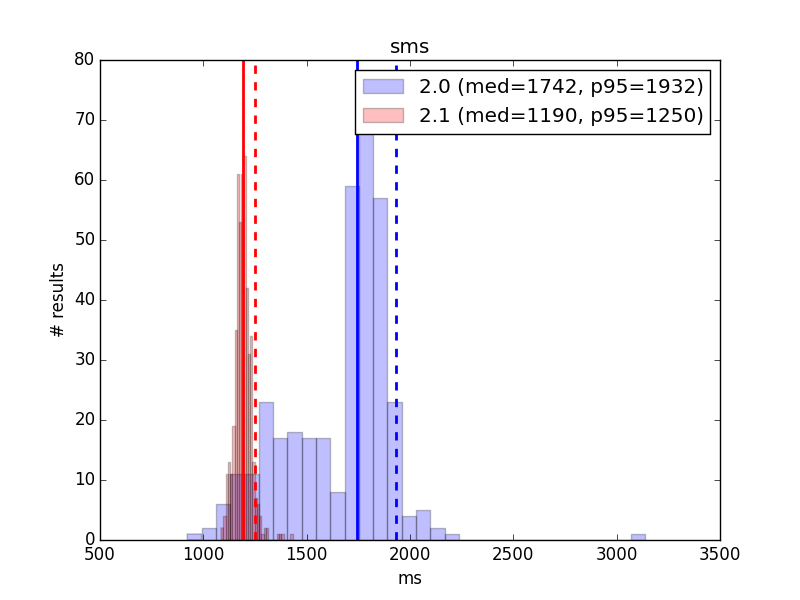

SMS

2.0

- 240 data points

- Median: 1739 ms

- p95: 1919 ms

2.1

- 240 data points

- Median: 1191 ms

- p95: 1251 ms

Result: FAIL (over guidelines)

Comment: TBD

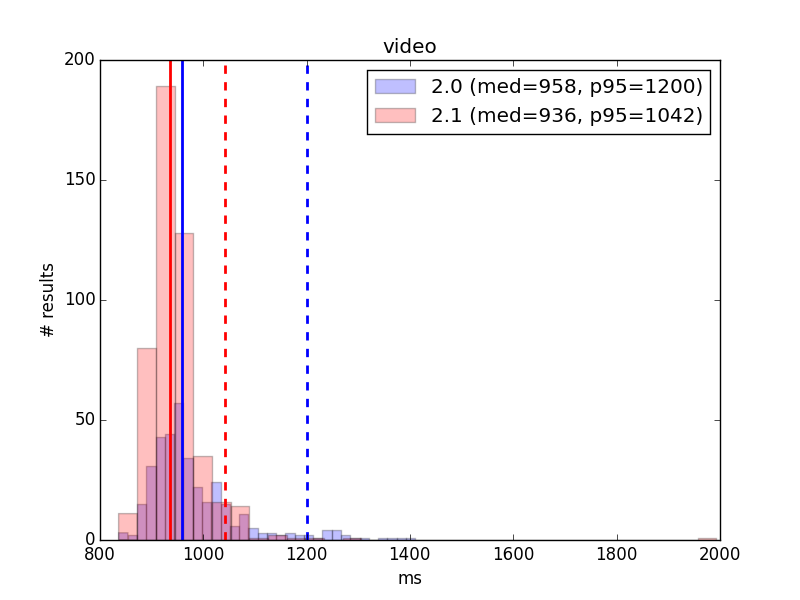

Video

2.0

- 240 data points

- Median: 960 ms

- p95: 1202 ms

2.1

- 240 data points

- Median: 935 ms

- p95: 1035 ms

Result: PASS

Comment: TBD

Raw Data

Will be added after full dataset is updated.